Locust MCP Server

An MCP server for running Locust load tests. Configure test parameters like host, users, and spawn rate via environment variables.

🚀 ⚡️ locust-mcp-server

A Model Context Protocol (MCP) server implementation for running Locust load tests. This server enables seamless integration of Locust load testing capabilities with AI-powered development environments.

✨ Features

- Simple integration with the Model Context Protocol framework

- Support for headless and UI modes

- Configurable test parameters (users, spawn rate, runtime)

- Easy-to-use API for running Locust load tests

- Real-time test execution output

- HTTP/HTTPS protocol support out of the box

- Custom task scenarios support

🔧 Prerequisites

Before you begin, ensure you have the following installed:

- Python 3.13 or higher

- uv package manager (Installation guide)

📦 Installation

- Clone the repository:

git clone https://github.com/qainsights/locust-mcp-server.git

- Install the required dependencies:

uv pip install -r requirements.txt

- Set up environment variables (optional):

Create a .env file in the project root:

LOCUST_HOST=http://localhost:8089 # Default host for your tests

LOCUST_USERS=3 # Default number of users

LOCUST_SPAWN_RATE=1 # Default user spawn rate

LOCUST_RUN_TIME=10s # Default test duration
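As a rough illustration of how these variables could be consumed, the sketch below reads them from the process environment and falls back to the defaults listed above. The `LocustDefaults` class and `load_defaults` function are hypothetical names used only for this example, not part of the server's code, and the sketch assumes the values from .env have already been exported into the environment (for instance by a dotenv loader).

```python
import os
from dataclasses import dataclass


@dataclass
class LocustDefaults:
    """Hypothetical container for the four documented settings."""
    host: str
    users: int
    spawn_rate: int
    run_time: str


def load_defaults(env=None):
    """Read the LOCUST_* variables, falling back to the documented defaults."""
    # Accept an explicit mapping so the function is easy to test.
    env = os.environ if env is None else env
    return LocustDefaults(
        host=env.get("LOCUST_HOST", "http://localhost:8089"),
        users=int(env.get("LOCUST_USERS", "3")),
        spawn_rate=int(env.get("LOCUST_SPAWN_RATE", "1")),
        run_time=env.get("LOCUST_RUN_TIME", "10s"),
    )


if __name__ == "__main__":
    print(load_defaults())
```

Passing a plain dictionary instead of relying on `os.environ` makes it easy to check how overrides behave without touching the real environment.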

🚀 Getting Started

- Create a Locust test script (e.g., hello.py):

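import time

from locust import HttpUser, task, between


class QuickstartUser(HttpUser):
    # Each simulated user waits 1 to 5 seconds between tasks
    wait_time = between(1, 5)

    @task
    def hello_world(self):
        self.client.get("/hello")
        self.client.get("/world")

    @task(3)
    def view_items(self):
        # Group all item requests under a single "/item" entry in the stats
        for item_id in range(10):
            self.client.get(f"/item?id={item_id}", name="/item")
            time.sleep(1)

    def on_start(self):
        # Runs once per simulated user before any task is scheduled
        self.client.post("/login", json={"username": "foo", "password": "bar"})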

- Configure the MCP server in your MCP client (Claude Desktop, Cursor, Windsurf, and others) using the configuration below, replacing the uv and repository paths with your own:

{
  "mcpServers": {
    "locust": {
      "command": "/Users/naveenkumar/.local/bin/uv",
      "args": [
        "--directory",
        "/Users/naveenkumar/Gits/locust-mcp-server",
        "run",
        "locust_server.py"
      ]
    }
  }
}

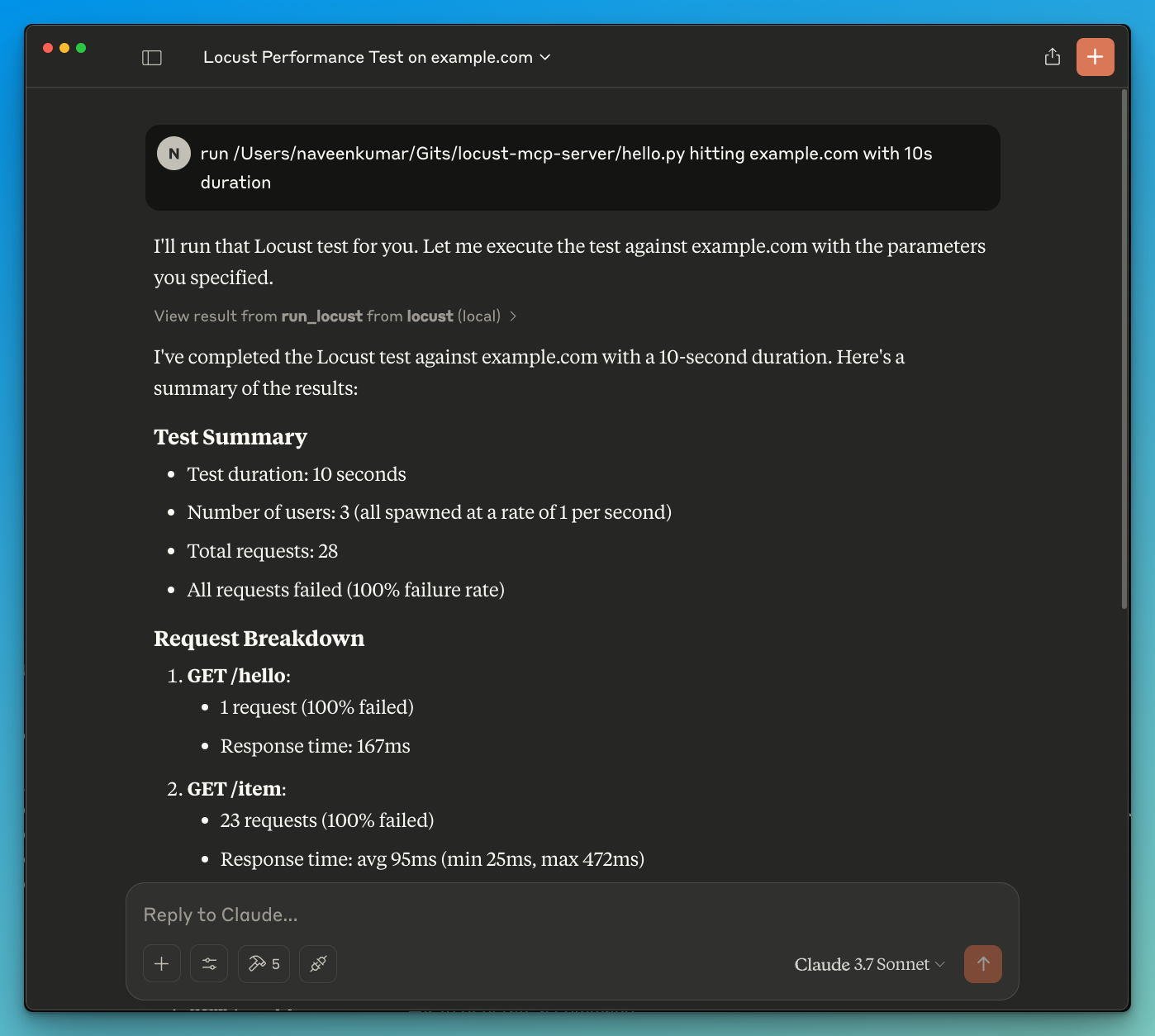

- Now ask the LLM to run the test, e.g., "run locust test for hello.py". The Locust MCP server will use the following tool to start the test:

run_locust: Run a test with configurable options for headless mode, host, runtime, users, and spawn rate
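The MCP client configuration shown earlier in this section contains absolute paths that are specific to one machine. The sketch below is one way to generate an equivalent configuration for your own setup. The `build_mcp_config` helper is a hypothetical name, and the repository path passed in the usage example is an assumption to be replaced with wherever you cloned the project.

```python
import json
import shutil
from pathlib import Path


def build_mcp_config(repo_dir, uv_path=None):
    """Return a dict with the same shape as the client configuration above."""
    # Prefer an explicit path, then whatever "uv" resolves to on PATH.
    command = uv_path or shutil.which("uv") or "uv"
    return {
        "mcpServers": {
            "locust": {
                "command": command,
                "args": [
                    "--directory",
                    str(Path(repo_dir).expanduser()),
                    "run",
                    "locust_server.py",
                ],
            }
        }
    }


if __name__ == "__main__":
    # Assumed clone location; adjust to your environment.
    config = build_mcp_config("~/Gits/locust-mcp-server")
    print(json.dumps(config, indent=2))
```

The printed JSON can be pasted into the client's MCP settings file. Where that file lives depends on the client and is not covered here.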

📝 API Reference

Run Locust Test

run_locust(
    test_file: str,
    headless: bool = True,
    host: str = "http://localhost:8089",
    runtime: str = "10s",
    users: int = 3,
    spawn_rate: int = 1
)

Parameters:

- test_file: Path to your Locust test script

- headless: Run in headless mode (True) or with UI (False)

- host: Target host to load test

- runtime: Test duration (e.g., "30s", "1m", "5m")

- users: Number of concurrent users to simulate

- spawn_rate: Rate at which users are spawned
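To make the parameters more concrete, here is a minimal sketch of how they might translate into a Locust command line. This is an assumption about the mapping, not the server's actual implementation, and `build_locust_command` is a hypothetical helper. The flags themselves (`-f`, `--host`, `--users`, `--spawn-rate`, `--headless`, `--run-time`) are standard Locust CLI options. The sketch passes `--run-time` only in headless mode, which is a design choice made for this example.

```python
import shlex


def build_locust_command(
    test_file,
    headless=True,
    host="http://localhost:8089",
    runtime="10s",
    users=3,
    spawn_rate=1,
):
    """Build (but do not run) a locust command from run_locust-style arguments."""
    cmd = [
        "locust",
        "-f", test_file,
        "--host", host,
        "--users", str(users),
        "--spawn-rate", str(spawn_rate),
    ]
    if headless:
        # Without the web UI, the run needs an explicit duration to stop on its own.
        cmd += ["--headless", "--run-time", runtime]
    return cmd


if __name__ == "__main__":
    # Print the command as a shell-safe string for inspection.
    print(shlex.join(build_locust_command("hello.py", users=10, runtime="1m")))
```

Returning an argument list rather than a single string keeps the command safe to hand to `subprocess.run` without shell quoting concerns.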

✨ Use Cases

- LLM-powered results analysis

- Effective debugging with the help of an LLM

🤝 Contributing

Contributions are welcome! Please feel free to submit a Pull Request.

📄 License

This project is licensed under the MIT License - see the LICENSE file for details.
