Deepwiki

Fetches content from deepwiki.com and converts it into LLM-readable markdown.

Deepwiki MCP Server

⚠️ IMPORTANT NOTICE: This server is currently not working since DeepWiki has cut off the possibility to scrape it. We recommend using the official DeepWiki MCP server at https://docs.devin.ai/work-with-devin/deepwiki-mcp for the time being.

This is an unofficial Deepwiki MCP Server

It takes a Deepwiki URL via MCP, crawls all relevant pages, converts them to Markdown, and returns either one document or a list by page.

Features

- 🔒 Domain Safety: Only processes URLs from deepwiki.com

- 🧹 HTML Sanitization: Strips headers, footers, navigation, scripts, and ads

- 🔗 Link Rewriting: Adjusts links to work in Markdown

- 📄 Multiple Output Formats: Get one document or structured pages

- 🚀 Performance: Fast crawling with adjustable concurrency and depth

- NLP: It's to search just for the library name

Usage

Prompts you can use:

deepwiki fetch how can i use gpt-image-1 with "vercel ai" sdk

deepwiki fetch how can i create new blocks in shadcn?

deepwiki fetch i want to understand how X works

Fetch complete Documentation (Default)

use deepwiki https://deepwiki.com/shadcn-ui/ui

use deepwiki multiple pages https://deepwiki.com/shadcn-ui/ui

Single Page

use deepwiki fetch single page https://deepwiki.com/tailwindlabs/tailwindcss/2.2-theme-system

Get by shortform

use deepwiki fetch tailwindlabs/tailwindcss

deepwiki fetch library

deepwiki fetch url

deepwiki fetch <name>/<repo>

deepwiki multiple pages ...

deepwiki single page url ...

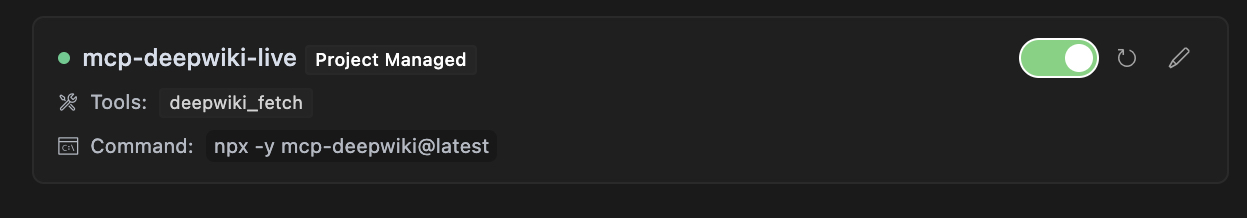

Cursor

Add this to .cursor/mcp.json file.

{

"mcpServers": {

"mcp-deepwiki": {

"command": "npx",

"args": ["-y", "mcp-deepwiki@latest"]

}

}

}

MCP Tool Integration

The package registers a tool named deepwiki_fetch that you can use with any MCP-compatible client:

{

"action": "deepwiki_fetch",

"params": {

"url": "https://deepwiki.com/user/repo",

"mode": "aggregate",

"maxDepth": "1"

}

}

Parameters

url(required): The starting URL of the Deepwiki repositorymode(optional): Output mode, either "aggregate" for a single Markdown document (default) or "pages" for structured page datamaxDepth(optional): Maximum depth of pages to crawl (default: 10)

Response Format

Success Response (Aggregate Mode)

{

"status": "ok",

"data": "# Page Title\n\nPage content...\n\n---\n\n# Another Page\n\nMore content...",

"totalPages": 5,

"totalBytes": 25000,

"elapsedMs": 1200

}

Success Response (Pages Mode)

{

"status": "ok",

"data": [

{

"path": "index",

"markdown": "# Home Page\n\nWelcome to the repository."

},

{

"path": "section/page1",

"markdown": "# First Page\n\nThis is the first page content."

}

],

"totalPages": 2,

"totalBytes": 12000,

"elapsedMs": 800

}

Error Response

{

"status": "error",

"code": "DOMAIN_NOT_ALLOWED",

"message": "Only deepwiki.com domains are allowed"

}

Partial Success Response

{

"status": "partial",

"data": "# Page Title\n\nPage content...",

"errors": [

{

"url": "https://deepwiki.com/user/repo/page2",

"reason": "HTTP error: 404"

}

],

"totalPages": 1,

"totalBytes": 5000,

"elapsedMs": 950

}

Progress Events

When using the tool, you'll receive progress events during crawling:

Fetched https://deepwiki.com/user/repo: 12500 bytes in 450ms (status: 200)

Fetched https://deepwiki.com/user/repo/page1: 8750 bytes in 320ms (status: 200)

Fetched https://deepwiki.com/user/repo/page2: 6200 bytes in 280ms (status: 200)

Local Development - Installation

Local Usage

{

"mcpServers": {

"mcp-deepwiki": {

"command": "node",

"args": ["./bin/cli.mjs"]

}

}

}

From Source

# Clone the repository

git clone https://github.com/regenrek/deepwiki-mcp.git

cd deepwiki-mcp

# Install dependencies

npm install

# Build the package

npm run build

Direct API Calls

For HTTP transport, you can make direct API calls:

curl -X POST http://localhost:3000/mcp \

-H "Content-Type: application/json" \

-d '{

"id": "req-1",

"action": "deepwiki_fetch",

"params": {

"url": "https://deepwiki.com/user/repo",

"mode": "aggregate"

}

}'

Configuration

Environment Variables

DEEPWIKI_MAX_CONCURRENCY: Maximum concurrent requests (default: 5)DEEPWIKI_REQUEST_TIMEOUT: Request timeout in milliseconds (default: 30000)DEEPWIKI_MAX_RETRIES: Maximum retry attempts for failed requests (default: 3)DEEPWIKI_RETRY_DELAY: Base delay for retry backoff in milliseconds (default: 250)

To configure these, create a .env file in the project root:

DEEPWIKI_MAX_CONCURRENCY=10

DEEPWIKI_REQUEST_TIMEOUT=60000

DEEPWIKI_MAX_RETRIES=5

DEEPWIKI_RETRY_DELAY=500

Docker Deployment (Untested)

Build and run the Docker image:

# Build the image

docker build -t mcp-deepwiki .

# Run with stdio transport (for development)

docker run -it --rm mcp-deepwiki

# Run with HTTP transport (for production)

docker run -d -p 3000:3000 mcp-deepwiki --http --port 3000

# Run with environment variables

docker run -d -p 3000:3000 \

-e DEEPWIKI_MAX_CONCURRENCY=10 \

-e DEEPWIKI_REQUEST_TIMEOUT=60000 \

mcp-deepwiki --http --port 3000

Development

# Install dependencies

pnpm install

# Run in development mode with stdio

pnpm run dev-stdio

# Run tests

pnpm test

# Run linter

pnpm run lint

# Build the package

pnpm run build

Troubleshooting

Common Issues

-

Permission Denied: If you get EACCES errors when running the CLI, make sure to make the binary executable:

chmod +x ./node_modules/.bin/mcp-deepwiki -

Connection Refused: Make sure the port is available and not blocked by a firewall:

# Check if port is in use lsof -i :3000 -

Timeout Errors: For large repositories, consider increasing the timeout and concurrency:

DEEPWIKI_REQUEST_TIMEOUT=60000 DEEPWIKI_MAX_CONCURRENCY=10 npx mcp-deepwiki

Contributing

We welcome contributions! Please see CONTRIBUTING.md for details.

License

MIT

Links

- X/Twitter: @kregenrek

- Bluesky: @kevinkern.dev

Courses

- Learn Cursor AI: Ultimate Cursor Course

- Learn to build software with AI: instructa.ai

See my other projects:

- AI Prompts - Curated AI Prompts for Cursor AI, Cline, Windsurf and Github Copilot

- codefetch - Turn code into Markdown for LLMs with one simple terminal command

- aidex A CLI tool that provides detailed information about AI language models, helping developers choose the right model for their needs.# tool-starter

相关服务器

Bright Data

赞助Discover, extract, and interact with the web - one interface powering automated access across the public internet.

MyBrowserAPI

A browser API for interacting with web services like X, Reddit, ChatGPT, and WhatsApp using Puppeteer.

Webclaw

Web content extraction for LLM pipelines — clean markdown or structured JSON from any URL using browser-grade TLS fingerprinting, no headless browser required. CLI, REST API, and MCP server.

LinkRescue

MCP server that exposes LinkRescue's broken link scanning, monitoring, and fix suggestion capabilities to AI agents (Claude, Cursor, etc.).

HotNews MCP Server

Provides real-time hot trending topics from major Chinese social platforms and news sites.

ScreenshotOne

Render website screenshots with ScreenshotOne

Github to MCP

Convert GitHub repositories to MCP servers automatically. Extract tools from OpenAPI, GraphQL & REST APIs for Claude Desktop, Cursor, Windsurf, Cline & VS Code. AI-powered code generation creates type-safe TypeScript/Python MCP servers. Zero config setup - just paste a repo URL. Built for AI assistants & LLM tool integration.

Plasmate MCP

Agent-native headless browser that converts web pages to structured Semantic Object Model (SOM) JSON -- 4x fewer tokens than raw HTML with lower latency on Claude and GPT-4o.

Social APIS Hub

The unified API for social media data - built for developers and AI agents.

YouTube Insights MCP Server

Extract insights from YouTube videos, including subtitles, video discovery, and channel information.

DOMShell

Browse the web with filesystem commands. 38 MCP tools let AI agents ls, cd, grep, click, and type through Chrome via a Chrome Extension.