AI Intervention Agent

An MCP server for real-time user intervention in AI-assisted development workflows.

When using AI CLIs/IDEs, agents can drift from your intent. This project gives you a simple way to intervene at key moments, review context in a Web UI, and send your latest instructions via `interactive_feedback` so the agent stays on track.

Works with Cursor, VS Code, Claude Code, Augment, Windsurf, Trae, and more.

Quick start

Option 1: Using uvx (Recommended)

Configure your AI tool to launch the MCP server directly via uvx (this automatically installs and runs the latest version):

```json
{
  "mcpServers": {
    "ai-intervention-agent": {
      "command": "uvx",
      "args": ["ai-intervention-agent"],
      "timeout": 600,
      "autoApprove": ["interactive_feedback"]
    }
  }
}
```

Option 2: Using pip

- First, install the package (remember to run `pip install --upgrade ai-intervention-agent` periodically to get updates):

```bash
pip install ai-intervention-agent
```

- Configure your AI tool to launch the installed MCP server:

```json
{
  "mcpServers": {
    "ai-intervention-agent": {
      "command": "ai-intervention-agent",
      "args": [],
      "timeout": 600,
      "autoApprove": ["interactive_feedback"]
    }
  }
}
```

> [!NOTE]
> `interactive_feedback` is a long-running tool. Some clients have a hard request timeout, so the Web UI provides a countdown with an auto re-submit option to keep sessions alive.
>
> - Default: `feedback.frontend_countdown = 240` seconds
> - Max: 250 seconds (to stay under the common 300-second hard timeout)
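The relationship between these numbers can be stated as a simple rule: the countdown must expire, and trigger the auto re-submit, before the client's hard timeout does. The sketch below assumes all values are in seconds and assumes an over-limit setting is clamped to the 250-second maximum rather than rejected; the constant and function names are hypothetical, not the project's code.

```python
from typing import Optional

# Documented values, in seconds (names are hypothetical).
DEFAULT_FRONTEND_COUNTDOWN = 240
MAX_FRONTEND_COUNTDOWN = 250
COMMON_HARD_TIMEOUT = 300


def effective_countdown(configured: Optional[int] = None) -> int:
    """Return the countdown to use.

    Assumption: a missing value falls back to the default, and values above
    the documented maximum are clamped instead of raising an error.
    """
    if configured is None:
        return DEFAULT_FRONTEND_COUNTDOWN
    return min(configured, MAX_FRONTEND_COUNTDOWN)


def resubmits_in_time(countdown: int, hard_timeout: int = COMMON_HARD_TIMEOUT) -> bool:
    """True if an auto re-submit at `countdown` seconds beats the client's hard timeout."""
    return countdown < hard_timeout
```

For example, `effective_countdown(400)` returns 250, and `resubmits_in_time(250)` is `True`.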

- (Optional) Customize your config:
  - On first run, `config.toml` will be created under your OS user config directory (see docs/configuration.md).
  - Example:

```toml
[web_ui]
port = 8080

[feedback]
frontend_countdown = 240
backend_max_wait = 600
```

Prompt snippet (copy/paste)

- Only ask me through the MCP `ai-intervention-agent` tool; do not ask directly in chat or ask for end-of-task confirmation in chat.

- If a tool call fails, keep asking again through `ai-intervention-agent` instead of making assumptions, until the tool call succeeds.

ai-intervention-agent usage details:

- If requirements are unclear, use `ai-intervention-agent` to ask for clarification with predefined options.

- If there are multiple approaches, use `ai-intervention-agent` to ask instead of deciding unilaterally.

- If a plan/strategy needs to change, use `ai-intervention-agent` to ask instead of deciding unilaterally.

- Before finishing a request, always ask for feedback via `ai-intervention-agent`.

- Do not end the conversation/request unless the user explicitly allows it via `ai-intervention-agent`.

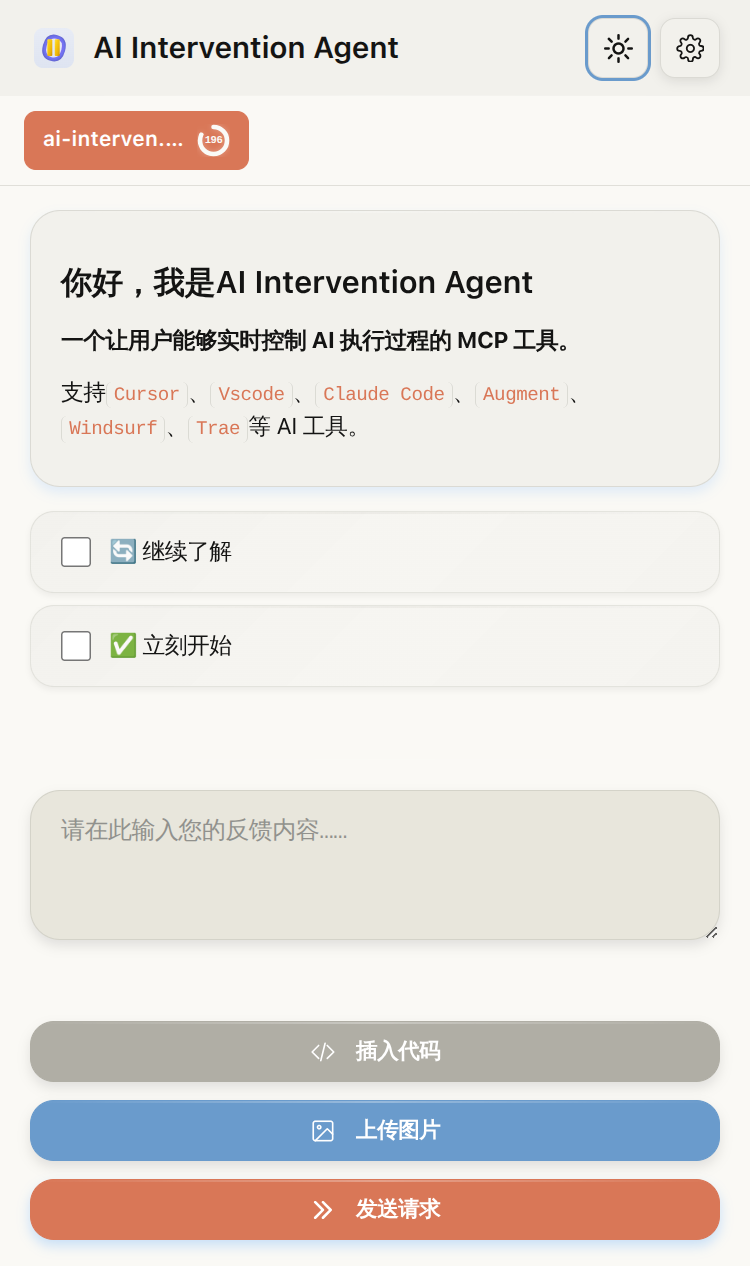

Screenshots

Feedback page (auto switches between dark/light)

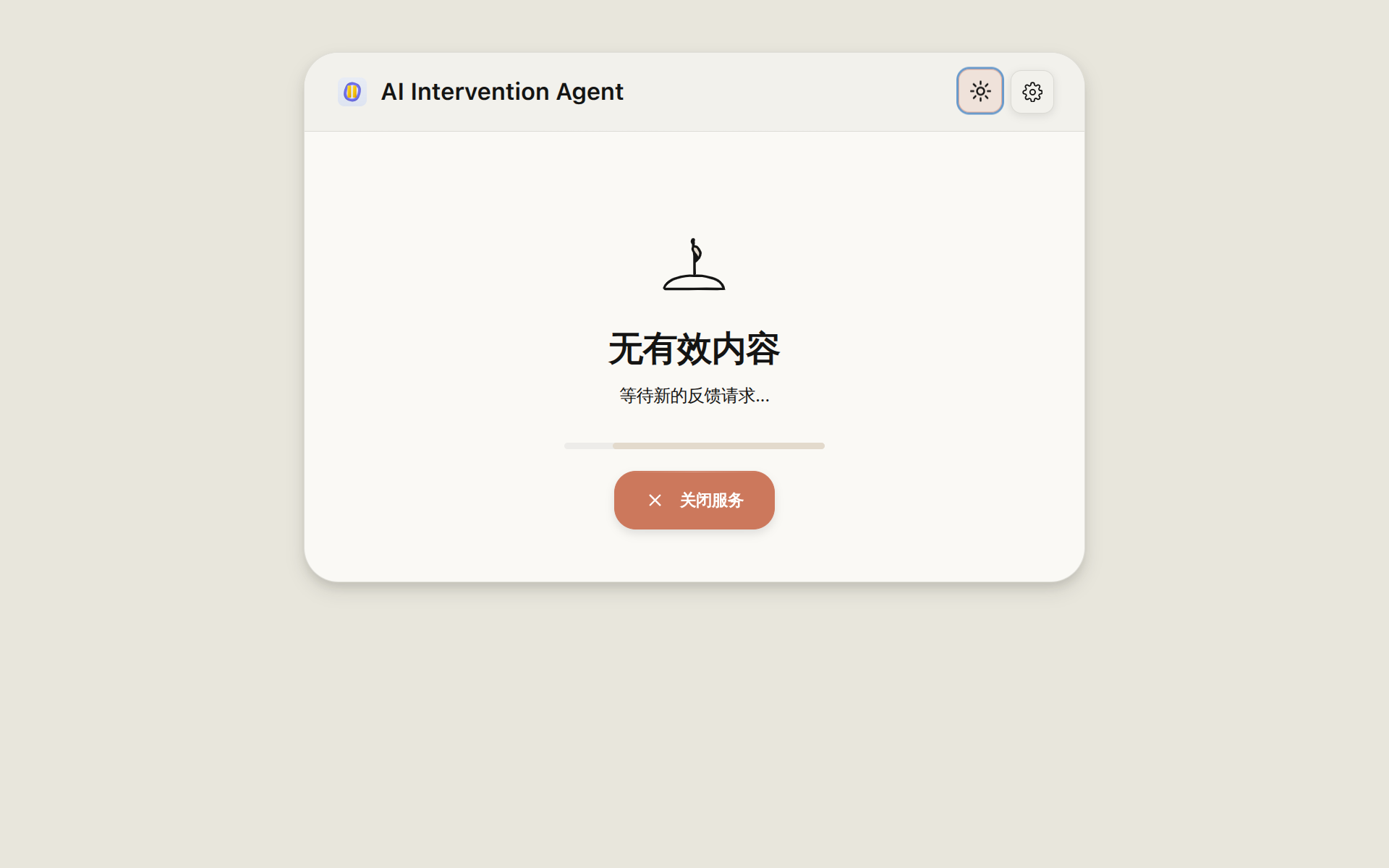

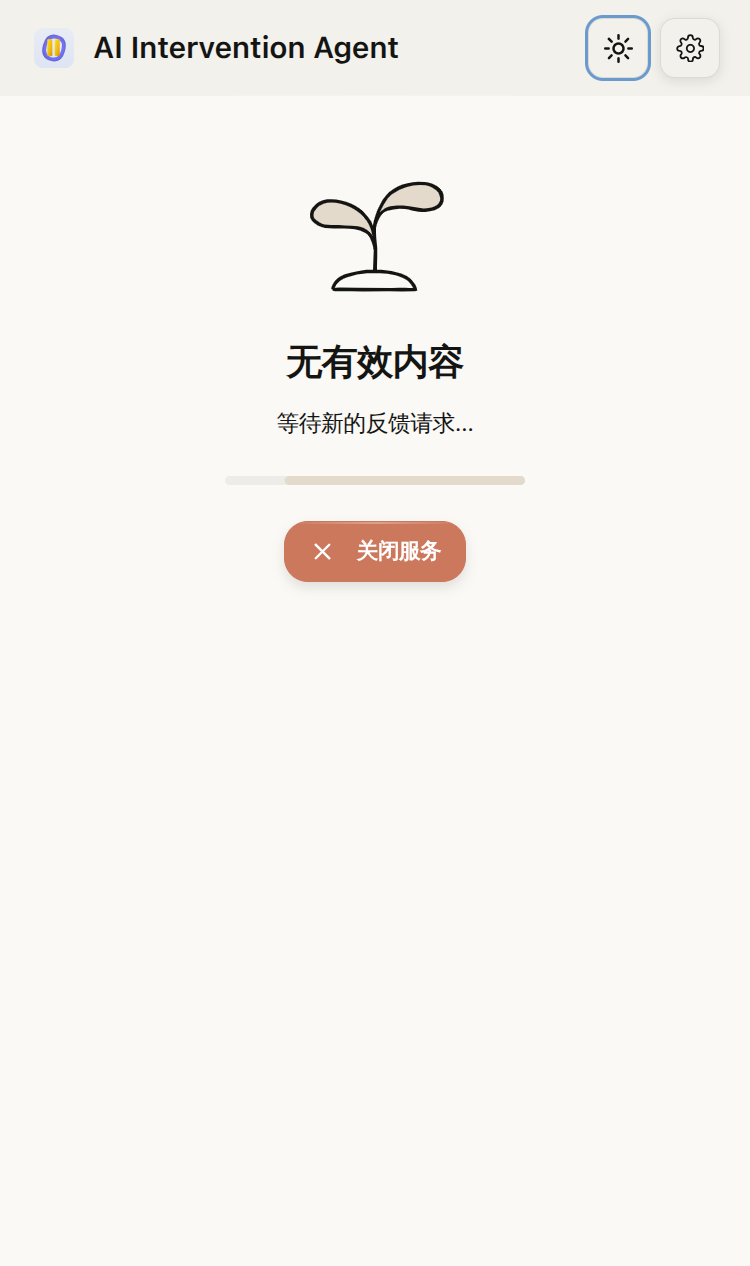

More screenshots (empty state + settings)

Empty state (auto switches between dark/light)

Settings (dark)

Key features

- Real-time intervention: the agent pauses and waits for your input via `interactive_feedback`

- Web UI: Markdown, code highlighting, and math rendering

- Multi-task: tab switching with independent countdown timers

- Auto re-submit: keep sessions alive by auto-submitting at timeout

- Notifications: web / sound / system / Bark

- SSH-friendly: works well with SSH port forwarding

How it works

- Your AI client calls the MCP tool `interactive_feedback`.

- The MCP server ensures the Web UI process is running, then creates a task via HTTP (`POST /api/tasks`).

- The browser (or VS Code Webview) renders tasks by polling the Web UI API.

- When you submit feedback, the Web UI completes the task in the task queue.

- The MCP server polls for completion (`GET /api/tasks/{task_id}`) and returns your feedback (text + images) to the AI client.

- Optionally, the MCP server triggers notifications (Bark / system / sound / web hints) based on your config.
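The MCP-side half of this flow is a create-then-poll loop. The sketch below illustrates that pattern under stated assumptions: the `Transport` callable stands in for a real HTTP client, and the JSON field names (`prompt`, `task_id`, `status`, `result`) are illustrative guesses rather than the documented schema (see docs/api/index.md for the real one). `FakeWebUI` is a hypothetical in-memory test double.

```python
import time
from typing import Any, Callable, Dict, Optional

# Hypothetical transport: (method, path, json_body) -> decoded JSON response.
# In practice this would wrap an HTTP client pointed at the Web UI.
Transport = Callable[[str, str, Optional[Dict[str, Any]]], Dict[str, Any]]


def request_feedback(
    transport: Transport,
    prompt: str,
    poll_interval: float = 1.0,
    max_wait: float = 600.0,
) -> Dict[str, Any]:
    """Create a task, then poll until it completes or `max_wait` elapses.

    Field names are assumptions for illustration, not the real API schema.
    """
    created = transport("POST", "/api/tasks", {"prompt": prompt})
    task_id = created["task_id"]
    deadline = time.monotonic() + max_wait
    while time.monotonic() < deadline:
        task = transport("GET", f"/api/tasks/{task_id}", None)
        if task.get("status") == "completed":
            return task["result"]  # e.g. {"text": ..., "images": [...]}
        time.sleep(poll_interval)
    raise TimeoutError(f"task {task_id} not completed within {max_wait}s")


class FakeWebUI:
    """In-memory stand-in for the Web UI API that completes after N polls."""

    def __init__(self, complete_after: int = 2) -> None:
        self.complete_after = complete_after
        self.polls = 0
        self.prompts: Dict[str, str] = {}

    def __call__(
        self, method: str, path: str, body: Optional[Dict[str, Any]]
    ) -> Dict[str, Any]:
        if method == "POST" and path == "/api/tasks":
            task_id = str(len(self.prompts) + 1)
            self.prompts[task_id] = body["prompt"] if body else ""
            return {"task_id": task_id}
        if method == "GET" and path.startswith("/api/tasks/"):
            self.polls += 1
            if self.polls >= self.complete_after:
                return {"status": "completed", "result": {"text": "Looks good", "images": []}}
            return {"status": "pending"}
        raise ValueError(f"unexpected request: {method} {path}")
```

One consequence of this design is that the MCP server only needs the task ID between polls; the Web UI owns the task queue.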

VS Code extension (optional)

| Item | Value |
|---|---|
| Purpose | Embed the interaction panel in VS Code’s sidebar so you don't have to switch to a browser. |
| Install (Open VSX) | Open VSX |
| Download VSIX (GitHub Release) | GitHub Releases |
| Setting | `ai-intervention-agent.serverUrl` (should match your Web UI URL, e.g. `http://localhost:8080`; you can change `web_ui.port` in `config.toml`) |
| Other settings | `ai-intervention-agent.logLevel` (Output → AI Intervention Agent); `ai-intervention-agent.enableAppleScript` (macOS only; for the “Run AppleScript” command; default: `false`. macOS native notifications are controlled separately and are enabled by default.) |
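Because `serverUrl` and `web_ui.port` are configured in two different places, they can drift apart. A quick consistency check might look like the following; `server_url_matches` is a hypothetical helper, not part of the extension, and it compares only the port, not the host.

```python
from urllib.parse import urlsplit


def server_url_matches(server_url: str, web_ui_port: int) -> bool:
    """Check that the VS Code serverUrl points at the configured Web UI port.

    URLs without an explicit port use the scheme default
    (80 for http, 443 for https), per standard URL semantics.
    """
    parts = urlsplit(server_url)
    port = parts.port
    if port is None:
        port = {"http": 80, "https": 443}.get(parts.scheme)
    return port == web_ui_port
```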

Configuration

| Item | Value |
|---|---|
| Docs (English) | docs/configuration.md |
| Docs (简体中文) | docs/configuration.zh-CN.md |
| Default template | config.toml.default (copied to config.toml on first run) |

| OS | User config directory |
|---|---|
| Linux | ~/.config/ai-intervention-agent/ |
| macOS | ~/Library/Application Support/ai-intervention-agent/ |
| Windows | %APPDATA%/ai-intervention-agent/ |
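The OS table above, together with the first-run copy of `config.toml.default`, can be sketched as follows. This illustrates the documented behavior under assumptions and is not the project's implementation: OS names follow `platform.system()` output, unlisted systems fall back to the Linux row, and `XDG_CONFIG_HOME` is ignored because the table does not mention it.

```python
import shutil
from pathlib import Path
from typing import Mapping

APP_NAME = "ai-intervention-agent"


def user_config_dir(system: str, home: Path, env: Mapping[str, str]) -> Path:
    """Map an OS name (as returned by platform.system()) to the table above."""
    if system == "Windows":
        return Path(env["APPDATA"]) / APP_NAME
    if system == "Darwin":  # macOS
        return home / "Library" / "Application Support" / APP_NAME
    # Assumption: Linux and other Unix-likes use ~/.config.
    return home / ".config" / APP_NAME


def ensure_config(config_dir: Path, template: Path) -> Path:
    """Copy the default template to config.toml only if it does not exist yet."""
    config_dir.mkdir(parents=True, exist_ok=True)
    target = config_dir / "config.toml"
    if not target.exists():
        shutil.copyfile(template, target)
    return target
```

For example, `user_config_dir(platform.system(), Path.home(), os.environ)` would give the directory for the current machine.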

Architecture

```mermaid
flowchart TD
    subgraph CLIENTS["AI clients"]
        AI_CLIENT["AI CLI / IDE<br/>(Cursor, VS Code, Claude Code, ...)"]
    end

    subgraph MCP_PROC["MCP server process (Python)"]
        MCP_SRV["ai-intervention-agent<br/>(server.py / FastMCP)"]
        MCP_TOOL["MCP tool<br/>interactive_feedback"]
        SVC_MGR["Service manager<br/>(ServiceManager)"]
        CFG_MGR_MCP["Config manager<br/>(config_manager.py)"]
        NOTIF_MGR["Notification manager<br/>(notification_manager.py)"]
        NOTIF_PROVIDERS["Providers<br/>(notification_providers.py)"]
        MCP_SRV --> MCP_TOOL
        MCP_SRV --> CFG_MGR_MCP
        MCP_SRV --> NOTIF_MGR
        NOTIF_MGR --> NOTIF_PROVIDERS
    end

    subgraph WEB_PROC["Web UI process (Python / Flask)"]
        WEB_SRV["Web UI service<br/>(web_ui.py / Flask)"]
        WEB_CFG_MGR["Config manager<br/>(config_manager.py)"]
        HTTP_API["HTTP API<br/>(/api/*)"]
        TASK_Q["Task queue<br/>(task_queue.py)"]
        WEB_FRONTEND["Browser frontend<br/>(static/js/app.js + multi_task.js)"]
        WEB_SRV --> HTTP_API
        WEB_SRV --> TASK_Q
        WEB_SRV --> WEB_CFG_MGR
        WEB_FRONTEND <-->|poll /api/tasks| HTTP_API
        WEB_FRONTEND -->|submit feedback| HTTP_API
    end

    subgraph VSCODE_PROC["VS Code extension (Node)"]
        VSCODE_EXT["Extension host<br/>(packages/vscode/extension.js)"]
        VSCODE_WEBVIEW["Webview frontend<br/>(webview.js + webview-ui.js<br/>+ webview-notify-core.js + webview-settings-ui.js)"]
        VSCODE_EXT --> VSCODE_WEBVIEW
        VSCODE_WEBVIEW <-->|poll /api/tasks| HTTP_API
        VSCODE_WEBVIEW -->|submit feedback| HTTP_API
    end

    subgraph USER_UI["User interfaces"]
        BROWSER["Browser<br/>(desktop/mobile)"]
        VSCODE["VS Code<br/>(sidebar panel)"]
        USER["User"]
    end

    CFG_FILE["config.toml<br/>(user config directory)"]

    AI_CLIENT -->|MCP call| MCP_TOOL
    MCP_TOOL -->|start/check Web UI| SVC_MGR
    SVC_MGR -->|spawn/monitor| WEB_SRV
    USER -->|input / click| WEB_FRONTEND
    USER -->|input / click| VSCODE_WEBVIEW
    BROWSER -->|load UI| WEB_FRONTEND
    VSCODE -->|render UI| VSCODE_WEBVIEW
    MCP_TOOL -->|"HTTP POST /api/tasks"| HTTP_API
    MCP_TOOL -->|"HTTP GET /api/tasks/{task_id}"| HTTP_API
    WEB_CFG_MGR <-->|read/write + watcher| CFG_FILE
    CFG_MGR_MCP <-->|read/write + watcher| CFG_FILE
    MCP_TOOL -->|trigger notifications| NOTIF_MGR
    NOTIF_PROVIDERS -->|system / sound / Bark / web hints| USER
```
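The `start/check Web UI` and `spawn/monitor` edges in the diagram describe an idempotent start: probe first, spawn only if nothing is listening, then wait until the service responds. A minimal sketch of that pattern follows; `ensure_running` and `tcp_probe` are hypothetical names, not the project's `ServiceManager` API, and the `spawn` callable is left to the caller because the actual launch command is not documented here.

```python
import socket
import time
from typing import Callable


def tcp_probe(host: str, port: int, timeout: float = 0.5) -> bool:
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def ensure_running(
    is_running: Callable[[], bool],
    spawn: Callable[[], None],
    ready_timeout: float = 10.0,
    poll_interval: float = 0.2,
) -> bool:
    """Start the service only if it is not already up, then wait for readiness.

    Returns True if the service is reachable before `ready_timeout` expires.
    """
    if is_running():
        return True  # already up: do not spawn a second copy
    spawn()
    deadline = time.monotonic() + ready_timeout
    while time.monotonic() < deadline:
        if is_running():
            return True
        time.sleep(poll_interval)
    return False
```

For example, `ensure_running(lambda: tcp_probe("127.0.0.1", 8080), spawn)`, where `spawn` would launch the Web UI process (for instance via `subprocess.Popen`).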

Documentation

- API docs index: docs/api/index.md

- API docs (简体中文): docs/api.zh-CN/index.md

- MCP tool reference: docs/mcp_tools.md

- MCP tool reference (简体中文): docs/mcp_tools.zh-CN.md

- i18n contributor guide: docs/i18n.md

- DeepWiki: deepwiki.com/xiadengma/ai-intervention-agent

Related projects

License

MIT License
