Kai - Kubernetes MCP Server

A Model Context Protocol (MCP) server for managing Kubernetes clusters through LLM clients like Claude and Ollama.

Overview

Kai provides a bridge between large language models (LLMs) and your Kubernetes clusters, enabling natural language interaction with Kubernetes resources. The server exposes a comprehensive set of tools for managing clusters, namespaces, pods, deployments, services, and other Kubernetes resources.

Features

Core Workloads

- Pods - Create, list, get, delete, and stream logs

- Deployments - Create, list, describe, and update

- Jobs - Batch workload management (create, get, list, delete)

- CronJobs - Scheduled batch workloads (create, get, list, delete)

Networking

- Services - Create, get, list, and delete

- Ingress - HTTP/HTTPS routing, TLS configuration (create, get, list, update, delete)

Configuration

- ConfigMaps - Configuration management (create, get, list, update, delete)

- Secrets - Secret management (create, get, list, update, delete)

- Namespaces - Namespace management (create, get, list, delete)

Cluster Operations

- Context Management - Switch, list, rename, and delete contexts

- Nodes - Node monitoring, cordoning, and draining

- Cluster Health - Cluster status and resource metrics

Storage

- Persistent Volumes - PV and PVC management

- Storage Classes - Storage class operations

Security

- RBAC - Roles, RoleBindings, and ServiceAccounts

Utilities

- Port Forwarding - Forward ports to pods and services (start, stop, list sessions)

Advanced

- Custom Resources - CRD and custom resource operations

- Events - Event streaming and filtering

- API Discovery - API resource exploration

Requirements

The server connects to your current kubectl context by default. Ensure you have access to a Kubernetes cluster configured for kubectl (e.g., minikube, Rancher Desktop, kind, EKS, GKE, AKS).

Installation

go install github.com/basebandit/kai/cmd/kai@latest

CLI Options

kai [options]

Options:
  -kubeconfig string   Path to kubeconfig file (default "~/.kube/config")
  -context string      Name for the loaded context (default "local")
  -transport string    Transport mode: stdio (default) or sse
  -sse-addr string     Address for SSE server (default ":8080")
  -log-format string   Log format: json (default) or text
  -log-level string    Log level: debug, info, warn, error (default "info")
  -version             Show version information

Logs are written to stderr in structured JSON format by default, making them easy to parse:

{"time":"2024-01-15T10:30:00Z","level":"INFO","msg":"kubeconfig loaded","path":"/home/user/.kube/config","context":"local"}
{"time":"2024-01-15T10:30:00Z","level":"INFO","msg":"starting server","transport":"stdio"}
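Because each log line is a self-contained JSON object, a small program can consume them directly. The following is a minimal Go sketch, assuming only the `time`, `level`, and `msg` keys seen in the two sample lines; the `logEntry` type and `parseLogLine` function are hypothetical names used for illustration and are not part of Kai.

```go
package main

import (
	"encoding/json"
	"fmt"
	"time"
)

// logEntry holds the three keys present in both sample lines.
// Any other keys (path, context, transport, ...) are collected in Extra.
// This type is illustrative and not taken from Kai's source.
type logEntry struct {
	Time  time.Time
	Level string
	Msg   string
	Extra map[string]any
}

// parseLogLine decodes one JSON log line as written to stderr.
func parseLogLine(line string) (logEntry, error) {
	var raw map[string]any
	if err := json.Unmarshal([]byte(line), &raw); err != nil {
		return logEntry{}, fmt.Errorf("decode log line: %w", err)
	}
	e := logEntry{Extra: map[string]any{}}
	for k, v := range raw {
		s, _ := v.(string) // empty if the value is not a string
		switch k {
		case "time":
			// The sample timestamps are RFC 3339 (assumed for all lines).
			t, err := time.Parse(time.RFC3339, s)
			if err != nil {
				return logEntry{}, fmt.Errorf("parse time: %w", err)
			}
			e.Time = t
		case "level":
			e.Level = s
		case "msg":
			e.Msg = s
		default:
			e.Extra[k] = v
		}
	}
	return e, nil
}

func main() {
	line := `{"time":"2024-01-15T10:30:00Z","level":"INFO","msg":"starting server","transport":"stdio"}`
	e, err := parseLogLine(line)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Printf("%s %s transport=%v\n", e.Level, e.Msg, e.Extra["transport"])
}
```

Keeping unknown keys in a map means the parser does not need to change if a log line carries fields other than those shown here.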

Configuration

Claude Desktop

Edit your Claude Desktop configuration:

# macOS
code ~/Library/Application\ Support/Claude/claude_desktop_config.json

# Linux
code ~/.config/Claude/claude_desktop_config.json

Add the server configuration:

{
  "mcpServers": {
    "kubernetes": {
      "command": "/path/to/kai"
    }
  }
}

With custom kubeconfig:

{
  "mcpServers": {
    "kubernetes": {
      "command": "/path/to/kai",
      "args": ["-kubeconfig", "/path/to/custom/kubeconfig"]
    }
  }
}

Cursor

Add to your Cursor MCP settings:

{
  "mcpServers": {
    "kubernetes": {
      "command": "/path/to/kai"
    }
  }
}

Continue

Add to your Continue configuration (~/.continue/config.json):

{
  "experimental": {
    "modelContextProtocolServers": [
      {
        "transport": {
          "type": "stdio",
          "command": "/path/to/kai"
        }
      }
    ]
  }
}

SSE Mode (Web Clients)

For web-based clients or custom integrations, run in SSE mode:

kai -transport=sse -sse-addr=:8080

Then connect to http://localhost:8080/sse.

Custom Kubeconfig

By default, Kai uses ~/.kube/config. You can specify a different kubeconfig:

kai -kubeconfig=/path/to/custom/kubeconfig -context=my-cluster

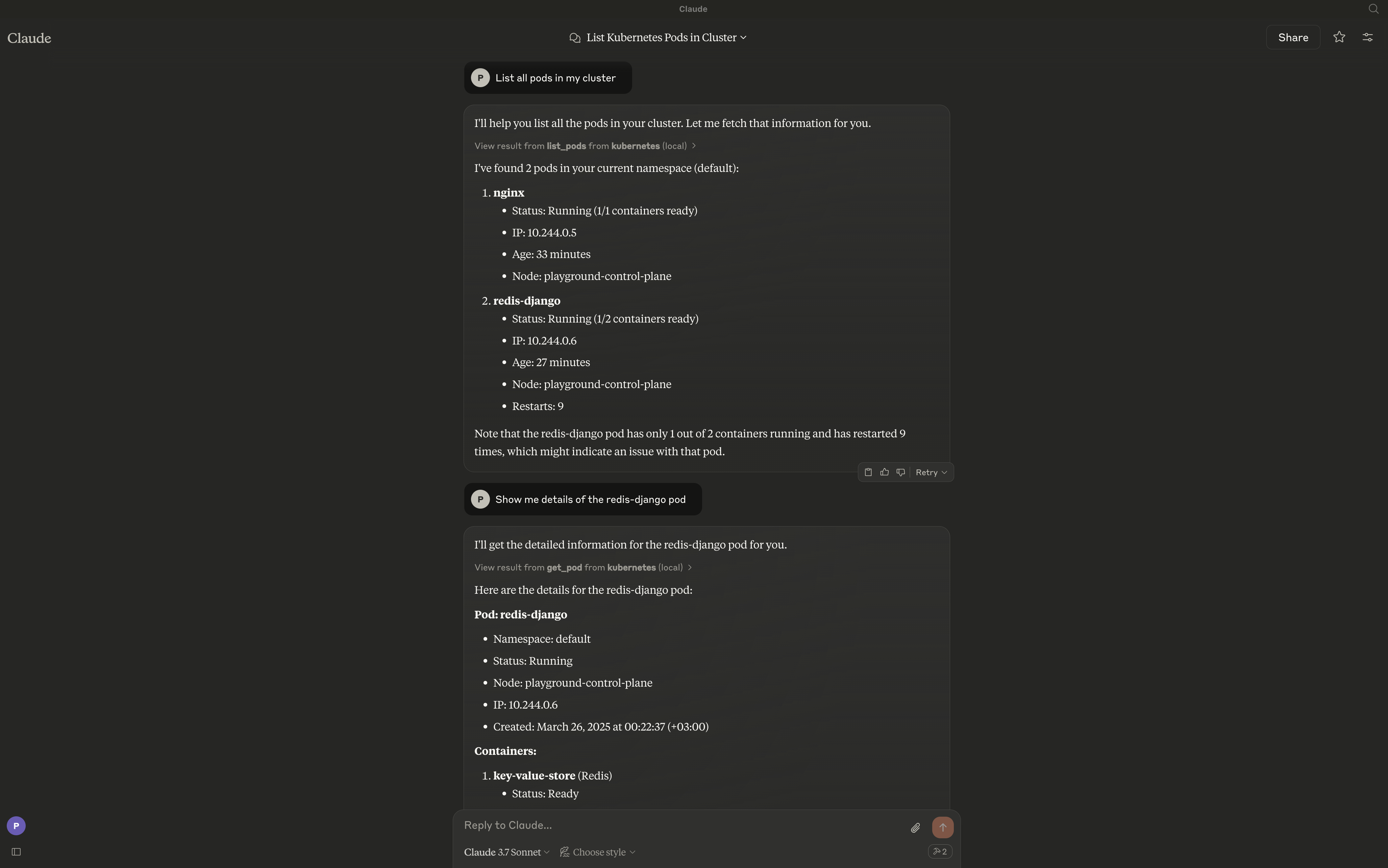

Usage Examples

Once configured, you can interact with your cluster using natural language:

- "List all pods in the default namespace"

- "Create a deployment named nginx with 3 replicas using the nginx:latest image"

- "Show me the logs for pod my-app"

- "Delete the service named backend"

- "Create a cronjob that runs every 5 minutes"

- "Create an ingress for my-app with TLS enabled"

- "Port forward service nginx on port 8080:80"

Contributing

Contributions are welcome! Please see our contributing guidelines for more information.

License

This project is licensed under the MIT License.
