Project Atlantis

A Python MCP host server that allows for dynamic installation of functions and third-party MCP tools.


Meow! Ideally, create an account at www.projectatlantis.ai first and then have the bot walk you through setup (assuming everything works okay).

Basically we have a distributed Linux-style system that provides tool infrastructure for bots. Tools are arranged in folders for easy management across functions and teams. Teams can call each other's functions directly, or of course the bots can just do things themselves. Under the covers it is an MCP-compliant system, but we support hot-loading etc. without the clunky overhead of constantly updating MCP tools.

To get started, clone the repo, do the Python env stuff, set up your API keys as environment variables (OPENROUTER_API_KEY, ANTHROPIC_API_KEY, etc.) and connect this local Python server to the main server (see runServer). We give you all the source code to build your own tool-calling chatbot just like Claude or whatever. See Bot/Kitty/ for working examples using OpenRouter and Anthropic APIs — the bot discovers tools dynamically via search/dir rather than pre-loading them.

*note that Home/game.py is run whenever a new chat is created and will set the default chat tool

Project Atlantis Network

Each MCP server is part of a collaborative network of AI agents and developers. Using the Model Context Protocol, the platform creates an ecosystem where agents can discover and use each other's capabilities across the network. Tools and functions can be shared, discovered, and coordinated between agents—whether for robot-driven frontier development, automation tasks, or any other application. The network architecture enables agents to find and leverage tools from other users, creating a decentralized ecosystem of shared capabilities.

The centerpiece of this project is a Python MCP host (referred to as a 'remote') that lets you install functions and third-party MCP tools on the fly.

Quick Start

- Prerequisites - need to install Python for the server and Node for Lobster (the MCP client); you should also install uv/uvx and node/npx since it seems that MCP needs both

- Python 3.13 seems to be most stable right now because of async support

- Set up your Python virtual environment and install dependencies:

cd python-server

python3 -m venv venv

source venv/bin/activate

pip install -r requirements.txt

- Edit the runServer script in the python-server folder and set the email and service name (it's actually best practice to create a copy "runServerFoo" that you can replace the runServer file with when we do updates):

python server.py \

[email protected] \ # email you use for project atlantis

--api-key=foobar \ # should change online

--host=localhost \ # npx MCP will be looking here to connect to remote (assumes there is at least one running locally)

--port=8000 \

--cloud-host=wss://projectatlantis.ai \ # points to cloud

--cloud-port=443 \

--service-name=home # remote name, can be anything but must be unique across all machines

- The MCP client is now called Lobster. To connect it to Claude Code:

claude mcp add atlantis -- npx atlantis-mcp --port 8000

To connect to Codex:

codex mcp add atlantis -- npx atlantis-mcp --port 8000

The default local MCP port is 8000. If the client reports handshake errors, first check that the Python server and the MCP client are using the same port.
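Before digging into handshake errors, it can help to confirm that anything is listening on the expected port at all. The following is a minimal sketch using only the Python standard library; the helper name `port_is_open` is made up for illustration and is not part of this repo. It only checks that a TCP connection can be opened, not that the process on the other end is the Atlantis server.

```python
import socket


def port_is_open(host: str = "localhost", port: int = 8000, timeout: float = 1.0) -> bool:
    """Return True if something accepts TCP connections on host:port.

    Hypothetical helper: it says nothing about whether the listener is
    actually the Python remote, only that the port is reachable.
    """
    try:
        # create_connection raises OSError (refused, timeout, DNS failure, ...)
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Calling `port_is_open(port=8000)` while the server is running should return True; if it returns False, the client's `--port` value is probably not the problem yet.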

To add Atlantis Open Weather for testing:

claude mcp add --transport stdio weather_forecast --env OPENWEATHER_API_KEY=mykey123 -- uvx --from atlantis-open-weather-mcp start-weather-server

- To connect to Atlantis, sign into https://www.projectatlantis.ai under the same email

- Your remote(s) should autoconnect using email and default api key = 'foobar' (see 'api' command to generate a new key later). The first server to connect will be assigned as your 'default' unless you manually change it later

- Initially the functions and servers folders will be empty except for some examples

- You can run this as a standalone MCP, access it from the cloud, or both

Architecture

Caveat: MCP terminology is already terrible and calling things 'servers' or 'hosts' just makes it more confusing because MCP is inherently p2p

Pieces of the system:

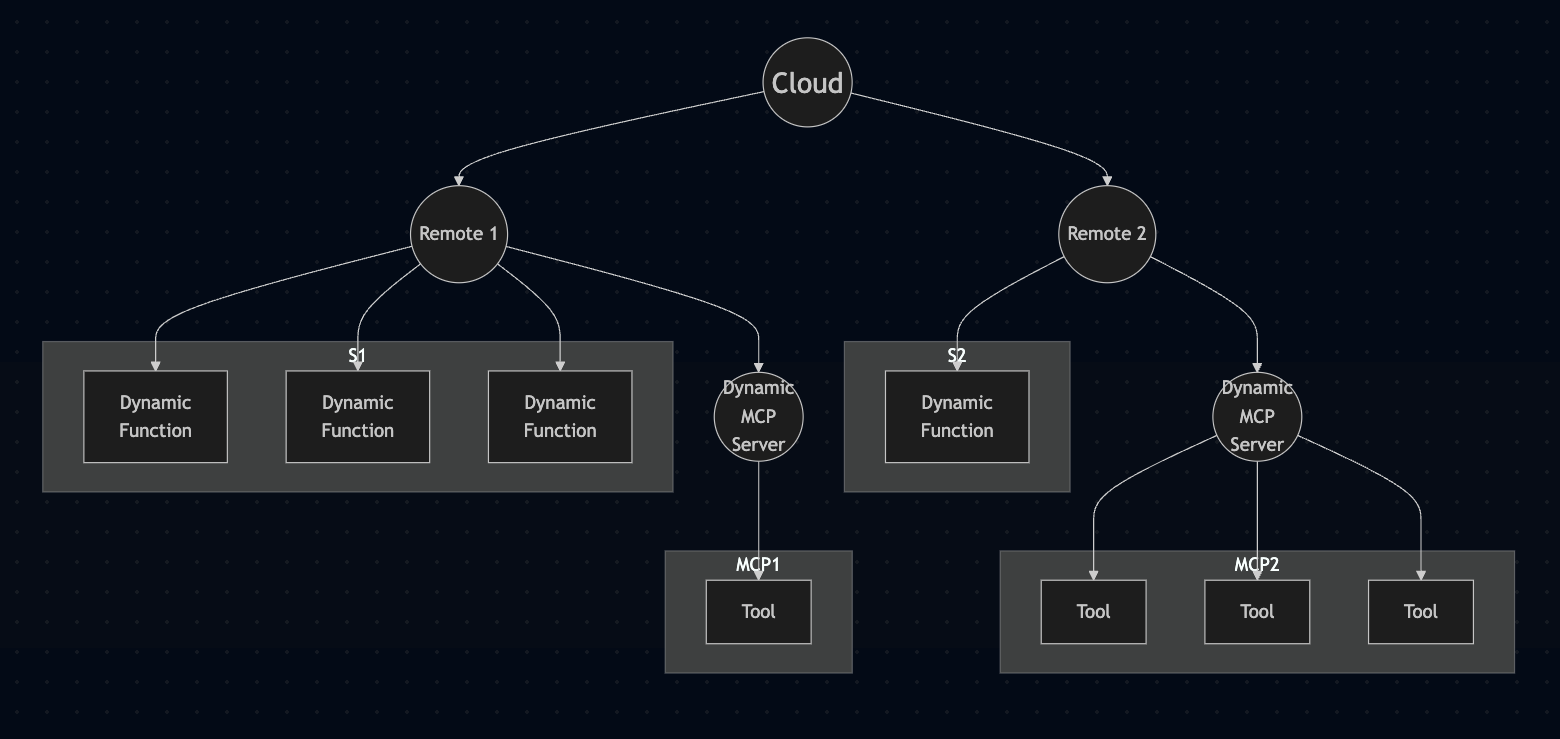

- Cloud: our experimental Atlantis cloud server; mostly a place to share tools and let users bang on them

- Remote: the Python server process found in this repo, officially referred to as an MCP 'host' (you can run >1 either on same box or on different one, just specify different service names)

- Dynamic Function: a simple Python function that you write, acts as a tool

- Dynamic MCP Server: any 3rd party MCP, stored as a JSON config file

Note that MCP auth and security are still being worked out so using the cloud for auth is easier right now

Directories

- Python Remote (MCP P2P server) (python-server/) - Location of our 'remote'. Runs locally but can be controlled remotely

- Lobster (MCP Client) (client/) - lets Claude Code or Codex run Atlantis commands or chat via MCP

- uses npx (easy to install into Claude Code or Codex)

- cloud connection not needed - although it may complain

- only supports a subset of the spec

- can only see tools on the local box (at least right now) or shared tools set to 'public'

Python Server Layout

If you are trying to understand the Python source, start in python-server/server.py and then branch out from there:

- server.py - main entry point and protocol host. It starts the Starlette app, owns the DynamicAdditionServer class, manages WebSocket and cloud Socket.IO connections, and wires together the function/server managers. If you are tracing a tool invocation, the consolidated MCP tools/call handler lives here in DynamicAdditionServer._handle_tools_call(), which then delegates to _execute_tool().

- DynamicFunctionManager.py - owns the dynamic Python tool system under dynamic_functions/. This is where function decorators are defined (@visible, @public, @protected, etc.), files are scanned and validated, Python modules are loaded/reloaded, and tool calls are dispatched into user code.

- DynamicServerManager.py - manages third-party MCP server configs under dynamic_servers/. It saves/loads JSON configs, starts stdio MCP servers, keeps sessions alive, and fetches their tool lists.

- atlantis.py - the dynamic function harness/runtime API injected into dynamic functions. This is the bridge that tool code uses for client_log, streaming, HTML/image/video responses, click/upload callbacks, request context, and persistent shared state. See the Dynamic Functions Documentation for the function-authoring side of this API.

- lobster.py - compatibility layer for the local Atlantis MCP client. It defines the readme/command/chat tools and translates those local calls into the cloud-backed command flow.

- state.py - central configuration and process-wide state. It sets up logging, defines FUNCTIONS_DIR and SERVERS_DIR, and stores base server constants like host/port and request timeout.

- utils.py - low-level helpers shared across the server and dynamic functions. It contains search-term parsing, JSON/log formatting, the global server-instance bridge, and client command/log plumbing used by atlantis.py.

- PIDManager.py - single-instance guard for the Python server process via PID files.

- ColoredFormatter.py - logging formatter and request-context filter used by state.py.

The runtime split is basically:

- server.py receives MCP traffic.

- server.py routes MCP tools/call through DynamicAdditionServer._handle_tools_call().

- _handle_tools_call() delegates Python tool execution to DynamicFunctionManager.py and proxied MCP tool execution to DynamicServerManager.py.

- Dynamic functions call back into the host through atlantis.py and utils.py.

For dynamic function authoring details, see Dynamic Functions Documentation. For wiring browser callbacks (button clicks, uploads) into Python functions, see Onclick Callbacks. For auth and trust boundaries, see Security Model.

Features

Dynamic Functions

Dynamic functions give users the ability to create and maintain custom functions-as-tools, which are kept in the dynamic_functions/ folder. Functions are loaded on start and automatically reloaded when modified.

For detailed information about creating and using dynamic functions, see the Dynamic Functions Documentation. For an example of wiring a UI button back into a Python callback, see Onclick Callbacks.
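To make the "reloaded when modified" idea concrete, here is a rough sketch of one common way to detect changed files: remember each file's modification time and compare on the next scan. This is an assumption about the general technique, not a description of how DynamicFunctionManager.py actually does it; the function name `changed_files` and the `seen` dictionary are invented for this example.

```python
from pathlib import Path


def changed_files(root: str, seen: dict) -> list:
    """Return .py files under root that are new or modified since the last call.

    `seen` maps each path to the modification time (in nanoseconds) recorded
    on the previous scan, and is updated in place. Hypothetical helper.
    """
    changed = []
    for path in sorted(Path(root).rglob("*.py")):
        mtime = path.stat().st_mtime_ns
        if seen.get(path) != mtime:
            seen[path] = mtime      # remember the new timestamp
            changed.append(path)    # caller would reload this module
    return changed
```

A caller would run this periodically over dynamic_functions/ and reload whatever comes back; the first scan reports every file, later scans only report edits.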

Dynamic MCP Servers

- gives users the ability to install and manage third-party MCP server tools; JSON config files are kept in the dynamic_servers/ folder

- each MCP server will need to be 'started' first to fetch the list of tools

- each server config follows the usual JSON structure that contains an 'mcpServers' element; for example, this installs an openweather MCP server:

{ "mcpServers": { "openweather": { "command": "uvx", "args": [ "--from", "atlantis-open-weather-mcp", "start-weather-server", "--api-key", "<your openweather api key>" ] } } }

The weather MCP service is just an existing one I ported to uvx. See here
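As a rough illustration of what such a config boils down to, the sketch below parses the JSON and turns each entry under 'mcpServers' into the command line that would be launched. The function name `server_commands` is hypothetical and this is not the code in DynamicServerManager.py; it only assumes the 'command' and 'args' keys shown in the example above.

```python
import json


def server_commands(config_text: str) -> dict:
    """Map each server name in an 'mcpServers' config to its argv list."""
    config = json.loads(config_text)
    servers = config.get("mcpServers")
    if not isinstance(servers, dict) or not servers:
        raise ValueError("config must contain a non-empty 'mcpServers' object")
    # 'args' is treated as optional here; that is an assumption of this sketch
    return {
        name: [spec["command"], *spec.get("args", [])]
        for name, spec in servers.items()
    }
```

For the openweather config above, this yields a single entry whose list starts with uvx followed by the arguments in order.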

Cloud

The cloud service at https://www.projectatlantis.ai provides a centralized hub for managing your remote servers and sharing tools across machines.

App Organization

Dynamic functions are organized into apps using folder structure. Simply place your .py files in subdirectories:

dynamic_functions/
├── Home/                        # App: "Home"
│   └── kitty.py
├── Accounting/                  # App: "Accounting"
│   ├── accounting.py
│   └── foo.py
└── FilmFromImage/               # App: "FilmFromImage"
    └── qwen_image_edit_local.py

The folder name IS the app name, so functions in the Home folder belong to the Home app.

Nested Apps (Subfolders)

Create nested app structures using subfolders:

dynamic_functions/
└── MyApp/
    └── SubModule/
        └── Feature/
            └── my_function.py

This creates the app name: MyApp/SubModule/Feature
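The folder-to-app mapping can be sketched in a few lines. This is only an illustration of the rule described here (the app name is the folder path below dynamic_functions/); the helper name `app_name_for` is made up, and the real scanning logic lives in the server.

```python
from pathlib import PurePosixPath


def app_name_for(file_path: str, root: str = "dynamic_functions") -> str:
    """Return the app name implied by a function file's folder path."""
    relative = PurePosixPath(file_path).relative_to(root)
    # Drop the file name; what remains is the (possibly nested) app path
    return "/".join(relative.parts[:-1])


print(app_name_for("dynamic_functions/MyApp/SubModule/Feature/my_function.py"))
print(app_name_for("dynamic_functions/Home/kitty.py"))
```

The first call prints MyApp/SubModule/Feature and the second prints Home.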

Best Practices:

- Keep it simple - one level of folders is usually enough

- Use descriptive folder names (e.g., Chat, Admin, Tools)

- Group related functions together in the same folder

- The folder structure keeps your code organized and clear

Tool Calling with Search Terms

When calling tools, you can use compound tool names to disambiguate functions. Only include as much of the path as needed to uniquely identify the function.

Format: remote_owner*remote_name*app*location*function

Key Principle: Use the simplest form that resolves uniquely

# If you have these functions:

# - dynamic_functions/Chat/send_message.py

# - dynamic_functions/Email/send_message.py

# - dynamic_functions/SMS/send_message.py

send_message ❌ Ambiguous! Which one?

**Chat**send_message ✅ Clear! The one in Chat

**Email**send_message ✅ Clear! The one in Email

Examples:

update_image → Simple call (only works if unique)

**MyApp**update_image → Specify app to disambiguate

**MyApp/SubModule**process_data → Nested app path

alice*prod*Admin**restart → Full routing: owner + remote + app + function

***office*print → Just location context

How it works:

- Fields: remote_owner*remote_name*app*location*function

- Separate fields with * (asterisk)

- Omit fields you don't need (use empty strings: **App**func)

- The app field supports slash notation for nested apps (MyApp/SubModule)

- The last field is always the function name

- No asterisks = treat entire name as function name
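The rules above can be sketched as a small parser. This is an illustration only, not the search-term parser in utils.py: the names `ToolRef` and `parse_tool_name` are invented here, and left-padding names that have fewer than five fields is an assumption (the docs only state that the last field is always the function name).

```python
from typing import NamedTuple


class ToolRef(NamedTuple):
    remote_owner: str
    remote_name: str
    app: str
    location: str
    function: str


def parse_tool_name(name: str) -> ToolRef:
    """Split a compound tool name into its five fields."""
    if "*" not in name:
        # No asterisks: the whole name is the function name
        return ToolRef("", "", "", "", name)
    parts = name.split("*")
    if len(parts) > 5:
        raise ValueError(f"too many fields in tool name: {name!r}")
    # Assumption: shorter names are padded on the left so the last
    # field stays the function name
    padded = [""] * (5 - len(parts)) + parts
    return ToolRef(*padded)


print(parse_tool_name("alice*prod*Admin**restart"))
print(parse_tool_name("***office*print"))
```

The first call fills owner, remote, app and function and leaves location empty; the second fills only location and function.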

When to use compound names:

- Name conflicts: Multiple apps have functions with the same name

- Remote targeting: Call functions on specific remotes from the cloud

- Location routing: Target functions at specific physical locations

- Multi-user setups: Specify owner and remote in shared environments

Best practice: Start simple (update_image) and add context only when needed to resolve ambiguity (**ImageTools**update_image).

Example:

# File: dynamic_functions/ImageTools/process.py

@visible
async def update_image(image_path: str):
    """Update an image."""
    return "updated"

# If this is the ONLY update_image:

update_image ✅ Works fine!

# If Chat app ALSO has update_image:

**ImageTools**update_image ✅ Now we need to specify the app
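The "simplest form that resolves uniquely" rule might look something like the sketch below. It is a toy model: the registry is just a list of (app, function) pairs, only the app and function fields are considered, and the `resolve` function and its error messages are invented for illustration rather than taken from the server.

```python
def resolve(name: str, registry: list) -> tuple:
    """Find the single (app, function) pair that a tool name refers to."""
    parts = name.split("*")
    function = parts[-1]                          # last field is the function
    app = parts[2] if len(parts) == 5 else ""     # third field is the app
    matches = [
        (a, f) for a, f in registry
        if f == function and (not app or a == app)
    ]
    if not matches:
        raise LookupError(f"no tool matches {name!r}")
    if len(matches) > 1:
        apps = ", ".join(a for a, _ in matches)
        raise LookupError(f"{name!r} is ambiguous; found in apps: {apps}")
    return matches[0]


registry = [
    ("ImageTools", "update_image"),
    ("Chat", "send_message"),
    ("Email", "send_message"),
]
print(resolve("update_image", registry))          # unique, so the bare name works
print(resolve("**Chat**send_message", registry))  # app needed to disambiguate
```

Calling `resolve("send_message", registry)` with this registry raises LookupError because two apps define that function.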

Bot Runtime

Bot content lives under python-server/dynamic_functions/Bot/Content/, and player-specific interaction history is stored per user in python-server/dynamic_functions/Data/players/{username}/interactions.json.

Key files

- python-server/dynamic_functions/Home/MULTIX.md - User-facing documentation for the Atlantis MCP tools (commands, search terms, tool prefixes, etc.). This is the file served by the readme MCP tool.

- python-server/dynamic_functions/Bot/Runtime/chat.py - Bot chat dispatch, system prompt assembly, and tool wiring.

- python-server/dynamic_functions/Data/main.py - Player folder data and bot interaction history helpers.

Troubleshooting

If MCP tools aren't working (e.g. returning Unknown tool errors), check the server log first. The Python server writes detailed logs to python-server/runServer.log — this file shows exactly what's happening with tool calls, cloud auth, and client connections. It can get large, so tail the last ~1000 lines:

tail -1000 python-server/runServer.log

Common issues visible in the log:

- ⚠️ Unexpected tool call from local client - the server received a tool call but didn't recognize it; check that your tools are registered

- ❌ Authentication failed - cloud credentials are wrong or the account doesn't exist; check your email/api-key

- 🏠 Local MCP tool call intercepted - confirms the server is receiving tool calls from the MCP client

- MCP handshake errors usually mean the client is pointed at the wrong port. The default local MCP port is 8000; make sure the server --port and client --port match.
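If the log is too noisy to read by eye, a short script can count how often each of these messages appears in the tail. This is a sketch: the marker strings are the ones listed above, but the function name `scan_log` is hypothetical and the exact log line format is not assumed beyond simple substring matching.

```python
from collections import Counter, deque

MARKERS = (
    "Unexpected tool call from local client",
    "Authentication failed",
    "Local MCP tool call intercepted",
)


def scan_log(lines, last: int = 1000) -> Counter:
    """Count marker occurrences in the last `last` lines of a log."""
    tail = deque(lines, maxlen=last)   # keeps only the final `last` lines
    counts = Counter()
    for line in tail:
        for marker in MARKERS:
            if marker in line:
                counts[marker] += 1
    return counts
```

Usage would be along the lines of opening python-server/runServer.log with `encoding="utf-8", errors="replace"` and passing the file object to `scan_log`, then printing the resulting counts.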

Visitor-related log lines include "Visitor:", "New conversation for", and "Injected time-gap message".

Our Greenland Terrain Server

The goal is to use this system as the main bot infrastructure (tools etc.) for our Greenland terrain server.
