agentic-store-mcp Server

Power up your AI agents with 31 production-ready tools. Features local-first Python analysis, real-time SearXNG search, and a secure local proxy to sanitize prompts. Built for developers who value performance and privacy. Install once, use everywhere.

Documentation

⚡ AgenticStore MCP Server: LLM Prompt Firewall, Token Optimization & AI Security Toolkit

Open-Source Model Context Protocol (MCP) Server for Data Privacy, Prompt Recording, Audit Logs, and 31 Agent Tools for Claude Code, Cursor, and Windsurf.

Official Documentation • 🚀 Quick Start • 🛡️ Prompt Firewall • 🗂️ Full Tool Directory • 🌐 Web Search • 🔌 Client Setup • 💻 Claude Code • 🖥️ GUI Webapp

🔒 Why You Need This: Enterprise-Grade AI Security Meets Autonomous Agents

Giving AI assistants like Claude, Cursor, and Windsurf access to your codebase and the web is a superpower. But passing sensitive enterprise data to remote LLMs is a massive security risk. Furthermore, hitting token limits quickly degrades LLM context windows and increases costs.

The Problem: You want the massive productivity boost of agentic workflows, but you cannot compromise on Data Loss Prevention (DLP), compliance, leak prevention, or token bloat.

The Solution: AgenticStore MCP Server solves the entire equation natively:

- 🛡️ The LLM Prompt Firewall: A secure local proxy that intercepts, scans, and sanitizes your prompts before they leave your machine. It flags leaked secrets, PII, and API keys, using local models (like Ollama) to sanitize data and generate strict audit traces for all AI usage.

- 🧰 The MCP Toolkit (31 Tools): A production-ready toolkit. Equip your AI with code analyzers, OSV-based CVE vulnerability scanners, context pruners, token optimizers, persistent semantic memory, and self-hosted SearXNG web search.

Zero subscriptions. Zero vendor lock-in. Configure your MCP tools manually or effortlessly through a beautiful local GUI.

🎥 Prompt Firewall Demo

Take a look at the Prompt Firewall Demo video here.

🎥 Watch the GUI Demo in Action (MCP Tools)

Take a look at the AgenticStore MCP GUI Demo video here.

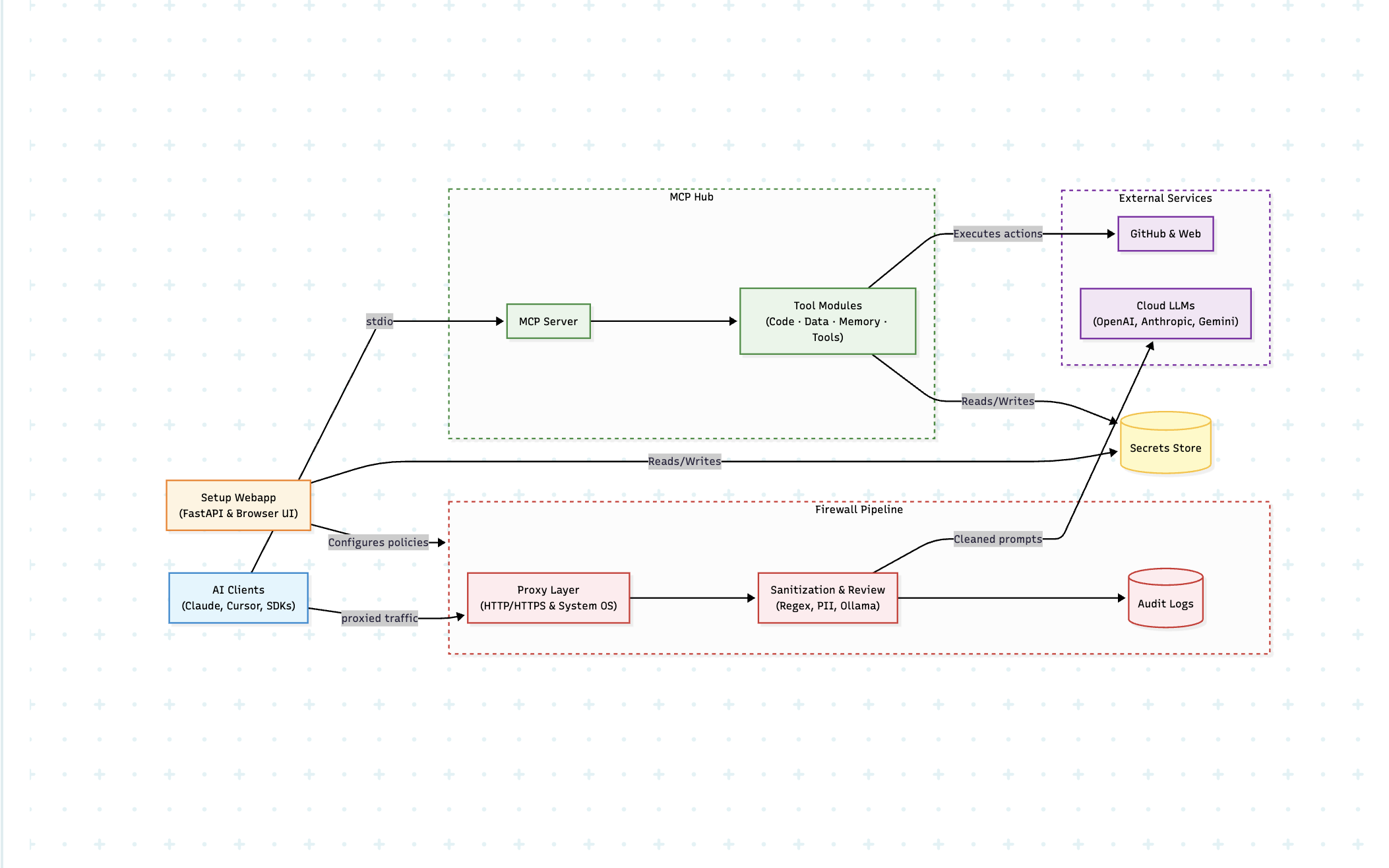

🏗️ How It Works

🔥 Why Choose AgenticStore MCP?

| Feature | AgenticStore MCP Server | Standard MCP Servers |
|---|---|---|
| AI Security & Prompt Firewall | 🛡️ Yes (Proxy & Rule-based DLP) | ❌ No |
| Audit Traces & Logs | 📝 Yes (Prompt recording & compliance) | ❌ No |
| Local LLM Prompt Sanitization | 🦙 Yes (Ollama integration) | ❌ No |
| Persistent Agent Memory | 🧠 Yes (survives restarts & sessions) | ❌ No |
| Token Optimization & Pruning | ✂️ Yes (LLM token compression) | ❌ No |
| Agentic Web Search | 🌐 Self-hosted SearXNG | ❌ Usually No |
| Capabilities | 🛠️ 31 specialized tools | ⛏️ 1 to 5 basic tools |
| Configuration | 🖥️ Web GUI Dashboard OR ⚙️ Manual | ⚙️ Manual JSON setup |
| Privacy | 🔒 100% Local Execution | 🔒 Varies |

- 🛡️ LLM Prompt Firewall: Intercept, sanitize, and perform prompt recording for all data leaving your system, ensuring robust AI security.

- 🔒 100% Privacy-First: Everything runs locally. Generate reliable audit traces for AI usage while your code and data never leave your machine unaudited.

- ✂️ LLM Token Optimization: Radically reduce your token burn with code structural compression (token_optimizer) and relevance-based context trimming (context_pruner).

- 💸 Truly Free: No accounts, no paywalls, no subscriptions.

- 🧠 Persistent Agent Memory: Let your AI remember facts and contexts across sessions seamlessly.

- ⚡ Plug & Play: Installs instantly via uvx or pip. MCP configuration supports both manual JSON and GUI workflows.

📋 Table of Contents

- 🛡️ LLM Prompt Firewall & Sanitization

- 🧰 What's Inside the Toolkit

- 🚀 Quick Start Guide (Fastest Way to Install)

- 🔌 Connect to Your AI Client

- 🗂 Tool Directory — All 31 Tools

- 💻 Claude Code CLI Integration

- 🔎 Enabling Web Search

- 🎛 Overriding Configs & Advanced Usage

🛡️ LLM Prompt Firewall & Sanitization

The Prompt Firewall gives you complete control over what natural language data and code leaves your computer when using cloud-based AI coding assistants, delivering enterprise-grade AI security.

[!NOTE] The Firewall feature is currently tested and stable on macOS. Support for Linux and Windows is coming soon!

Key Features:

- Proxy Interception: Set up a local proxy to monitor all LLM traffic.

- Audit Traces for AI Usage: Automatically generate prompt recording logs. Get clear warnings at each prompt level showing exactly what was changed or redacted.

- 1-Click UI Setup: Install certificate -> start proxy. The magic starts immediately. (Note: Firewall setup is only made available on the UI to ensure smooth installation and operation).

- Local LLM Integration (Optional): Connect your local Ollama instance and download open-source models to run pre-flight prompt sanitization entirely locally, scanning your code to make it safer before it is sent to a remote API.

- Rule-Based Sanitization: Easily define regex or grammar rules to block PII, AWS keys, database strings, or proprietary logic.
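To make the rule-based idea concrete, here is a minimal Python sketch of regex-driven redaction. It is not AgenticStore's implementation: the rule names, the patterns, and the `sanitize` function are all hypothetical, chosen only to show how a prompt could be scanned and how an audit list of what was redacted could be produced.

```python
import re

# Hypothetical rule set: names and patterns are illustrative only.
RULES = {
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "DB_URL": re.compile(r"\b(?:postgres(?:ql)?|mysql|mongodb)://\S+"),
}


def sanitize(prompt: str) -> tuple[str, list[str]]:
    """Redact every rule match and return the cleaned prompt plus an audit list."""
    findings = []
    for name, pattern in RULES.items():
        # subn returns the rewritten text and the number of substitutions made.
        prompt, count = pattern.subn(f"[REDACTED:{name}]", prompt)
        if count:
            findings.append(f"{name} x{count}")
    return prompt, findings


clean, audit = sanitize("key=AKIAABCDEFGHIJKLMNOP mail bob@example.com")
print(clean)  # key=[REDACTED:AWS_ACCESS_KEY] mail [REDACTED:EMAIL]
print(audit)  # ['AWS_ACCESS_KEY x1', 'EMAIL x1']
```

Returning the findings alongside the cleaned text mirrors the per-prompt warnings described above: the caller can log exactly which rule fired and how often.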

To enable the firewall and begin collecting audit traces for AI usage, start the GUI Webapp and navigate to the Firewall tab to install the certificate and start the proxy. For deeper technical architecture, read the officially provided AgenticStore Prompt Firewall Documentation or visit the main site at agenticstore.dev.

🧰 What's Inside the Toolkit (31 MCP Tools)

Equip your AI client with these modules containing 31 specific MCP tools, categorized strategically for maximum productivity:

- 🛡️ Local Only: 22 tools executing privately without any external API dependencies.

- ☁️ API Required: 7 tools strictly connecting to remote providers (like the GitHub API or Web connectors).

- 📝 Write Access: 6 tools capable of safely modifying your repos or local files.

- 👓 Read Only: 25 tools limited purely to context ingestion ensuring safety by default.

| Module | Purpose | Key Capabilities | Tools |
|---|---|---|---|
| 💻 Code (Codebase, GitHub, Security) | Codebase mastery & safety | Static analysis, GitHub PRs, OSV CVE scans, CodeQL scanning | 8 |
| 🌐 Data (Search & Crawl) | Internet access for Agents | Private web search (SearXNG) and deep web crawling | 2 |
| 🧠 Memory (Productivity & Storage) | Context manipulation & persistence | Save/read facts, optimize LLM tokens, context pruning | 16 |
| 🛠️ Tools & System Config | Configuration & OS monitoring | Check running host processes, tail system logs, discovery | 5 |

🚀 Quick Start Guide (Fastest Way to Install)

Pick the setup that best fits your workflow. MCP supports both manual configuration and GUI-based management setup. Don't know which to pick? Start with V0.

V0 — Python / uvx (Fastest start, no Docker needed)

1️⃣ Install via PyPI

pip install agentic-store-mcp --upgrade

(Or use uvx agentic-store-mcp if you have Astral's toolchain).

2️⃣ Run the MCP server

agentic-store-mcp

3️⃣ (Optional) Check your installation

agentic-store-mcp --version

4️⃣ Configure your AI Client

See the Connect to Your AI Client section to link it manually, or use the UI!

💡 Pro-Tip (Web Search): Want web search? Check out our Copy-Paste Config below. See Web Search Setup.
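If you want to script the installation check from step 3, the following Python sketch wraps the `agentic-store-mcp --version` call. The helper name `installed_version` is an assumption made for illustration; only the command and the `--version` flag come from the steps above.

```python
import shutil
import subprocess
from typing import Optional


def installed_version(command: str = "agentic-store-mcp") -> Optional[str]:
    """Return the output of `<command> --version`, or None if it is not on PATH."""
    if shutil.which(command) is None:
        return None
    result = subprocess.run(
        [command, "--version"], capture_output=True, text=True, timeout=30
    )
    # Some CLIs print the version to stderr, so fall back to it if stdout is empty.
    return (result.stdout or result.stderr).strip()


print(installed_version() or "agentic-store-mcp not found on PATH")
```

Checking `shutil.which` first avoids a `FileNotFoundError` when the package has not been installed yet.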

V1 — From GitHub Source (Latest Features)

1️⃣ Install directly from the repository

pip install git+https://github.com/agenticstore/agentic-store-mcp.git

(Or using uv: uvx --from git+https://github.com/agenticstore/agentic-store-mcp.git agentic-store-mcp)

2️⃣ Run the MCP server

agentic-store-mcp

3️⃣ Configure your AI Client

See the Connect to Your AI Client section to link it!

V2 — MCP Hub UI (Manage everything visually)

Forget manual JSON editing! Use our local web UI to:

- 🛡️ Firewall: Setup certificates and monitor all intercepted LLM prompts and audit logs for comprehensive prompt recording.

- 🔑 Connectors: Enter remote API keys securely (stored in OS keyring).

- 🛠️ Tools: Toggle which of the 31 tools to expose to the AI.

- 💻 Clients: Auto-generate configuration for Claude, Cursor, and Windsurf.

- 🧠 Memory: Manage persistent agent states, checkpoints, token optimization, and logs.

Start the Hub via Python:

# Ensure it is installed via pip

pip install agentic-store-mcp --upgrade

# Launch the web UI automatically bundled with the core package

agentic-store-webapp

Access it at: http://localhost:8765

🔌 Connect to Your AI Client

AgenticStore MCP supports both manual configuration and management via the GUI. If you prefer the manual route, add the configuration snippet to your client's config file, then restart the client after saving. For an in-depth guide on linking AI agents, visit the Client Connection Documentation on agenticstore.dev.

| Client | Config File Path |
|---|---|
| Claude Desktop (Mac) | ~/Library/Application Support/Claude/claude_desktop_config.json |
| Claude Desktop (Win) | %APPDATA%\Claude\claude_desktop_config.json |
| Cursor | ~/.cursor/mcp.json |
| Windsurf | ~/.codeium/windsurf/mcp_config.json |
| VS Code | Appends to your VS Code settings.json under MCP extension config |

Standard Copy-Paste Config

Basic Setup:

{
  "mcpServers": {
    "agentic-store-mcp": {
      "command": "agentic-store-mcp",
      "args": []
    }
  }
}

With Web Search (SearXNG) enabled:

{
  "mcpServers": {
    "agentic-store-mcp": {
      "command": "agentic-store-mcp",
      "args": [],
      "env": {
        "SEARXNG_URL": "http://localhost:8080"
      }
    }
  }
}
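If your client config already lists other MCP servers, pasting the snippet over the file would remove them. The Python sketch below merges the entry instead. The `add_server` helper is hypothetical and the demo writes to a temporary file; point it at one of the config paths from the table above only after backing that file up.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional

SERVER_ENTRY = {"command": "agentic-store-mcp", "args": []}


def add_server(config_path: Path, searxng_url: Optional[str] = None) -> dict:
    """Merge the agentic-store-mcp entry into a client config, keeping other servers."""
    config = json.loads(config_path.read_text()) if config_path.exists() else {}
    entry = dict(SERVER_ENTRY)
    if searxng_url:
        entry["env"] = {"SEARXNG_URL": searxng_url}
    # setdefault creates "mcpServers" only when the key is missing.
    config.setdefault("mcpServers", {})["agentic-store-mcp"] = entry
    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n")
    return config


# Demo against a throwaway file that already contains another server.
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp) / "mcp.json"
    demo.write_text('{"mcpServers": {"other": {"command": "other-server"}}}')
    merged = add_server(demo, "http://localhost:8080")
    print(json.dumps(merged, indent=2))
```

Remember to restart the client after the file changes.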

🗂 Tool Directory — All 31 Tools

📝 Code Module (8 tools)

Codebase Analysis

Analyze, search, and navigate your codebase flawlessly.

| Tool | Capability |
|---|---|
| analyze_commits | Analyze git commit history (authors, frequency, patterns). |
| get_file | Fetch and read file contents straight from GitHub repositories. |
| python_lint_checker | Run static analysis on Python files (bugs, unused imports, style issues). |
| search_code | Fast full-text code pattern search across local files and GitHub. |

Remote Integration

(Requires a GitHub Personal Access Token. Set via GITHUB_TOKEN or MCP Hub).

| Tool | Capability |
|---|---|
| create_pr | Automatically open new internal Pull Requests on GitHub. |
| get_repo_info | Fetch GitHub repo metadata (stars, forks, contributors). |
| manage_issue | Create, update, comment on, and close GitHub issues. |

Security & Auditing

Agent-driven DevSecOps & Supply Chain Verification.

| Tool | Capability |
|---|---|
| code_scanning_alerts | Retrieve CodeQL and Semgrep security findings from GitHub. |
| dependabot_alerts | Fetch automated dependency vulnerability alerts via Dependabot integration. |
| dependency_audit | Scan packages (requirements.txt, package.json, go.mod) dynamically against the OSV CVE database. |
| repo_scanner | Scan for leaked secrets (API keys), PII leaks, and enforce .gitignore compliance. |

🌐 Data Module (2 tools)

| Tool | Capability |
|---|---|
| agentic_web_crawl | Extract clean markdown text, headings, and SEO metadata signals from any URL. |
| agentic_web_search | Conduct live semantic web searches safely via self-hosted SearXNG. |

🧠 Memory Module (16 tools)

Persistent memory and token reduction let LLM agents hand off work safely across large repositories and long chat sessions.

Productivity & Token Optimization

| Tool | Capability |
|---|---|
| token_optimizer | NEW: Compress code/text before sending it to the LLM. Supports three modes (compress, summarize, both) across languages (Python, JS/TS, Go, Rust, Java, C/C++, Shell) by stripping non-functional strings and surfacing structural outlines. Returns token-savings metrics automatically. |
| context_pruner | NEW: Recommends which files or data to drop from an oversized context by scoring each item on keyword overlap with your active task description, so irrelevant items do not consume context. |
| restore_session | Load your entire historical workspace context back from a checkpoint. |
| spinup_memory | Initialize a new project memory directory. |
| update_change_log | Append structured release notes to CHANGELOG.md. |
| update_learnings | Log technical discoveries into a persistent, searchable markdown repository. |
| update_milestones | Track milestone progress as development scales. |
| update_plan | Edit, append to, or overhaul your central architectural plan.md. |
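The keyword-overlap scoring described for context_pruner can be illustrated with a short Python sketch. This is an assumption about the general technique, not the tool's actual code: the `tokens` and `rank_by_overlap` helpers, the three-character minimum, and the sample files are all made up for the example.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercased word tokens of at least three characters."""
    return {w for w in re.findall(r"[a-z0-9_]+", text.lower()) if len(w) >= 3}


def rank_by_overlap(task: str, items: dict[str, str]) -> list[tuple[str, float]]:
    """Score each item by the fraction of task keywords it contains, highest first."""
    task_words = tokens(task)
    scores = []
    for name, content in items.items():
        overlap = len(task_words & tokens(content))
        scores.append((name, overlap / len(task_words) if task_words else 0.0))
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


files = {
    "auth.py": "def login(user, password): check password hash and create session",
    "README.md": "Project overview and install steps",
    "billing.py": "def charge(card): create invoice",
}
ranking = rank_by_overlap("fix login password bug", files)
print(ranking)  # [('auth.py', 0.5), ('README.md', 0.0), ('billing.py', 0.0)]
```

Items at the bottom of the ranking are the candidates to drop. A real pruner would likely weight rarer keywords more heavily, which this sketch does not attempt.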

Storage Primitives

| Tool | Capability |
|---|---|
| memory_checkpoint | Save a full snapshot of conversational state, decisions, and immediate plans. |
| memory_log | Append timestamped logs of session activity in real time. |
| memory_read | Fetch structured facts efficiently. |
| memory_restore | Read and restore state configurations from stored checkpoints. |
| memory_search | Full-text search across stored memory. |
| memory_write | Commit persistent JSON facts that outlive a single LLM chat window. |
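As a rough mental model for the write, read, and search primitives, consider a JSON file that outlives the process that wrote it. The `FactStore` class below is purely illustrative: its name, methods, and on-disk layout are assumptions, not the storage format AgenticStore uses.

```python
import json
import tempfile
from pathlib import Path


class FactStore:
    """Tiny JSON-file-backed key/value store (illustrative, not the real tool)."""

    def __init__(self, path: Path):
        self.path = path

    def _load(self) -> dict:
        return json.loads(self.path.read_text()) if self.path.exists() else {}

    def write(self, key: str, value) -> None:
        facts = self._load()
        facts[key] = value
        self.path.write_text(json.dumps(facts, indent=2))

    def read(self, key: str, default=None):
        return self._load().get(key, default)

    def search(self, text: str) -> list[str]:
        """Return keys whose name or serialized value contains the text."""
        needle = text.lower()
        return [k for k, v in self._load().items()
                if needle in k.lower() or needle in json.dumps(v).lower()]


store_path = Path(tempfile.mkdtemp()) / "facts.json"
FactStore(store_path).write("db", {"engine": "postgres", "port": 5432})
# A fresh instance stands in for a new session reading the same file.
print(FactStore(store_path).read("db"))          # {'engine': 'postgres', 'port': 5432}
print(FactStore(store_path).search("postgres"))  # ['db']
```

Because every call reloads the file, a second session sees whatever the first one wrote, which is the property the table above calls persistence.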

🛠️ Tools Module (5 tools)

| Tool | Capability |
|---|---|
| configure | Override runtime configuration and API connectors dynamically. |
| list_processes | NEW: Check whether specific services are running, using pgrep and lsof, across 11 well-known services (e.g. Docker, Redis, Postgres, MongoDB, Node, Celery). Returns their PIDs. |
| tail_system_logs | NEW: Efficient log tailing that seeks from the end of the file, so gigabyte log files do not overflow the context window. Filters by criteria such as 'error' and 'exception' and caps output at 1000 lines. |
| tool_search | Retrieve a detailed directory of every active MCP tool. |
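The seek-from-end approach mentioned for tail_system_logs can be sketched in Python as follows. This is a generic illustration of the technique under stated assumptions, not the tool's source: the `tail_filtered` function, its parameters, and the chunk size are hypothetical, while the keyword filter and the 1000-line cap follow the description above.

```python
import os
import tempfile


def tail_filtered(path, keywords=("error", "exception"), max_lines=1000, chunk=64 * 1024):
    """Read backwards from the end of the file, returning up to max_lines matching lines."""
    matches = []
    with open(path, "rb") as fh:
        fh.seek(0, os.SEEK_END)
        position = fh.tell()
        leftover = b""
        while position > 0 and len(matches) < max_lines:
            step = min(chunk, position)
            position -= step
            fh.seek(position)
            block = fh.read(step) + leftover
            lines = block.split(b"\n")
            # The first piece may be a partial line unless we reached the file start.
            leftover = lines.pop(0) if position > 0 else b""
            for raw in reversed(lines):
                text = raw.decode("utf-8", errors="replace")
                if any(k in text.lower() for k in keywords):
                    matches.append(text)
                    if len(matches) >= max_lines:
                        break
    # Matches were collected newest first; return them in file order.
    return list(reversed(matches))


with tempfile.NamedTemporaryFile("w", suffix=".log", delete=False) as tmp:
    tmp.write("INFO start\nERROR disk full\nINFO retry\nValueError exception raised\n")
result = tail_filtered(tmp.name, chunk=16)  # tiny chunk to exercise the chunking
print(result)  # ['ERROR disk full', 'ValueError exception raised']
os.remove(tmp.name)
```

Only the chunks needed to collect the requested matches are read, so memory use depends on the chunk size and line cap rather than on the size of the log file.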

💻 Claude Code CLI Integration

AgenticStore MCP works with Claude Code for terminal workflows, giving developers access to all 31 tools directly from the command line.

Safe CLI Execution Modes

The installer registers the server in your ~/.claude/settings.json automatically. It supports two modes: a default mode that connects directly to Anthropic, and a firewall mode that first verifies the intercepting proxy and then routes traffic through it.

Default Mode (direct API calls to the standard Anthropic models, without firewall tracking):

agentic-store-mcp --install-claude

- Registers the server in the user's local ~/.claude/settings.json.

- Runs launchctl unsetenv to drop any stale ANTHROPIC_BASE_URL routing, so Claude Code connects directly to the standard Anthropic API.

- Removes proxy-forcing entries from ~/.zshrc and ~/.bash_profile, so new terminals always start clean.

Firewall Mode (checks that the proxy is running, then routes traffic through the Prompt Firewall for prompt recording and protection of enterprise secrets):

agentic-store-mcp --install-claude --firewall-mode

- First verifies that the AgenticStore firewall proxy is listening on port 8766 before touching any configuration, so a missing proxy cannot break your setup.

- Injects launchctl setenv routing instructions and writes nc-guarded shell profile blocks that set up Node.js TLS and ANTHROPIC_BASE_URL interception for Claude.

(Note: --firewall-mode cannot be used on its own. It must be passed together with --install-claude.)
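You can run a similar pre-flight check yourself before switching modes. The Python sketch below only tests whether something accepts TCP connections on port 8766. The `proxy_is_listening` helper is hypothetical, and a successful connection does not prove that the listener is the AgenticStore proxy.

```python
import socket


def proxy_is_listening(host: str = "127.0.0.1", port: int = 8766, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and unreachable hosts.
        return False


print("proxy up" if proxy_is_listening() else "proxy not reachable on port 8766")
```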

Manual Configuration

Run claude mcp add agentic-store-mcp "agentic-store-mcp".

🔎 Enabling Web Search

To give your agent internet access, agentic_web_search uses a private SearXNG instance.

If you'd like to use a remote instance or host your own container, set SEARXNG_URL to its URL.

Pass the environment variable to your AI Client:

{
  "mcpServers": {
    "agentic-store-mcp": {
      "command": "agentic-store-mcp",
      "args": [],
      "env": {
        "SEARXNG_URL": "http://localhost:8080"
      }
    }
  }
}
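To confirm that the URL you set actually answers searches, you can query the instance directly. The sketch below is not how agentic_web_search is implemented. It assumes your SearXNG instance has the JSON output format enabled in its settings, and the helper names `build_search_url` and `search` are made up for illustration.

```python
import json
import os
from typing import Optional
from urllib.parse import urlencode
from urllib.request import urlopen


def build_search_url(query: str, base_url: Optional[str] = None) -> str:
    """Build a SearXNG search URL from SEARXNG_URL (or an explicit base URL)."""
    base = (base_url or os.environ.get("SEARXNG_URL", "http://localhost:8080")).rstrip("/")
    return f"{base}/search?{urlencode({'q': query, 'format': 'json'})}"


def search(query: str, limit: int = 5) -> list:
    """Fetch results from the instance; requires the JSON format to be enabled."""
    with urlopen(build_search_url(query), timeout=10) as response:
        return json.load(response).get("results", [])[:limit]


print(build_search_url("model context protocol", "http://localhost:8080"))
# http://localhost:8080/search?q=model+context+protocol&format=json
```

Calling `search("model context protocol")` performs the request. If the instance returns an HTTP error, check whether JSON output is allowed in its configuration.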

🎛 Overriding Configs & Advanced Usage

You can override configuration as part of your MCP setup. Because MCP supports both manual and GUI setup, you can use environment variables to control exactly which tools get loaded when integrating without the web GUI.

# Debug: List what would be loaded and exit

agentic-store-mcp --list

Note: The LLM Prompt Firewall is configured exclusively via the UI, which ensures smooth setup, robust proxy interception, and seamless prompt recording out of the box.

🛠️ Troubleshooting

Internet Disruption After Proxy Use

If the LLM Prompt Firewall proxy server is terminated abruptly, residual system proxy settings can disrupt internet access on your machine.

If you lose internet connection after a crash, run this command in your terminal to restore your proxy to default:

networksetup -setsecurewebproxystate "Wi-Fi" off

🤝 Contributing & Community

Contributions are what make the open-source community such an amazing place to learn, inspire, and create. Any contributions you make are greatly appreciated.

If you'd like to contribute code or improvements, please fork the repository and create a Pull Request.

⭐ If this toolkit saved you 10 hours of configuration, please give us a star to help others find it!

📜 License & Support

This project is licensed under the MIT License — free to use, modify, and distribute. See LICENSE for details.

⭐ Manage Everything Easier via the Webapp: agentic-store-webapp

Built with ❤️ by AgenticStore.dev — Open-source AI tooling for everyone.

🏷️ Core Technologies & Ecosystem

AgenticStore is built for the Model Context Protocol (MCP Server) ecosystem to provide robust LLM Security, a Prompt Firewall, and proactive AI Data Privacy. It supports comprehensive Data Loss Prevention (DLP) through Prompt Sanitization, Prompt Recording, and Audit Traces for AI Usage. Designed for Autonomous Agents and AI Coding Assistants like Claude Code MCP Integration, Cursor IDE MCP, and Windsurf.

We tackle common scaling problems for modern LLMs with LLM Token Compression, context window offloading via Context Pruning, structured code processing via Token Optimization, and Persistent Agent Memory. These are combined with oversight features spanning AI DevSecOps, OSV CVE Dependency Scans, Static Code Analysis, local OS Process Management (via list_processes), Log File Tailing, and private Agentic Web Search via SearXNG. Everything runs locally, with Ollama Integration to support AI auditing requirements.