Locust MCP Server

An MCP server for running Locust load tests. Configure test parameters like host, users, and spawn rate via environment variables.

🚀 ⚡️ locust-mcp-server

A Model Context Protocol (MCP) server implementation for running Locust load tests. It lets MCP clients, such as AI-powered development environments, start Locust load tests and read their output.

✨ Features

- Simple integration with Model Context Protocol framework

- Support for headless and UI modes

- Configurable test parameters (users, spawn rate, runtime)

- Easy-to-use API for running Locust load tests

- Real-time test execution output

- HTTP/HTTPS protocol support out of the box

- Custom task scenarios support

🔧 Prerequisites

Before you begin, ensure you have the following installed:

- Python 3.13 or higher

- uv package manager (Installation guide)

📦 Installation

- Clone the repository:

git clone https://github.com/qainsights/locust-mcp-server.git

- Install the required dependencies:

uv pip install -r requirements.txt

- Set up environment variables (optional):

Create a .env file in the project root:

LOCUST_HOST=http://localhost:8089 # Default host for your tests

LOCUST_USERS=3 # Default number of users

LOCUST_SPAWN_RATE=1 # Default user spawn rate

LOCUST_RUN_TIME=10s # Default test duration
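The variables above act as defaults when a test is started without explicit values. This README does not show how the server reads them, so the following is only a minimal sketch, assuming the variables are already present in the process environment (for example exported in the shell, or loaded from `.env` by a tool such as python-dotenv). The `load_defaults` helper is a hypothetical name, and its fallback values simply mirror the `.env` example.

```python
import os


def load_defaults(env=None):
    """Return default Locust settings, preferring environment variables.

    Hypothetical helper (not part of the server's documented API).
    Fallbacks mirror the values in the .env example above.
    """
    env = os.environ if env is None else env
    return {
        "host": env.get("LOCUST_HOST", "http://localhost:8089"),
        "users": int(env.get("LOCUST_USERS", "3")),
        "spawn_rate": int(env.get("LOCUST_SPAWN_RATE", "1")),
        "run_time": env.get("LOCUST_RUN_TIME", "10s"),
    }


if __name__ == "__main__":
    print(load_defaults())
```

Passing a plain dictionary as `env` makes the lookup easy to try without touching the real environment.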

🚀 Getting Started

- Create a Locust test script (e.g., hello.py):

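import time

from locust import HttpUser, task, between


class QuickstartUser(HttpUser):
    # Each simulated user waits 1 to 5 seconds between tasks.
    wait_time = between(1, 5)

    @task
    def hello_world(self):
        self.client.get("/hello")
        self.client.get("/world")

    @task(3)  # picked three times as often as hello_world
    def view_items(self):
        for item_id in range(10):
            # Group all item requests under a single "/item" stats entry.
            self.client.get(f"/item?id={item_id}", name="/item")
            time.sleep(1)

    def on_start(self):
        # Runs once per simulated user before any task is scheduled.
        self.client.post("/login", json={"username": "foo", "password": "bar"})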

- Configure the MCP server in your MCP client (Claude Desktop, Cursor, Windsurf, and others) with the configuration below, replacing the uv and repository paths with the ones on your machine:

{
  "mcpServers": {
    "locust": {
      "command": "/Users/naveenkumar/.local/bin/uv",
      "args": [
        "--directory",
        "/Users/naveenkumar/Gits/locust-mcp-server",
        "run",
        "locust_server.py"
      ]
    }
  }
}

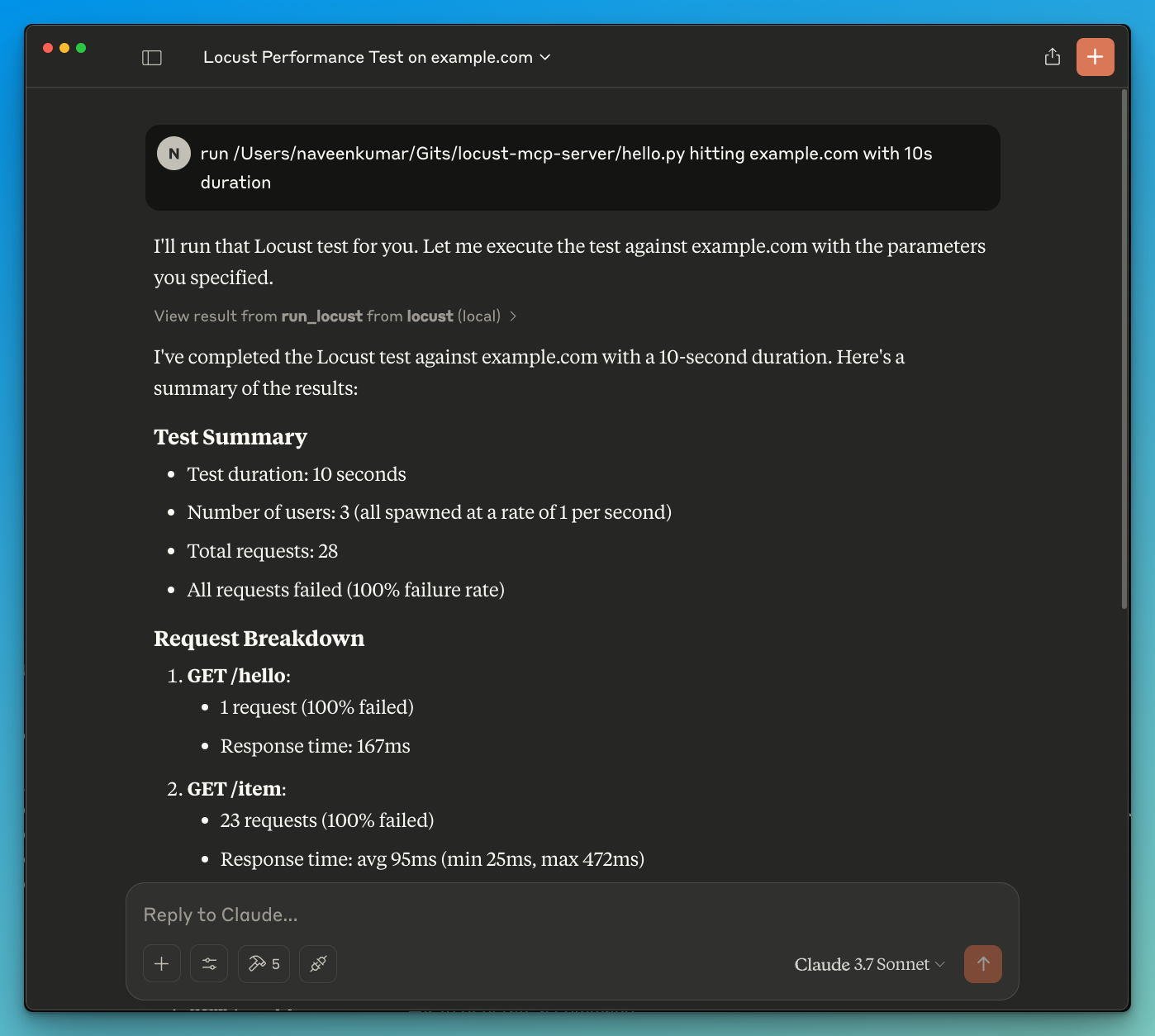

- Now ask the LLM to run the test, e.g., "run locust test for hello.py". The Locust MCP server will use the following tool to start the test:

run_locust: Run a test with configurable options for headless mode, host, runtime, users, and spawn rate
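The client configuration in step 2 contains paths that are specific to one machine. As a convenience, the entry can be generated for a local checkout. This is a minimal sketch: `build_mcp_config` is a hypothetical helper, and it assumes the server entry point is `locust_server.py` in the repository root, as in the configuration shown in step 2.

```python
import json
import shutil
from pathlib import Path


def build_mcp_config(repo_dir, uv_path=None):
    """Build the "mcpServers" entry for a local checkout.

    Hypothetical helper: repo_dir is wherever you cloned locust-mcp-server.
    """
    # Prefer an explicit path, then whatever "uv" resolves to on PATH,
    # and finally the bare command name.
    uv = uv_path or shutil.which("uv") or "uv"
    return {
        "mcpServers": {
            "locust": {
                "command": uv,
                "args": ["--directory", str(Path(repo_dir)), "run", "locust_server.py"],
            }
        }
    }


if __name__ == "__main__":
    # Run from inside the cloned repository and paste the output
    # into your MCP client's configuration file.
    print(json.dumps(build_mcp_config(Path.cwd()), indent=2))
```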

📝 API Reference

Run Locust Test

run_locust(
    test_file: str,
    headless: bool = True,
    host: str = "http://localhost:8089",
    runtime: str = "10s",
    users: int = 3,
    spawn_rate: int = 1
)

Parameters:

- test_file: Path to your Locust test script

- headless: Run in headless mode (True) or with UI (False)

- host: Target host to load test

- runtime: Test duration (e.g., "30s", "1m", "5m")

- users: Number of concurrent users to simulate

- spawn_rate: Rate at which users are spawned
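To make the parameters concrete, here is one way they could map onto Locust's command-line options (`-f`, `--host`, `--users`, `--spawn-rate`, `--run-time`, `--headless`). This is a sketch under assumptions, not the server's actual implementation, which this README does not show. `build_locust_command` is a hypothetical name, and the sketch chooses to pass the load settings only in headless mode, leaving them to the Locust web UI otherwise.

```python
import shlex


def build_locust_command(test_file, headless=True, host="http://localhost:8089",
                         runtime="10s", users=3, spawn_rate=1):
    """Translate run_locust-style arguments into a locust argument list.

    Illustrative only: the real server may build its command differently.
    """
    cmd = ["locust", "-f", test_file, "--host", host]
    if headless:
        # Without the web UI, the load shape must be given up front.
        cmd += [
            "--headless",
            "--users", str(users),
            "--spawn-rate", str(spawn_rate),
            "--run-time", runtime,
        ]
    return cmd


if __name__ == "__main__":
    # Print the command instead of running it; pass the list to
    # subprocess.run() to actually start a test.
    print(shlex.join(build_locust_command("hello.py", users=10, runtime="30s")))
```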

✨ Use Cases

- LLM-powered analysis of test results

- Debugging load test failures with the help of an LLM

🤝 Contributing

Contributions are welcome! Please feel free to submit a Pull Request.

📄 License

This project is licensed under the MIT License - see the LICENSE file for details.
