Open Computer Use

Give any LLM its own computer — Docker sandboxes with bash, browser, docs, and sub-agents

MCP server that gives any LLM its own computer — managed Docker workspaces with live browser, terminal, code execution, document skills, and autonomous sub-agents. Self-hosted, open-source, pluggable into any model.

What is this?

An MCP server that gives any LLM a fully-equipped Ubuntu sandbox with isolated Docker containers. Think of it as your AI's computer — it can do everything a developer can do:

- Execute code — bash, Python, Node.js, Java in isolated containers

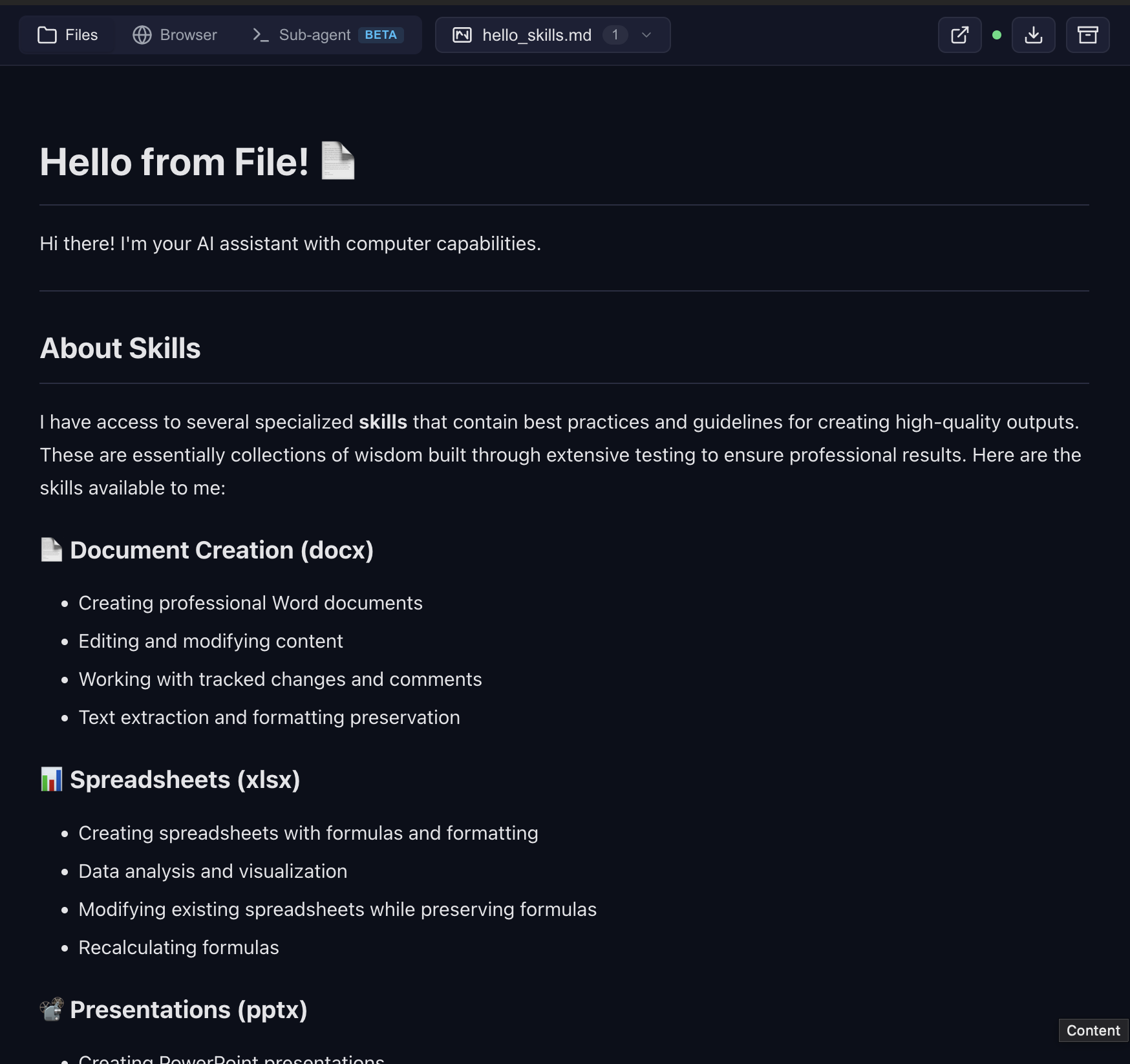

- Create documents — Word, Excel, PowerPoint, PDF with professional styling via skills

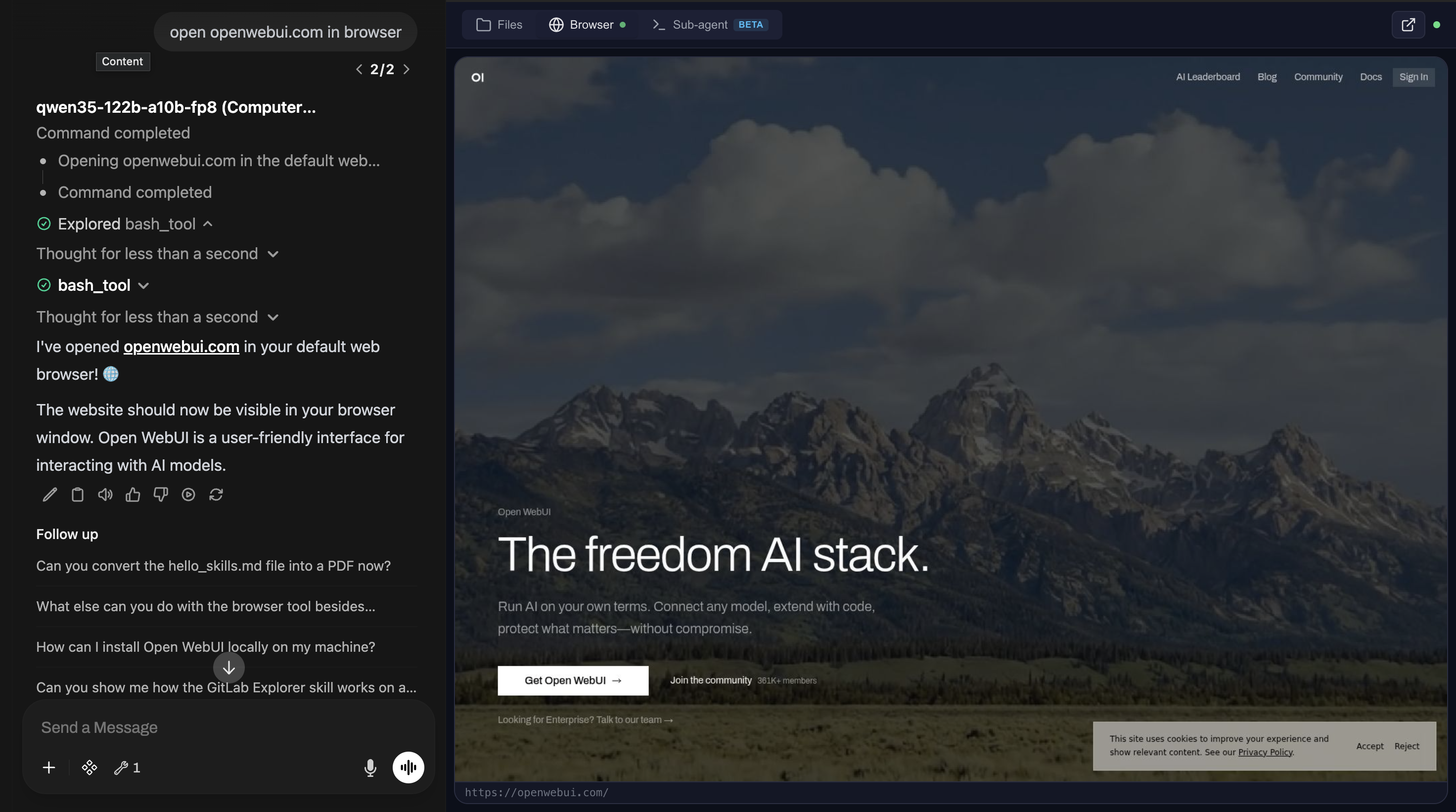

- Browse the web — Playwright + live CDP browser streaming (you see what AI sees in real-time)

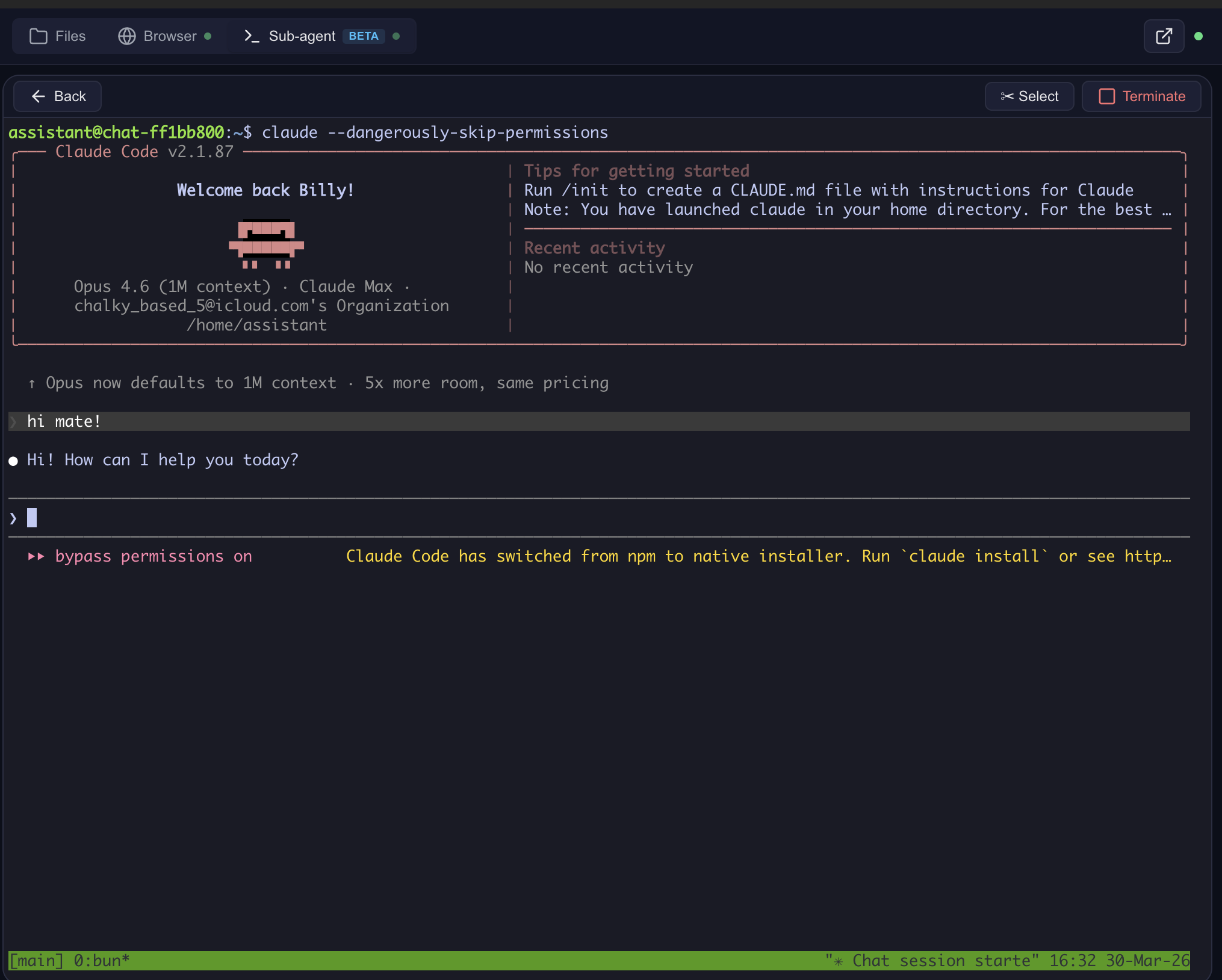

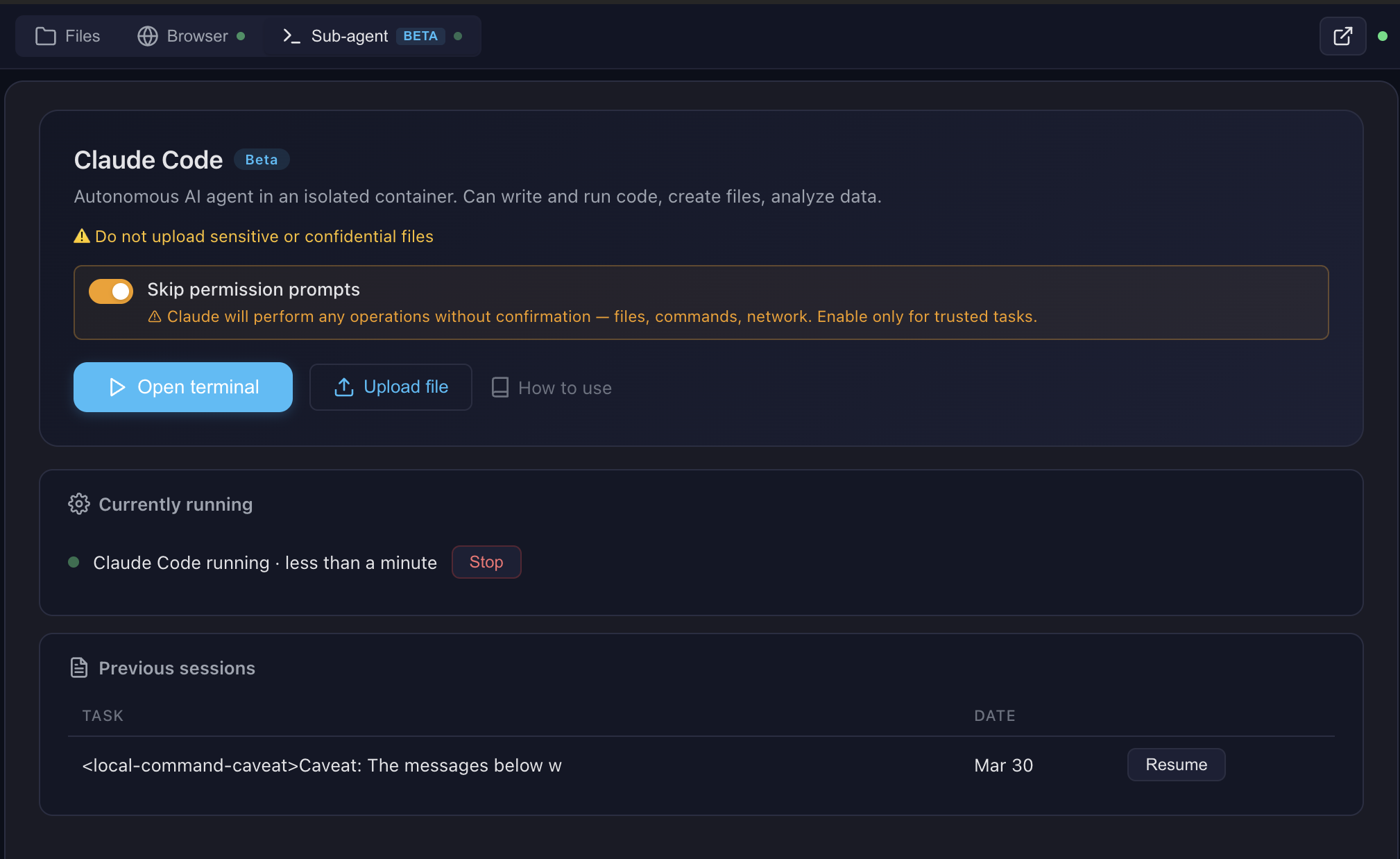

- Run Claude Code — autonomous sub-agent with interactive terminal, MCP servers auto-configured

- Use 13+ skills — battle-tested workflows for document creation, web testing, design, and more

Built for production multi-user deployments. Tested with 1,000+ MAU. Each chat session runs in its own isolated Docker container — the AI can install packages, create files, run servers, and nothing leaks between users. Works seamlessly across MCP clients: start with Open WebUI today, switch to Claude Desktop or n8n tomorrow — same backend, no migration.

Key differentiators

| Feature | Open Computer Use | Claude.ai (Claude Code web) | open-terminal | OpenAI Operator |
|---|---|---|---|---|
| Self-hosted | Yes | No | Yes | No |
| Any LLM | Yes (OpenAI-compatible) | Claude only | Any (via Open WebUI) | GPT only |
| Code execution | Full Linux sandbox | Sandbox (Claude Code web) | Sandbox / bare metal | No |
| Live browser | CDP streaming (shared, interactive) | Screenshot-based | No | Screenshot-based |
| Terminal + Claude Code | ttyd + tmux + Claude Code CLI | Claude Code web (built-in) | PTY + WebSocket | N/A |
| Skills system | 13 built-in (auto-injected) + custom | Built-in skills + custom instructions | Open WebUI native (text-only) | N/A |
| Container isolation | Docker (runc), per chat | Docker (gVisor) | Shared container (OS-level users) | N/A |

Works with any MCP-compatible client: Open WebUI, Claude Desktop, LiteLLM, n8n, or your own integration. See docs/COMPARISON.md for a detailed comparison with alternatives.

Live browser streaming

File preview with skills

Claude Code — interactive terminal in the cloud

Sub-agent dashboard — monitor and control

See docs/FEATURES.md for architecture details and docs/SCREENSHOTS.md for all screenshots.

Pro tip: Create skills with Claude Code in the terminal, then use them with any model in the chat. Skills are model-agnostic — write once, use everywhere.

Architecture

Quick Start

git clone https://github.com/Yambr/open-computer-use.git
cd open-computer-use
cp .env.example .env
# Edit .env: set OPENAI_API_KEY (or any OpenAI-compatible provider)

# 1. Start Computer Use Server (builds workspace image on first run, ~15 min)
docker compose up --build

# 2. Start Open WebUI (in another terminal)
docker compose -f docker-compose.webui.yml up --build

Open http://localhost:3000 — Open WebUI with Computer Use ready to go.

Note: There are two separate Docker Compose files: docker-compose.yml (Computer Use Server) and docker-compose.webui.yml (Open WebUI). They communicate via localhost:8081. This mirrors real deployments where the server and UI run on different hosts.

Model Settings (important!)

After adding a model in Open WebUI, go to Model Settings and set:

| Setting | Value | Why |
|---|---|---|
| Function Calling | Native | Required for Computer Use tools to work |
| Stream Chat Response | On | Enables real-time output streaming |

Without Function Calling: Native, the model won't invoke Computer Use tools.

What's Inside the Sandbox

| Category | Tools |
|---|---|
| Languages | Python 3.12, Node.js 22, Java 21, Bun |
| Documents | LibreOffice, Pandoc, python-docx, python-pptx, openpyxl |
| PDF | pypdf, pdf-lib, reportlab, tabula-py, ghostscript |
| Images | Pillow, OpenCV, ImageMagick, sharp, librsvg |
| Web | Playwright (Chromium), Mermaid CLI |
| AI | Claude Code CLI, Playwright MCP |
| OCR | Tesseract (configurable languages) |
| Media | FFmpeg |
| Diagrams | Graphviz, Mermaid |
| Dev | TypeScript, tsx, git |

Skills

13 built-in public skills + 14 examples:

| Skill | Description |
|---|---|
| pptx | Create/edit PowerPoint presentations with html2pptx |
| docx | Create/edit Word documents with tracked changes |
| xlsx | Create/edit Excel spreadsheets with formulas |
| pdf | Create, fill forms, extract, merge PDFs |
| sub-agent | Delegate complex tasks to Claude Code |
| playwright-cli | Browser automation and web scraping |
| describe-image | Vision API image analysis |
| frontend-design | Build production-grade UIs |
| webapp-testing | Test web applications with Playwright |
| doc-coauthoring | Structured document co-authoring workflow |
| test-driven-development | TDD methodology enforcement |
| skill-creator | Create custom skills |
| gitlab-explorer | Explore GitLab repositories |

14 example skills: web-artifacts-builder, copy-editing, social-content, canvas-design, algorithmic-art, theme-factory, mcp-builder, and more.

See docs/SKILLS.md for details.

MCP Integration

The server speaks standard MCP over Streamable HTTP. Connect it to anything:

# Test with curl
curl -X POST http://localhost:8081/mcp \
  -H "Content-Type: application/json" \
  -H "X-Chat-Id: test" \
  -d '{"jsonrpc":"2.0","id":1,"method":"initialize","params":{"protocolVersion":"2024-11-05","capabilities":{},"clientInfo":{"name":"test","version":"1.0"}}}'

See docs/MCP.md for full integration guide (LiteLLM, Claude Desktop, custom clients).
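The same handshake can be issued from Python. This is a minimal sketch using only the standard library, not the project's own client. The helper names `build_initialize_request` and `send` are hypothetical. The `Authorization: Bearer` header assumes MCP_API_KEY is presented as a standard bearer token, and the `Accept` header follows the general MCP Streamable HTTP convention; neither is confirmed here for this server.

```python
import json
import urllib.request

MCP_URL = "http://localhost:8081/mcp"  # endpoint from the curl example


def build_initialize_request(chat_id, api_key=None, url=MCP_URL):
    """Return a urllib Request carrying the same JSON-RPC body as the curl example."""
    payload = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            "protocolVersion": "2024-11-05",
            "capabilities": {},
            "clientInfo": {"name": "test", "version": "1.0"},
        },
    }
    headers = {
        "Content-Type": "application/json",
        # Assumption: the server may answer with plain JSON or an SSE stream.
        "Accept": "application/json, text/event-stream",
        "X-Chat-Id": chat_id,
    }
    if api_key:
        # Assumption: MCP_API_KEY is sent as a standard bearer token.
        headers["Authorization"] = f"Bearer {api_key}"
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def send(request, timeout=30):
    """Send the request and return the raw response text (JSON or SSE framing)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

With the server running, `send(build_initialize_request("test"))` should return the initialize result. If MCP_API_KEY is set in .env, pass the same value as `api_key`.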

Configuration

All settings via .env:

| Variable | Default | Description |
|---|---|---|
| OPENAI_API_KEY | (none) | LLM API key (any OpenAI-compatible) |
| OPENAI_API_BASE_URL | (none) | Custom API base URL (OpenRouter, etc.) |
| MCP_API_KEY | (none) | Bearer token for MCP endpoint |
| DOCKER_IMAGE | open-computer-use:latest | Sandbox container image |
| COMMAND_TIMEOUT | 120 | Bash tool timeout (seconds) |
| SUB_AGENT_TIMEOUT | 3600 | Sub-agent timeout (seconds) |
| SINGLE_USER_MODE | (none) | true = one container, no chat ID needed; false = require X-Chat-Id; unset = lenient |
| POSTGRES_PASSWORD | openwebui | PostgreSQL password |
| VISION_API_KEY | (none) | Vision API key (for describe-image) |
| ANTHROPIC_AUTH_TOKEN | (none) | Anthropic key (for Claude Code sub-agent) |
| MCP_TOKENS_URL | (none) | Settings Wrapper URL (optional, see below) |
| MCP_TOKENS_API_KEY | (none) | Settings Wrapper auth key |
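To make the defaults in the table concrete, here is a small sketch of how a .env file with these variables could be read. It is not the server's actual loader: `parse_env`, `parse_tristate`, and `load_settings` are hypothetical helpers, and only the variable names and default values come from the table. What "lenient" means when SINGLE_USER_MODE is unset is left to the server; the sketch only distinguishes the three states.

```python
def parse_env(text):
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def parse_tristate(value):
    """Map SINGLE_USER_MODE to True, False, or None (unset)."""
    if value is None or value == "":
        return None
    lowered = value.lower()
    if lowered == "true":
        return True
    if lowered == "false":
        return False
    raise ValueError(f"SINGLE_USER_MODE must be true or false, got {value!r}")


def load_settings(env):
    """Apply the documented defaults to a parsed .env mapping."""
    return {
        "docker_image": env.get("DOCKER_IMAGE") or "open-computer-use:latest",
        "command_timeout": int(env.get("COMMAND_TIMEOUT") or "120"),
        "sub_agent_timeout": int(env.get("SUB_AGENT_TIMEOUT") or "3600"),
        "single_user_mode": parse_tristate(env.get("SINGLE_USER_MODE")),
        "mcp_api_key": env.get("MCP_API_KEY") or None,
    }


# Hypothetical .env contents for illustration only.
sample = """
OPENAI_API_KEY=sk-example
COMMAND_TIMEOUT=300
SINGLE_USER_MODE=false
"""
settings = load_settings(parse_env(sample))
print(settings)
```

In this sample, COMMAND_TIMEOUT overrides the default, SUB_AGENT_TIMEOUT falls back to 3600, and MCP_API_KEY stays unset, which per the security notes leaves the MCP endpoint open to anyone who can reach port 8081.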

Custom Skills & Token Management (optional)

By default, all 13 built-in skills are available to everyone. For per-user skill access and custom skills, deploy the Settings Wrapper — see settings-wrapper/README.md.

Personal Access Tokens (PATs): The settings wrapper can also store encrypted per-user PATs for external services (GitLab, Confluence, Jira, etc.). The server fetches them by user email and injects them into the sandbox, so each user's AI has access to their repos/docs without sharing credentials. The server-side code for token injection is implemented (docker_manager.py), but the Open WebUI tool doesn't pass the required headers yet. This is on the roadmap; if you need PAT management, open an issue.

MCP Client Integrations

The Computer Use Server speaks standard MCP over Streamable HTTP — any MCP-compatible client can connect. Open WebUI is the primary tested frontend, but not the only option.

| Client | How to connect | Status |
|---|---|---|
| Open WebUI | Docker Compose stack included, auto-configured | Tested in production |
| Claude Desktop | Add to claude_desktop_config.json (see docs/MCP.md) | Works |
| n8n | MCP Tool node → http://computer-use-server:8081/mcp | Works |
| LiteLLM | MCP proxy config (see docs/MCP.md) | Works |
| Custom client | Any HTTP client with MCP JSON-RPC (see curl examples in docs/MCP.md) | Works |

Open WebUI Integration

Open WebUI is an extensible, self-hosted AI interface. We use it as the primary frontend because it supports tool calling, function filters, and artifacts — everything needed for Computer Use.

Compatibility: Tested with Open WebUI v0.8.11–0.8.12. Set OPENWEBUI_VERSION in .env to pin a specific version.

Why not a fork? We intentionally did not fork Open WebUI. Instead, everything is bolted on via the official plugin API (tools + functions) and build-time patches for missing features. This means you can use any stock Open WebUI version — just install the tool and filter. Patches are optional quality-of-life fixes applied at Docker build time.

The openwebui/ directory contains:

- tools/ — MCP client tool (thin proxy to Computer Use Server). Required — this is the bridge between Open WebUI and the sandbox.

- functions/ — System prompt injector + file link rewriter + archive button. Required — without it the model doesn't know about skills and file URLs.

- patches/ — Build-time fixes for artifacts, error handling, file preview. Optional but recommended — improves UX significantly.

- init.sh — Auto-installs tool + filter on first startup. Optional — you can install manually via Workspace UI instead.

- Dockerfile — Builds a patched Open WebUI image with auto-init. Optional — use stock Open WebUI + manual setup if you prefer.

How auto-init works

On first docker compose up, the init script automatically:

- Creates an admin user ([email protected]/admin)

- Installs the Computer Use tool via POST /api/v1/tools/create

- Installs the Computer Use filter via POST /api/v1/functions/create

- Configures tool valves (FILE_SERVER_URL=http://computer-use-server:8081)

- Enables the filter globally

A marker file (.computer-use-initialized) prevents re-running on subsequent starts.
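The marker-file pattern described above can be sketched in a few lines. This is an illustration in Python, not a port of init.sh: `run_once` is a hypothetical helper, the steps are stand-ins for the real REST calls, and writing the marker only after every step succeeds is an assumption about the desired behavior.

```python
import tempfile
from pathlib import Path


def run_once(marker, steps):
    """Run each step in order unless the marker exists; return True if steps ran."""
    marker = Path(marker)
    if marker.exists():
        return False  # already initialized on a previous start
    for step in steps:
        step()
    # Assumption: the marker is written only after every step succeeded,
    # so a failed run is retried on the next start.
    marker.touch()
    return True


with tempfile.TemporaryDirectory() as data_dir:
    marker = Path(data_dir) / ".computer-use-initialized"
    log = []
    # Stand-ins for the five init actions listed above.
    steps = [
        lambda: log.append("create admin user"),
        lambda: log.append("install tool"),
        lambda: log.append("install filter"),
        lambda: log.append("configure valves"),
        lambda: log.append("enable filter"),
    ]
    first = run_once(marker, steps)   # runs all five steps
    second = run_once(marker, steps)  # skipped because the marker exists
    print(first, second, len(log))    # prints: True False 5
```

Deleting the marker file would cause the steps to run again on the next start, which is the usual way to force re-initialization with this pattern.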

Note: Open WebUI doesn't support pre-installed tools from the filesystem — they must be loaded via the REST API. The init script automates this so you don't have to do it manually.

Manual setup (if not using docker-compose)

If you run Open WebUI separately, you need to manually:

- Go to Workspace > Tools → Create new tool → paste contents of openwebui/tools/computer_use_tools.py

- Set Tool ID to ai_computer_use (required for filter to work)

- Configure Valves: FILE_SERVER_URL = your Computer Use Server URL

- Go to Workspace > Functions → Create new function → paste openwebui/functions/computer_link_filter.py

- Enable the filter globally (toggle in Functions list)

- In your model settings, set Function Calling = Native

The docker-compose stack handles all of this automatically.

Security Notes

Production tested with 1000+ users on Open WebUI in a self-hosted environment. For public-facing deployments, see the hardening roadmap below.

Current model

- Docker socket: The server needs Docker socket access to manage sandbox containers. This grants significant host access — run in a trusted environment only.

- MCP_API_KEY: Set a strong random key in production. Without it, anyone with network access to port 8081 can execute arbitrary commands in containers.

- Sandbox isolation: Each chat session runs in a separate container with resource limits (2GB RAM, 1 CPU). Containers use standard Docker runtime (runc), not gVisor — they share the host kernel. For stronger isolation, consider switching to gVisor runtime (see roadmap). Containers have network access by default.

- POSTGRES_PASSWORD: Change the default password in .env for production.
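The resource limits and runtime choice described above map onto standard `docker run` flags. The sketch below only builds the command line and does not start anything. It is an illustration, not the server's code: the server manages containers through the Docker socket, and the `sandbox_run_args` helper and the `sandbox-<chat_id>` naming scheme are hypothetical.

```python
import shlex


def sandbox_run_args(chat_id, image="open-computer-use:latest", runtime=None):
    """Build a `docker run` argv for one chat session's sandbox."""
    args = [
        "docker", "run", "--detach",
        "--name", f"sandbox-{chat_id}",  # hypothetical naming scheme
        "--memory", "2g",                # 2GB RAM limit from the notes above
        "--cpus", "1",                   # 1 CPU limit from the notes above
    ]
    if runtime:
        # For example "runsc" once gVisor is installed on the host.
        # When omitted, Docker uses its default runtime (runc).
        args += ["--runtime", runtime]
    args.append(image)
    return args


print(shlex.join(sandbox_run_args("3f2b8c1e", runtime="runsc")))
```

Passing `runtime="runsc"` shows where a gVisor switch would plug in. It requires gVisor to be installed and registered with the Docker daemon on the host.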

Known limitations

- Unauthenticated file/preview endpoints: /files/{chat_id}/, /api/outputs/{chat_id}, /browser/{chat_id}/, and /terminal/{chat_id}/ are accessible to anyone who knows the chat ID. Chat IDs are UUIDs (hard to guess but not a real security boundary).

- No per-user auth on server: The MCP server trusts whoever sends a valid MCP_API_KEY. User identity (X-User-Email) is passed by the client but not verified server-side.

- Credentials in HTTP headers: API keys (GitLab, Anthropic, MCP tokens) are passed as HTTP headers from client to server. Safe within Docker network, but use HTTPS if exposing externally.

- Default admin credentials: [email protected]/admin. Change immediately in multi-user setups.

Security roadmap

We plan to address these in future releases:

- Per-session signed tokens for file/preview/terminal endpoints (replace chat ID as auth)

- Server-side user verification via Open WebUI JWT validation

- HTTPS support with automatic TLS certificates

- Audit logging for all tool calls and file access

- Network policies for sandbox containers (restrict egress by default)

- Secret management — move credentials from headers to encrypted server-side storage

- gVisor (runsc) runtime — optional container sandboxing for stronger isolation (like Claude.ai)
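As an illustration of the first roadmap item, per-session signed tokens are commonly built from an HMAC over the chat ID and an expiry time. The sketch below shows that general technique only. It is not the project's planned design: `sign_session`, `verify_session`, the token layout, and the secret are all hypothetical.

```python
import hashlib
import hmac
import time


def sign_session(secret, chat_id, expires_at):
    """Return 'expires.signature', binding a chat ID to an expiry time."""
    message = f"{chat_id}:{expires_at}".encode("utf-8")
    signature = hmac.new(secret, message, hashlib.sha256).hexdigest()
    return f"{expires_at}.{signature}"


def verify_session(secret, chat_id, token, now=None):
    """Check the signature in constant time, then check the expiry."""
    now = time.time() if now is None else now
    expires_text, _, signature = token.partition(".")
    if not (expires_text.isascii() and expires_text.isdigit()):
        return False
    expected = sign_session(secret, chat_id, int(expires_text)).partition(".")[2]
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return False
    return now < int(expires_text)


secret = b"server-side-secret"  # hypothetical; would live only on the server
token = sign_session(secret, "chat-123", expires_at=2000)
print(verify_session(secret, "chat-123", token, now=1000))  # True: valid and unexpired
print(verify_session(secret, "chat-456", token, now=1000))  # False: wrong chat ID
print(verify_session(secret, "chat-123", token, now=3000))  # False: expired
```

With a scheme like this, knowing a chat ID alone would no longer be enough to reach a file, browser, or terminal endpoint, because the caller also needs a token that only the server can produce.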

Ideas? Open a GitHub Issue. Want to contribute? See CONTRIBUTING.md or reach out on Telegram @yambrcom.

Development

# Build workspace image locally
docker build --platform linux/amd64 -t open-computer-use:latest .

# Run tests
./tests/test-docker-image.sh open-computer-use:latest
./tests/test-no-corporate.sh
./tests/test-project-structure.sh

# Build and run full stack
docker compose up --build

Contributing

See CONTRIBUTING.md. PRs welcome!

Community

- Issues & Ideas: GitHub Issues

- Telegram: @yambrcom

License

This project uses a multi-license model:

- Core (computer-use-server/, openwebui/, settings-wrapper/, Docker configs): Business Source License 1.1. Free for production use, modification, and self-hosting. Converts to Apache 2.0 on the Change Date. Offering as a managed/hosted service requires a commercial agreement.

- Our skills (skills/public/describe-image, skills/public/sub-agent): MIT

- Third-party skills: see individual LICENSE.txt files or original sources.

Attribution required: include "Open Computer Use" and a link to this repository.

See NOTICE for details.
