Litmus MCP Server

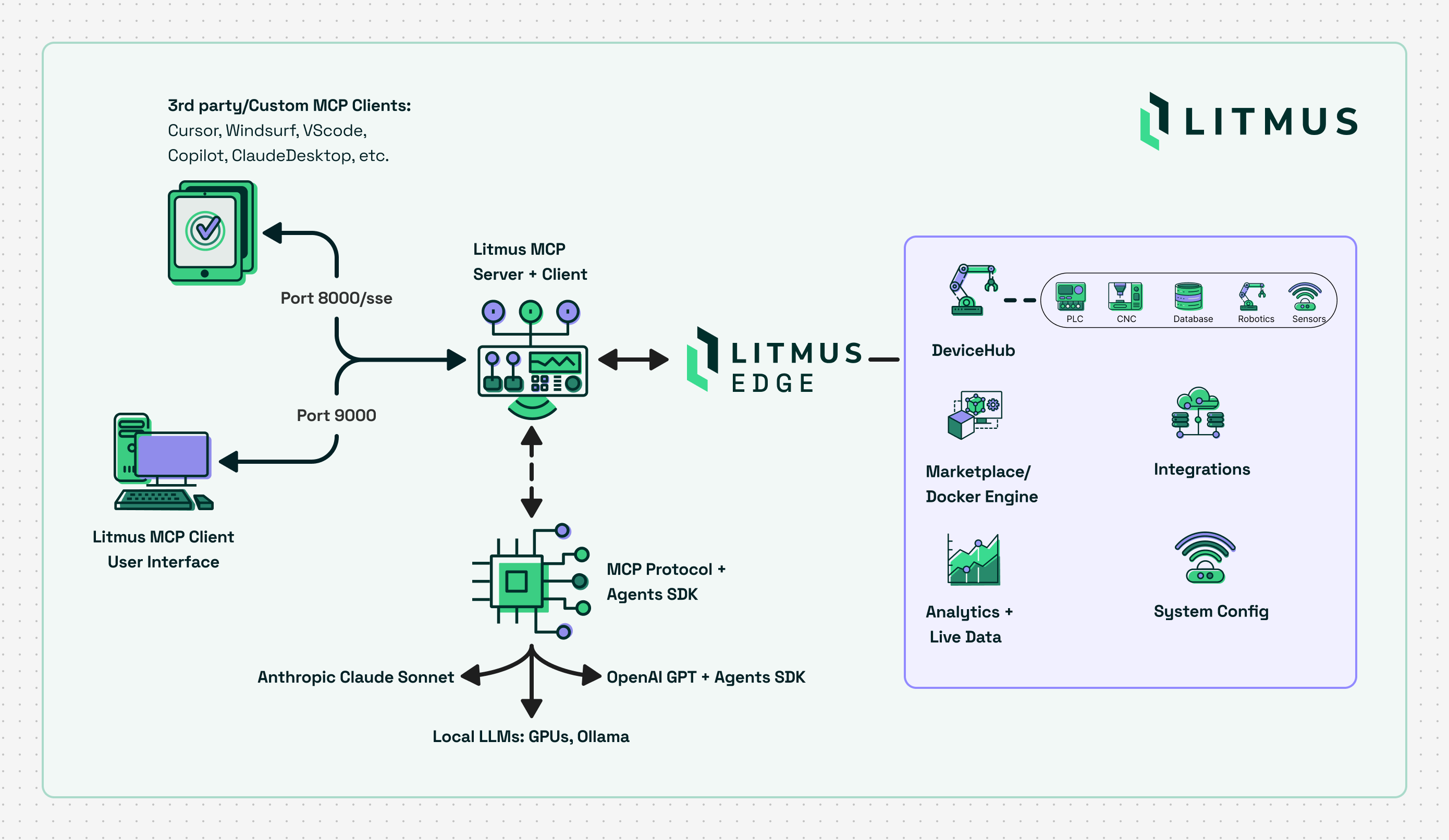

Enables LLMs and intelligent systems to interact with Litmus Edge for device configuration, monitoring, and management.

The official Litmus Automation Model Context Protocol (MCP) Server enables LLMs and intelligent systems to interact with Litmus Edge for device configuration, monitoring, and management. It is built on top of the MCP SDK and adheres to the Model Context Protocol spec.

Quick Launch

Start an HTTP SSE MCP Server using Docker

Run the server in Docker (HTTP SSE only)

docker run -d --name litmus-mcp-server -p 8000:8000 ghcr.io/litmusautomation/litmus-mcp-server:latest

NOTE: The Litmus MCP Server image is built for the linux/amd64 platform. When running in Docker on an ARM64 host, specify the platform explicitly with the --platform argument:

docker run -d --name litmus-mcp-server --platform linux/amd64 -p 8000:8000 ghcr.io/litmusautomation/litmus-mcp-server:main
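The platform note above can be folded into a small launcher script. This is a minimal sketch, assuming Python 3.9+ on the host; the helper name `build_docker_run_args` is hypothetical, and the image tag is copied from the first command in this section.

```python
import platform

IMAGE = "ghcr.io/litmusautomation/litmus-mcp-server:latest"


def build_docker_run_args(machine: str) -> list[str]:
    """Return a `docker run` argument list, adding --platform on ARM64 hosts."""
    args = ["docker", "run", "-d", "--name", "litmus-mcp-server"]
    # ARM64 hosts typically report "arm64" (macOS) or "aarch64" (Linux).
    if machine.lower() in ("arm64", "aarch64"):
        args += ["--platform", "linux/amd64"]
    args += ["-p", "8000:8000", IMAGE]
    return args


if __name__ == "__main__":
    # Print the command for the current host instead of running it.
    print(" ".join(build_docker_run_args(platform.machine())))
```

Printing the command rather than executing it keeps the sketch free of side effects; pass the list to `subprocess.run` if you want the script to start the container itself.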

Web UI

The Docker image includes a built-in chat interface that lets you interact with Litmus Edge using natural language — no MCP client configuration required.

Start the server with both ports exposed:

docker run -d --name litmus-mcp-server \
  -p 8000:8000 -p 9000:9000 \
  -e ANTHROPIC_API_KEY=<key> \
  ghcr.io/litmusautomation/litmus-mcp-server:latest

- :9000 is the Web UI (chat interface). Open http://localhost:9000 in your browser, add a Litmus Edge instance via the config page, and start chatting.

- :8000 is the SSE endpoint for external MCP clients (Claude Desktop, Cursor, VS Code, etc.). It remains available as normal.

Supported LLM providers: Anthropic Claude, OpenAI, and Google Gemini. Provide one or more keys at startup (ANTHROPIC_API_KEY, OPENAI_API_KEY, GEMINI_API_KEY) or enter them through the Web UI's setup screen. The active provider and model are switchable from the Web UI's config page at any time.

Multiple Litmus Edge instances: The Web UI lets you register and switch between multiple Litmus Edge devices from a single MCP server. Each instance keeps its own URL and OAuth2 credentials; the active instance's credentials are mirrored into EDGE_URL / EDGE_API_CLIENT_ID / EDGE_API_CLIENT_SECRET automatically. Manage instances under Config → Litmus Edge Instances, or check status per-instance from the Health page.

Live Litmus documentation as MCP Resources: The server exposes litmus://docs/<section> URIs that fetch live content from docs.litmus.io on demand, so MCP-aware clients can pull current reference material directly into the model's context.

If you deploy the MCP server and web client on separate hosts, set MCP_SSE_URL to point the web client at the server:

-e MCP_SSE_URL=http://<mcp-server-host>:8000/sse

Persistent Configuration

By default, configuration saved through the Web UI (API keys, Litmus Edge instances, model preferences, connection settings) is written to .env inside the container and is lost when the container is removed.

To retain configuration across container restarts and replacements, mount a host file over /app/.env:

# One-time setup — the host file must exist before docker run

mkdir -p /opt/litmus-mcp

touch /opt/litmus-mcp/.env

# Run with the volume mount

docker run -d --name litmus-mcp-server \
  -p 8000:8000 -p 9000:9000 \
  -v /opt/litmus-mcp/.env:/app/.env \
  ghcr.io/litmusautomation/litmus-mcp-server:latest

Any configuration you save in the UI is written to /opt/litmus-mcp/.env on the host. A new container started with the same -v flag will pick it up automatically on startup.

Note: The host-side file must be created with touch before running the container. If it does not exist, Docker creates a directory at that path and the application will fail to write configuration.
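The same pre-flight step can be scripted so that a missing file, or a directory left behind by an earlier run, is caught before the container starts. This is a minimal sketch using only the standard library; the function name `ensure_env_file` is hypothetical and not part of litmus-mcp-server.

```python
from pathlib import Path


def ensure_env_file(path: str) -> Path:
    """Create the host-side .env file (and its parent directory) if missing."""
    env_file = Path(path)
    if env_file.is_dir():
        # Docker creates a directory here when the file did not exist at mount time.
        raise IsADirectoryError(f"{env_file} is a directory; remove it and retry")
    env_file.parent.mkdir(parents=True, exist_ok=True)
    env_file.touch(exist_ok=True)  # leaves existing content untouched
    return env_file
```

For the layout used in this section, call `ensure_env_file("/opt/litmus-mcp/.env")` before `docker run`.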

Docker Compose equivalent:

services:
  litmus-mcp-server:
    image: ghcr.io/litmusautomation/litmus-mcp-server:latest
    ports:
      - "8000:8000"
      - "9000:9000"
    volumes:
      - /opt/litmus-mcp/.env:/app/.env

Claude Code CLI

Run Claude from a directory that includes a configuration file at ~/.claude/mcp.json:

{

"mcpServers": {

"litmus-mcp-server": {

"type": "sse",

"url": "http://localhost:8000/sse",

"headers": {

"EDGE_URL": "${EDGE_URL}",

"EDGE_API_CLIENT_ID": "${EDGE_API_CLIENT_ID}",

"EDGE_API_CLIENT_SECRET": "${EDGE_API_CLIENT_SECRET}",

"NATS_SOURCE": "${NATS_SOURCE}",

"NATS_PORT": "${NATS_PORT:-4222}",

"NATS_USER": "${NATS_USER}",

"NATS_PASSWORD": "${NATS_PASSWORD}",

"INFLUX_HOST": "${INFLUX_HOST}",

"INFLUX_PORT": "${INFLUX_PORT:-8086}",

"INFLUX_DB_NAME": "${INFLUX_DB_NAME:-tsdata}",

"INFLUX_USERNAME": "${INFLUX_USERNAME}",

"INFLUX_PASSWORD": "${INFLUX_PASSWORD}"

}

}

}

}
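The `${VAR}` and `${VAR:-default}` placeholders in the configuration above follow the shell-style convention: a value from the environment wins, and the text after `:-` is used when the variable is unset or empty. The sketch below only illustrates that substitution rule so you can predict what each header resolves to. It is not Claude Code's implementation, the `expand` helper is hypothetical, and raising an error on an unset variable without a default is a choice made for this example.

```python
import re

# Matches ${NAME} and ${NAME:-default}
_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)(?::-([^}]*))?\}")


def expand(text: str, env: dict[str, str]) -> str:
    """Expand ${VAR} and ${VAR:-default} using the given environment mapping."""

    def _sub(match: re.Match) -> str:
        name, default = match.group(1), match.group(2)
        current = env.get(name)
        if current:  # set and non-empty: the environment value wins
            return current
        if default is not None:  # unset or empty, with a fallback
            return default
        if current is None:  # unset and no fallback
            raise KeyError(f"{name} is not set and has no default")
        return current  # set but empty, no fallback

    return _PLACEHOLDER.sub(_sub, text)
```

For example, `expand("${NATS_PORT:-4222}", {})` yields `"4222"`, while the same call with `{"NATS_PORT": "4223"}` yields `"4223"`.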

Cursor IDE

Add to ~/.cursor/mcp.json or .cursor/mcp.json:

{

"mcpServers": {

"litmus-mcp-server": {

"url": "http://<MCP_SERVER_IP>:8000/sse",

"headers": {

"EDGE_URL": "https://<LITMUSEDGE_IP>",

"EDGE_API_CLIENT_ID": "<oauth2_client_id>",

"EDGE_API_CLIENT_SECRET": "<oauth2_client_secret>",

"NATS_SOURCE": "<LITMUSEDGE_IP>",

"NATS_PORT": "4222",

"NATS_USER": "<access_token_username>",

"NATS_PASSWORD": "<access_token_from_litmusedge>",

"INFLUX_HOST": "<LITMUSEDGE_IP>",

"INFLUX_PORT": "8086",

"INFLUX_DB_NAME": "tsdata",

"INFLUX_USERNAME": "<datahub_username>",

"INFLUX_PASSWORD": "<datahub_password>"

}

}

}

}

VS Code / GitHub Copilot

Manual Configuration

In VS Code: Open User Settings (JSON) → Add:

{

"mcpServers": {

"litmus-mcp-server": {

"url": "http://<MCP_SERVER_IP>:8000/sse",

"headers": {

"EDGE_URL": "https://<LITMUSEDGE_IP>",

"EDGE_API_CLIENT_ID": "<oauth2_client_id>",

"EDGE_API_CLIENT_SECRET": "<oauth2_client_secret>",

"NATS_SOURCE": "<LITMUSEDGE_IP>",

"NATS_PORT": "4222",

"NATS_USER": "<access_token_username>",

"NATS_PASSWORD": "<access_token_from_litmusedge>",

"INFLUX_HOST": "<LITMUSEDGE_IP>",

"INFLUX_PORT": "8086",

"INFLUX_DB_NAME": "tsdata",

"INFLUX_USERNAME": "<datahub_username>",

"INFLUX_PASSWORD": "<datahub_password>"

}

}

}

}

Or use .vscode/mcp.json in your project.

Windsurf

Add to ~/.codeium/windsurf/mcp_config.json:

{

"mcpServers": {

"litmus-mcp-server": {

"url": "http://<MCP_SERVER_IP>:8000/sse",

"headers": {

"EDGE_URL": "https://<LITMUSEDGE_IP>",

"EDGE_API_CLIENT_ID": "<oauth2_client_id>",

"EDGE_API_CLIENT_SECRET": "<oauth2_client_secret>",

"NATS_SOURCE": "<LITMUSEDGE_IP>",

"NATS_PORT": "4222",

"NATS_USER": "<access_token_username>",

"NATS_PASSWORD": "<access_token_from_litmusedge>",

"INFLUX_HOST": "<LITMUSEDGE_IP>",

"INFLUX_PORT": "8086",

"INFLUX_DB_NAME": "tsdata",

"INFLUX_USERNAME": "<datahub_username>",

"INFLUX_PASSWORD": "<datahub_password>"

}

}

}

}

STDIO with Claude Desktop

This MCP server supports local connections with Claude Desktop and other applications via standard input/output (STDIO): https://modelcontextprotocol.io/legacy/concepts/transports

To use STDIO, clone the repository, edit config.py to enable STDIO, run the server as a local process, and update the Claude Desktop MCP server configuration file to point at it:

Clone

# Clone

git clone https://github.com/litmusautomation/litmus-mcp-server.git

Set the ENABLE_STDIO default to 'true' in src/config.py:

ENABLE_STDIO = os.getenv("ENABLE_STDIO", "true").lower() in ("true", "1", "yes")
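Because the line above reads the environment first and only falls back to the default, the flag can also be switched without editing the file. The sketch below mirrors that expression in a standalone function to show which values count as enabled. The name `stdio_enabled` is hypothetical, and the mapping parameter exists only to make the behavior easy to try.

```python
import os


def stdio_enabled(environ=os.environ) -> bool:
    """Mirror the ENABLE_STDIO check: default on, truthy strings only."""
    return environ.get("ENABLE_STDIO", "true").lower() in ("true", "1", "yes")
```

Under this check, an unset variable and the values `true`, `1`, and `yes` (in any letter case) enable STDIO; anything else, including `on` or `false`, disables it.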

Run the server

# Run using uv (change into the repository before syncing dependencies)
cd /path/to/litmus-mcp-server
uv sync
uv run python3 src/server.py

# Otherwise, install with pip
cd litmus-mcp-server
pip install -e .
python3 src/server.py

Add the JSON server definition to your Claude Desktop config file:

- macOS: ~/Library/Application Support/Claude/claude_desktop_config.json

- Windows: %APPDATA%\Claude\claude_desktop_config.json

- Linux: ~/.config/Claude/claude_desktop_config.json

{

"mcpServers": {

"litmus-mcp-server": {

"command": "/path/to/.venv/bin/python3",

"args": [

"/absolute/path/to/litmus-mcp-server/src/server.py"

],

"env": {

"PYTHONPATH": "/absolute/path/to/litmus-mcp-server/src",

"EDGE_URL": "https://<LITMUSEDGE_IP>",

"EDGE_API_CLIENT_ID": "<oauth2_client_id>",

"EDGE_API_CLIENT_SECRET": "<oauth2_client_secret>",

"NATS_SOURCE": "<LITMUSEDGE_IP>",

"NATS_PORT": "4222",

"NATS_USER": "<access_token_username>",

"NATS_PASSWORD": "<access_token_from_litmusedge>",

"INFLUX_HOST": "<LITMUSEDGE_IP>",

"INFLUX_PORT": "8086",

"INFLUX_DB_NAME": "tsdata",

"INFLUX_USERNAME": "<datahub_username>",

"INFLUX_PASSWORD": "<datahub_password>"

}

}

}

}
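If the Claude Desktop config file already lists other MCP servers, editing it by hand risks dropping them. The following is a minimal sketch, assuming the file is plain JSON with a top-level "mcpServers" object as shown above; the helper `add_server` is hypothetical and not part of litmus-mcp-server.

```python
import json
from pathlib import Path


def add_server(config_path: str, name: str, entry: dict) -> dict:
    """Add or replace one entry under "mcpServers", keeping other servers."""
    path = Path(config_path)
    text = path.read_text(encoding="utf-8") if path.exists() else ""
    config = json.loads(text) if text.strip() else {}
    config.setdefault("mcpServers", {})[name] = entry
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

Pass the "litmus-mcp-server" object from the example above as `entry`, then restart Claude Desktop so it rereads the file.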

Tips

For development, use Python virtual environments, for example to isolate differing mcp library versions between development clients such as 'npx @modelcontextprotocol/inspector' and litmus-mcp-server:

{

"mcpServers": {

"litmus-mcp-server": {

"command": "/absolute/path/to/litmus-mcp-server/.venv/bin/python",

"args": ["/absolute/path/to/litmus-mcp-server/src/server.py"],

"env": { /* same as above */ }

}

}

}

See claude_desktop_config_venv.example.json for the complete template.

Header Configuration Guide:

- EDGE_URL: Litmus Edge base URL (include https://)

- EDGE_API_CLIENT_ID / EDGE_API_CLIENT_SECRET: OAuth2 credentials from Litmus Edge

- NATS_SOURCE: Litmus Edge IP (no http/https)

- NATS_USER / NATS_PASSWORD: Access token credentials from System → Access Control → Tokens

- INFLUX_HOST: Litmus Edge IP (no http/https)

- INFLUX_USERNAME / INFLUX_PASSWORD: DataHub user credentials
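The two formatting rules in this guide (a scheme on EDGE_URL, no scheme on the NATS and InfluxDB hosts) are easy to mix up, so a quick check before pasting values into a client config can help. This is a minimal sketch that covers only those two rules; the function `check_headers` is hypothetical and does not contact Litmus Edge.

```python
def check_headers(headers: dict[str, str]) -> list[str]:
    """Return a list of formatting problems found in the header values."""
    problems = []
    # EDGE_URL is a base URL and must carry the https:// scheme.
    if not headers.get("EDGE_URL", "").startswith("https://"):
        problems.append("EDGE_URL should include https://")
    # NATS_SOURCE and INFLUX_HOST are bare addresses without a scheme.
    for key in ("NATS_SOURCE", "INFLUX_HOST"):
        if "://" in headers.get(key, ""):
            problems.append(f"{key} should be an IP address without http/https")
    return problems
```

An empty list means both rules are satisfied; it says nothing about whether the credentials themselves are valid.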

Available Tools

| Category | Function Name | Description |
|---|---|---|
| DeviceHub | get_litmusedge_driver_list | List supported Litmus Edge drivers (e.g., ModbusTCP, OPCUA, BACnet). |
| | get_devicehub_devices | List all configured DeviceHub devices with connection settings and status. |
| | create_devicehub_device | Create a new device with specified driver and default configuration. |
| | get_devicehub_device_tags | Retrieve all tags (data points/registers) for a specific device. |
| | get_current_value_of_devicehub_tag | Read the current real-time value of a specific device tag. |
| Device Identity | get_litmusedge_friendly_name | Get the human-readable name assigned to the Litmus Edge device. |
| | set_litmusedge_friendly_name | Update the friendly name of the Litmus Edge device. |
| LEM Integration | get_cloud_activation_status | Check cloud registration and Litmus Edge Manager (LEM) connection status. |
| Docker Management | get_all_containers_on_litmusedge | List all Docker containers running on Litmus Edge Marketplace. |
| | run_docker_container_on_litmusedge | Deploy and run a new Docker container on Litmus Edge Marketplace. |
| NATS Topics * | get_current_value_from_topic | Subscribe to a NATS topic and return the next published message. |
| | get_multiple_values_from_topic | Collect multiple sequential values from a NATS topic for trend analysis. |
| InfluxDB ** | get_historical_data_from_influxdb | Query historical time-series data from InfluxDB by measurement and time range. |
| Digital Twins | list_digital_twin_models | List all Digital Twin models with ID, name, description, and version. |
| | list_digital_twin_instances | List all Digital Twin instances or filter by model ID. |
| | create_digital_twin_instance | Create a new Digital Twin instance from an existing model. |
| | list_static_attributes | List static attributes (fixed key-value pairs) for a model or instance. |
| | list_dynamic_attributes | List dynamic attributes (real-time data points) for a model or instance. |
| | list_transformations | List data transformation rules configured for a Digital Twin model. |
| | get_digital_twin_hierarchy | Get the hierarchy configuration for a Digital Twin model. |
| | save_digital_twin_hierarchy | Save a new hierarchy configuration to a Digital Twin model. |

Tool Use Notes

* NATS Topic Tools Requirements:

To use get_current_value_from_topic and get_multiple_values_from_topic, you must configure access control on Litmus Edge:

- Navigate to: Litmus Edge → System → Access Control → Tokens

- Create or configure an access token with appropriate permissions

- Provide the token in your MCP client configuration headers

** InfluxDB Tools Requirements:

To use get_historical_data_from_influxdb, you must allow InfluxDB port access:

- Navigate to: Litmus Edge → System → Network → Firewall

- Add a firewall rule to allow port 8086 on TCP

- Ensure InfluxDB is accessible from the MCP server host
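To confirm the firewall rule took effect, you can test TCP reachability from the MCP server host. This is a minimal sketch using only the standard library; the function name `port_open` is hypothetical, and the address in the usage note is a placeholder for your Litmus Edge IP.

```python
import socket


def port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

For example, `port_open("<LITMUSEDGE_IP>", 8086)` checks InfluxDB, and the same call with port 4222 checks NATS. A successful connection shows only that the port is reachable, not that the credentials are accepted.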

Litmus Central

Download or try Litmus Edge via Litmus Central.

© 2026 Litmus Automation, Inc. All rights reserved.
