MCP Context Server

Server providing persistent multimodal context storage for LLM agents.

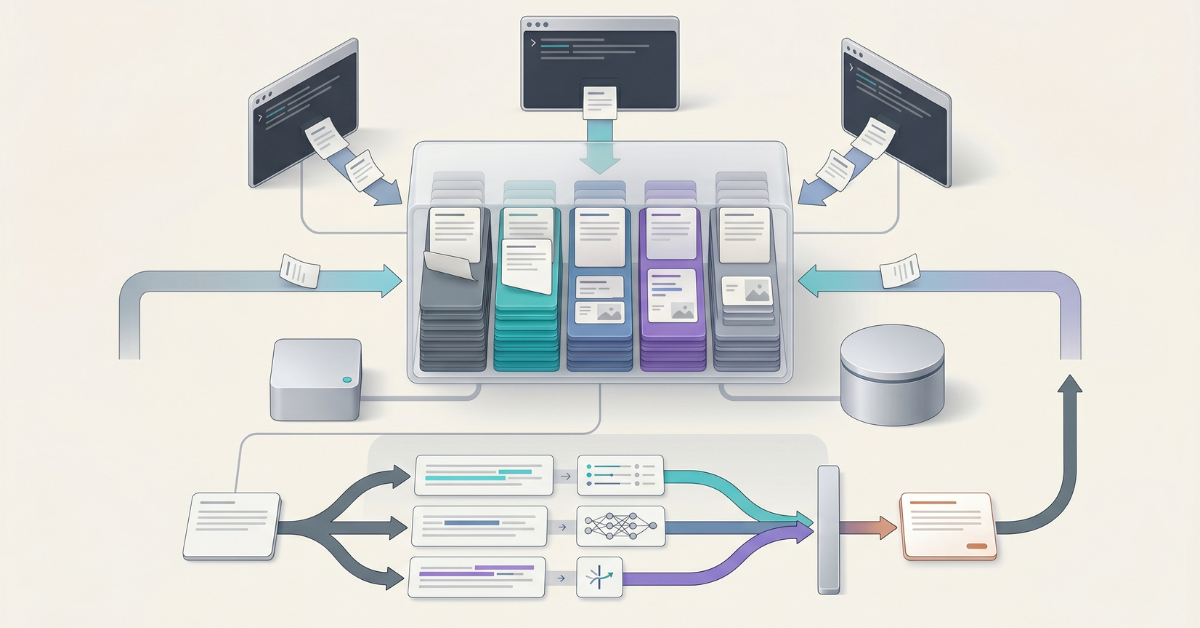

A high-performance Model Context Protocol (MCP) server providing persistent multimodal context storage for LLM agents. Built with FastMCP, this server enables seamless context sharing across multiple agents working on the same task through thread-based scoping.

Key Features

- Multimodal Context Storage: Store and retrieve both text and images

- Thread-Based Scoping: Agents working on the same task share context through thread IDs

- Flexible Metadata Filtering: Store custom structured data with any JSON-serializable fields and filter using 16 powerful operators

- Date Range Filtering: Filter context entries by creation timestamp using ISO 8601 format

- Tag-Based Organization: Efficient context retrieval with normalized, indexed tags

- Summary Generation: Optional automatic LLM-based summarization returned alongside truncated text_content in all search tool results for better agent context efficiency (enabled by default with Ollama)

- Full-Text Search: Optional linguistic search with stemming, ranking, boolean queries (FTS5/tsvector), and cross-encoder reranking

- Semantic Search: Optional vector similarity search for meaning-based retrieval with cross-encoder reranking

- Hybrid Search: Optional combined FTS + semantic search using Reciprocal Rank Fusion (RRF) with cross-encoder reranking

- Cross-Encoder Reranking: Automatic result refinement using FlashRank cross-encoder models for improved search precision (enabled by default)

- Multiple Database Backends: Choose between SQLite (default, zero-config) or PostgreSQL (high-concurrency, production-grade)

- High Performance: WAL mode (SQLite) / MVCC (PostgreSQL), strategic indexing, and async operations

- MCP Standard Compliance: Works with Claude Code, LangGraph, and any MCP-compatible client

- Production Ready: Comprehensive test coverage, type safety, and robust error handling
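To make the thread-based scoping and date range filtering features above more concrete, here is a minimal sketch in plain Python. The `Entry` record and the `filter_entries` helper are hypothetical and are not the server's API; only the ISO 8601 timestamp format and the idea of a shared thread ID come from the feature list.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Entry:
    # Hypothetical record shape, for illustration only.
    thread_id: str
    text: str
    created_at: str  # ISO 8601 timestamp


def parse_ts(value: str) -> datetime:
    """Parse an ISO 8601 timestamp; treat naive values as UTC."""
    ts = datetime.fromisoformat(value)
    return ts if ts.tzinfo else ts.replace(tzinfo=timezone.utc)


def filter_entries(entries, thread_id, start, end):
    """Keep entries from one thread whose timestamp lies in [start, end]."""
    lo, hi = parse_ts(start), parse_ts(end)
    return [
        e for e in entries
        if e.thread_id == thread_id and lo <= parse_ts(e.created_at) <= hi
    ]


entries = [
    Entry("task-1", "plan", "2025-01-10T09:00:00+00:00"),
    Entry("task-1", "result", "2025-02-01T12:00:00+00:00"),
    Entry("task-2", "other", "2025-01-15T08:00:00+00:00"),
]
# Only the January entry from thread "task-1" is selected.
selected = filter_entries(
    entries, "task-1", "2025-01-01T00:00:00", "2025-01-31T23:59:59"
)
```

Agents that pass the same thread ID see the same entries, while the date bounds narrow the result to a time window.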

Connecting to Your AI Assistant

The fastest way to connect the MCP Context Server to Claude Code is the one-command Docker bootstrap.

For step-by-step instructions, prerequisites, troubleshooting, and update/uninstall commands, see the Connecting to Your AI Assistant Guide.

Environment Configuration

The server is fully configured via environment variables, supporting core settings, transport, authentication, embedding providers, summary generation, search features, database tuning, and more. Variables can be set in your MCP client configuration, in a .env file, or directly in the shell.

For the complete reference of all environment variables with types, defaults, constraints, and descriptions, see the Environment Variables Reference.

Summary Generation

Summary generation automatically creates a concise LLM-based summary for each stored context entry. The summary is returned in the summary field of all search tool results alongside the truncated text_content, so agents can judge relevance without fetching full entries.

For detailed instructions including all providers (Ollama, OpenAI, Anthropic), model selection, and custom prompt configuration, see the Summary Generation Guide.

Semantic Search

For detailed instructions on enabling optional semantic search with multiple embedding providers (Ollama, OpenAI, Azure, HuggingFace, Voyage), see the Semantic Search Guide.

Full-Text Search

For full-text search with linguistic processing, stemming, ranking, and boolean queries, see the Full-Text Search Guide.

Hybrid Search

For combined FTS + semantic search using Reciprocal Rank Fusion (RRF), see the Hybrid Search Guide.
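As a rough illustration of Reciprocal Rank Fusion, the sketch below merges two ranked lists of entry IDs by summing 1 / (k + rank) for each list in which an ID appears. The function name, the sample IDs, and the constant k = 60 (a commonly used value) are assumptions for this example; the server's actual parameters are described in the Hybrid Search Guide.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse ranked ID lists: score(d) = sum over lists of 1 / (k + rank)."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score; break ties by ID for a deterministic order.
    return sorted(scores, key=lambda d: (-scores[d], d))


fts_hits = ["a", "b", "c"]        # hypothetical full-text search ranking
semantic_hits = ["b", "d", "a"]   # hypothetical semantic search ranking
fused = reciprocal_rank_fusion([fts_hits, semantic_hits])
# fused == ["b", "a", "d", "c"]
```

Entries that rank well in both lists rise to the top, and because only ranks are used, the two search methods do not need comparable score scales.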

Metadata Filtering

For comprehensive metadata filtering including 16 operators, nested JSON paths, and performance optimization, see the Metadata Guide.
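The idea of filtering on nested JSON paths with operators can be sketched as follows. The operator names (`eq`, `gt`, `contains`, and so on), the dotted path syntax, and the `matches` helper are hypothetical stand-ins; the server's real 16 operators and path syntax are documented in the Metadata Guide.

```python
import operator

# Hypothetical operator names; the server's actual operators may differ.
OPERATORS = {
    "eq": operator.eq,
    "ne": operator.ne,
    "gt": operator.gt,
    "lt": operator.lt,
    "contains": lambda value, target: target in value,
}


def get_path(metadata, path):
    """Resolve a dotted path such as 'build.status' in nested dicts."""
    current = metadata
    for key in path.split("."):
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


def matches(metadata, filters):
    """Return True when every (path, op, target) filter holds."""
    for path, op, target in filters:
        value = get_path(metadata, path)
        # In this sketch a missing field never matches, whatever the operator.
        if value is None or not OPERATORS[op](value, target):
            return False
    return True


meta = {"priority": 7, "build": {"status": "failed", "tags": ["ci", "nightly"]}}
is_match = matches(meta, [("priority", "gt", 5), ("build.status", "eq", "failed")])
```

In a real backend such filters would typically be translated into indexed SQL expressions rather than evaluated in Python.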

Database Backends

The server supports multiple database backends, selectable via the STORAGE_BACKEND environment variable. SQLite (default) provides zero-configuration local storage suited to single-user deployments. PostgreSQL targets multi-user and high-traffic deployments, with a stated 10x+ write throughput.

For detailed configuration instructions including PostgreSQL setup with Docker, Supabase integration, connection methods, and troubleshooting, see the Database Backends Guide.
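Backend selection through an environment variable can be sketched like this. Only the variable name STORAGE_BACKEND and the SQLite default come from the text above; the accepted values `sqlite` and `postgresql` and the `resolve_backend` helper are assumptions for illustration, so check the Database Backends Guide for the real values.

```python
import os

# Assumed values for illustration; the real accepted values may differ.
SUPPORTED_BACKENDS = {"sqlite", "postgresql"}


def resolve_backend(env=None):
    """Read STORAGE_BACKEND, falling back to SQLite when it is unset."""
    env = os.environ if env is None else env
    backend = env.get("STORAGE_BACKEND", "sqlite").strip().lower()
    if backend not in SUPPORTED_BACKENDS:
        raise ValueError(f"Unsupported STORAGE_BACKEND: {backend!r}")
    return backend


default_backend = resolve_backend({})
chosen_backend = resolve_backend({"STORAGE_BACKEND": "postgresql"})
```

Failing fast on an unknown value surfaces a misconfiguration at startup instead of at the first database call.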

API Reference

The MCP Context Server exposes 13 MCP tools for context management:

Core Operations: store_context, search_context, get_context_by_ids, delete_context, update_context, list_threads, get_statistics

Search Tools: semantic_search_context, fts_search_context, hybrid_search_context

Batch Operations: store_context_batch, update_context_batch, delete_context_batch

For complete tool documentation including parameters, return values, filtering options, and examples, see the API Reference.
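For orientation, MCP clients invoke tools with a JSON-RPC 2.0 request using the `tools/call` method. The sketch below builds such a request for store_context. The `build_tool_call` helper and the argument names (`thread_id`, `text`, `tags`) are hypothetical; the real parameter schema is in the API Reference, and an MCP client library would normally construct this message for you.

```python
import json
from itertools import count

_request_ids = count(1)


def build_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 request for the MCP `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Argument names below are hypothetical; see the API Reference for the real schema.
request = build_tool_call(
    "store_context",
    {"thread_id": "task-1", "text": "Build failed on step 3", "tags": ["ci"]},
)
payload = json.dumps(request)
```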

Docker Deployment

For production deployments with HTTP transport and container orchestration, Docker Compose configurations are available for SQLite, PostgreSQL, and external PostgreSQL (Supabase). See the Docker Deployment Guide for setup instructions and client connection details.

Kubernetes Deployment

For Kubernetes deployments, a Helm chart is provided with configurable values for different environments. See the Helm Deployment Guide for installation instructions, or the Kubernetes Deployment Guide for general Kubernetes concepts.

Authentication

For HTTP transport deployments requiring authentication, see the Authentication Guide for bearer token configuration.
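Bearer token authentication generally means the client sends an `Authorization: Bearer <token>` header and the server compares the token against a configured secret. The sketch below shows that check in isolation. The function names are hypothetical and this is not the server's implementation; how the expected token is configured is covered in the Authentication Guide.

```python
import hmac


def extract_bearer_token(header_value):
    """Return the token from a 'Bearer <token>' header value, or None."""
    if not header_value:
        return None
    scheme, _, token = header_value.partition(" ")
    if scheme.lower() != "bearer" or not token.strip():
        return None
    return token.strip()


def is_authorized(header_value, expected_token):
    """Compare the presented token with the expected one in constant time."""
    token = extract_bearer_token(header_value)
    if token is None:
        return False
    return hmac.compare_digest(token.encode(), expected_token.encode())


ok = is_authorized("Bearer s3cret", "s3cret")
```

Using `hmac.compare_digest` instead of `==` avoids leaking information about the token through timing differences.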

Getting Help

- Bug reports: Report a bug

- Feature requests: Suggest a feature

- Documentation issues: Report a docs issue

- Questions: Ask a question
