Reexpress

Enable Similarity-Distance-Magnitude statistical verification for your search, software, and data science workflows

Reexpress Model-Context-Protocol (MCP) Server

For tool-calling LLMs (e.g., Claude Opus 4.7) and MCP clients running on macOS (Tahoe 26 or later on Apple silicon) or Linux

Video overview (see footnote 1): Here

Reexpress MCP Server is a drop-in solution to add state-of-the-art statistical verification to your complex LLM pipelines, as well as your everyday use of LLMs for search and QA for software development and data science settings. It's the first reliable, statistically robust AI second opinion for your AI workflows.

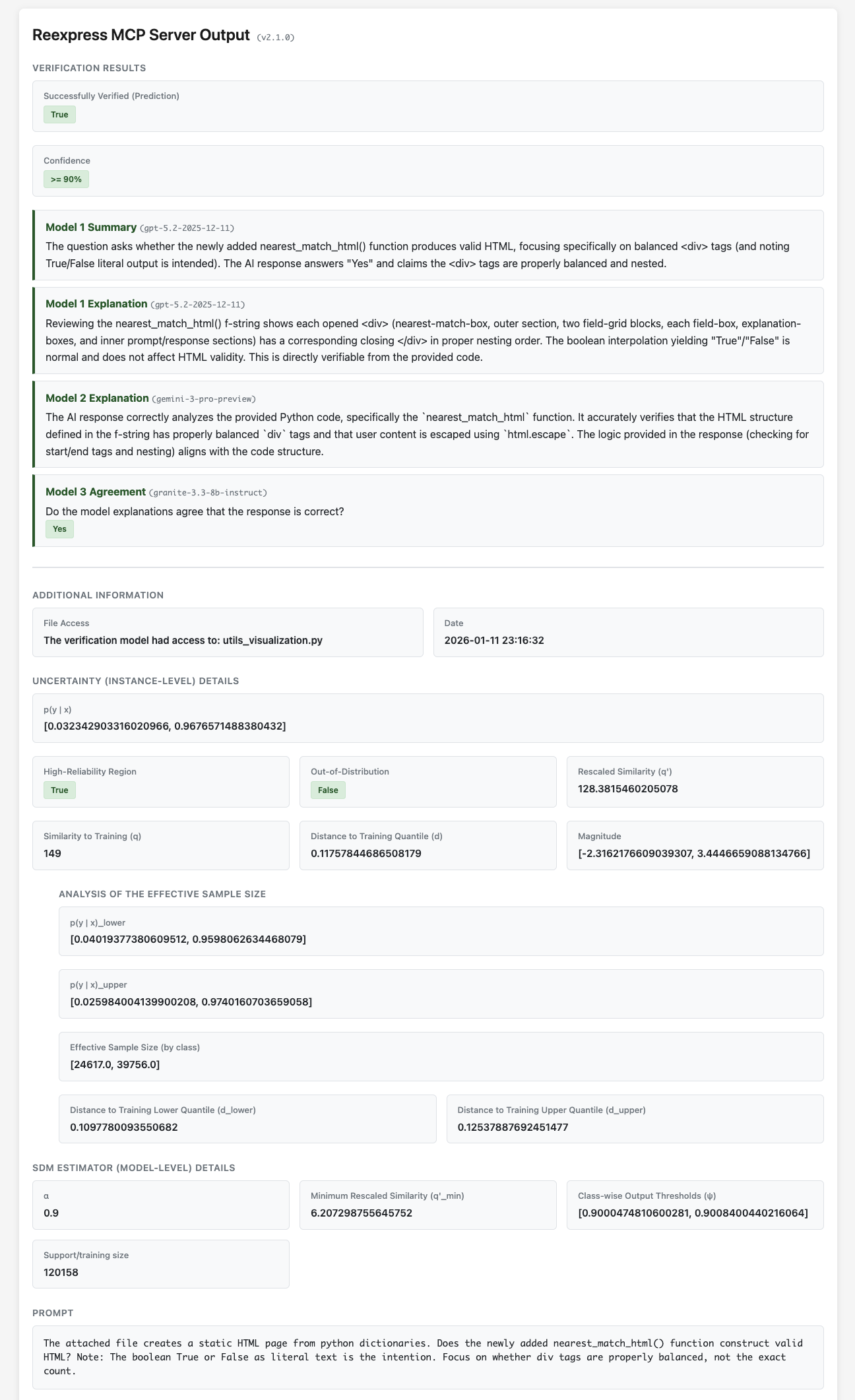

Simply install the MCP server and then add the Reexpress prompt to the end of your chat text. The tool-calling LLM (e.g., Anthropic's Claude Opus 4.7) will then check its response with the provided pre-trained Reexpress Similarity-Distance-Magnitude (SDM) estimator. The estimator ensembles gpt-5.4-2026-03-05, gemini-3.1-pro-preview, and gemini-embedding-2, along with the output from the tool-calling LLM, and calculates a robust estimate of the predictive uncertainty against a database of training and calibration examples from the OpenVerification1 dataset. Unique to the Reexpress method, you can easily adapt the model to your tasks: call the ReexpressAddTrue or ReexpressAddFalse tool after a verification has completed, and future calls to the Reexpress tool will dynamically take your updates into account when calculating the verification probability. We also include the training scripts for the model, so that you can run a full retraining when more substantive changes are needed or when you want to use alternative underlying LLMs.
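To build intuition for how adding a labeled example can change later estimates, the following toy sketch keeps a small set of labeled vectors and estimates from the nearest ones. It is only an illustration under stated assumptions, not the SDM estimator: `ToySupportSet`, its methods, the two-dimensional vectors, and the nearest-neighbor vote are all hypothetical and do not reflect the Reexpress implementation or the signatures of the ReexpressAddTrue and ReexpressAddFalse tools.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class ToySupportSet:
    """Hypothetical stand-in for a database of labeled examples."""

    def __init__(self):
        self.examples = []  # list of (vector, verified) pairs

    def add(self, vector, verified):
        """Store one labeled example (loosely analogous to user feedback)."""
        self.examples.append((vector, verified))

    def estimate(self, query, k=3):
        """Fraction of the k most similar stored examples labeled verified."""
        if not self.examples:
            return None
        ranked = sorted(
            self.examples,
            key=lambda example: cosine_similarity(query, example[0]),
            reverse=True,
        )
        top = ranked[:k]
        return sum(1 for _, verified in top if verified) / len(top)


store = ToySupportSet()
store.add([1.0, 0.0], True)
store.add([0.9, 0.1], True)
store.add([0.0, 1.0], False)

query = [0.1, 0.9]
before = store.estimate(query)

# Two new negative examples close to the query (toy user feedback).
store.add([0.1, 0.9], False)
store.add([0.2, 0.8], False)
after = store.estimate(query)

print(f"estimate before feedback: {before:.2f}, after feedback: {after:.2f}")
```

The point of the sketch is only that the estimate for the same query moves once nearby labeled examples are added, without retraining anything.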

[!NOTE] In addition to providing you (the user) with a principled estimate of confidence in the output given your instructions, the tool-calling LLM itself can use the verification output to progressively refine its answer, determine whether it needs additional outside resources or tools, or recognize that it has reached an impasse and needs to ask you for further clarification or information. That's what we call reasoning with SDM verification: an entirely new capability in the AI toolkit that we think will open up a much broader range of use-cases for LLMs and LLM agents, for both individuals and enterprises.

Data is only sent via standard LLM API calls to Azure/OpenAI and Google, with the gemini-3.1-pro-preview calls given standard web search access through the API; all of the processing for the SDM estimator is done locally on your computer. Reexpress MCP has a simple and conservative, but effective, file access system: You control which additional files (if any) get sent to the LLM APIs by explicitly specifying files via the file-access tools ReexpressDirectorySet() and ReexpressFileSet().
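The opt-in idea behind this kind of file access can be sketched as an explicit allowlist. This is a minimal illustration, assuming a policy where nothing is shared unless it is named explicitly and lives under a chosen directory. The class `ExplicitFileAllowlist` and its methods are hypothetical, and the actual semantics of ReexpressDirectorySet() and ReexpressFileSet() may differ.

```python
from pathlib import Path


class ExplicitFileAllowlist:
    """Hypothetical opt-in policy: no file is shared unless explicitly named."""

    def __init__(self):
        self.directory = None
        self.files = []

    def set_directory(self, directory):
        """Choose the directory files may come from; clears earlier selections."""
        self.directory = Path(directory).resolve()
        self.files = []

    def set_file(self, filename):
        """Explicitly allow one file inside the chosen directory."""
        if self.directory is None:
            raise ValueError("set a directory before selecting files")
        candidate = (self.directory / filename).resolve()
        # Reject paths that escape the chosen directory, e.g. via "..".
        if self.directory not in candidate.parents:
            raise ValueError(f"{filename!r} is outside the allowed directory")
        self.files.append(candidate)

    def files_to_send(self):
        """Return only the files that were explicitly selected."""
        return list(self.files)


policy = ExplicitFileAllowlist()
print(policy.files_to_send())  # nothing is shared by default

policy.set_directory("/tmp/project")
policy.set_file("notes.md")
print([path.name for path in policy.files_to_send()])
```

The design choice illustrated here is that the default is an empty set: a file only becomes eligible after an explicit call, and a path that resolves outside the chosen directory is refused.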

What's new in version 2.3.0.preview

The model card is available here.

Version 2.3.0.preview uses gpt-5.4-2026-03-05 and gemini-3.1-pro-preview as the model ensemble, replacing gpt-5.2-2025-12-11 and gemini-3-pro-preview. Additionally, gemini-embedding-2 replaces the local granite-3.3-8b-instruct model. This greatly simplifies running the Server, since you no longer need to locally run a multi-billion parameter model.

Additional notes in changelog.md.

System Requirements

The MCP server runs on Linux and macOS. The primary requirement is that the machine running the MCP server needs to be able to locally run a small 3 million parameter PyTorch model, so the compute requirements are minimal. (That is as written: Only 3 million parameters; not 3 billion parameters. The model consists of an SDM activation over gemini-embedding-2 and the classification output of the two API language models.)
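As a rough back-of-the-envelope check on why the compute requirements are minimal, the sketch below estimates the memory needed just to hold the weights. It assumes 32-bit floating point weights (4 bytes per parameter, PyTorch's default dtype) and ignores activations, the example database, and framework overhead. The helper name is ours.

```python
def parameter_memory_mib(num_parameters, bytes_per_parameter=4):
    """Memory for the weights alone, in MiB (assumes float32 by default)."""
    return num_parameters * bytes_per_parameter / (1024 ** 2)


small_mib = parameter_memory_mib(3_000_000)      # 3 million parameters
large_mib = parameter_memory_mib(3_000_000_000)  # 3 billion, for contrast

print(f"3 million parameters: {small_mib:.1f} MiB")
print(f"3 billion parameters: {large_mib / 1024:.1f} GiB")
```

Under these assumptions the weights of a 3 million parameter model occupy about 11.4 MiB, versus roughly 11.2 GiB for a 3 billion parameter model.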

Installation

See INSTALL.md.

[!TIP] The Reexpress MCP server is straightforward to set up relative to other MCP servers, but we assume some familiarity with LLMs, MCP, and command-line tools. Our target audience is developers and data scientists. Only add other MCP servers from sources that you trust, and keep in mind that other MCP tools could alter the behavior of our MCP server in unexpected ways.

Configuration options

See CONFIG.md.

How to Use

See documentation/HOW_TO_USE.md.

Generating static HTML with output from the tool call

See documentation/OUTPUT_HTML.md.

Guidelines

See documentation/GUIDELINES.md.

FAQ

See documentation/FAQ.md.

Training and Calibration Data

Evaluation over OpenVerification1

System Demonstration Paper

A copy of our system demonstration paper "Introspectable, Updatable, and Uncertainty-aware Classification of Language Model Instruction-following", which focuses in particular on version 2.1.0 of the Reexpress MCP Server, is included here. The support scripts to replicate the analysis are included here.

The model card for version 2.3.0.preview is available here.

Citation

If you find this software useful, consider citing the following peer-reviewed papers:

@misc{Schmaltz-2025-SimilarityDistanceMagnitudeActivations,
  title={Similarity-Distance-Magnitude Activations},
  author={Allen Schmaltz},
  year={2025},
  eprint={2509.12760},
  archivePrefix={arXiv},
  primaryClass={cs.LG},
  url={https://arxiv.org/abs/2509.12760},
  note={To appear in \emph{Findings of the Association for Computational Linguistics: ACL 2026}, San Diego, CA, USA.},
}

@misc{Schmaltz-2026-ReexpressMCPServer,
  title={Introspectable, Updatable, and Uncertainty-aware Classification of Language Model Instruction-following},
  author={Allen Schmaltz},
  year={2026},
  note={To appear in the \emph{1st ACM Conference on AI and Agentic Systems: Demos (ACM CAIS'26 Demos)}, San Jose, CA, USA.},
}

Footnotes

1. The output format has changed since v1.0.0, the version used in the video. See changelog.md.
