WolframAlpha LLM

Answer math and other queries using the WolframAlpha LLM API.

WolframAlpha LLM MCP Server

A Model Context Protocol (MCP) server that provides access to WolframAlpha's LLM API. API documentation: https://products.wolframalpha.com/llm-api/documentation

Features

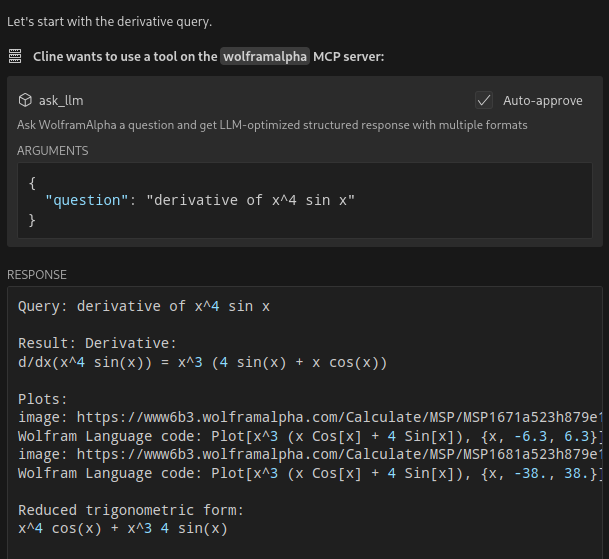

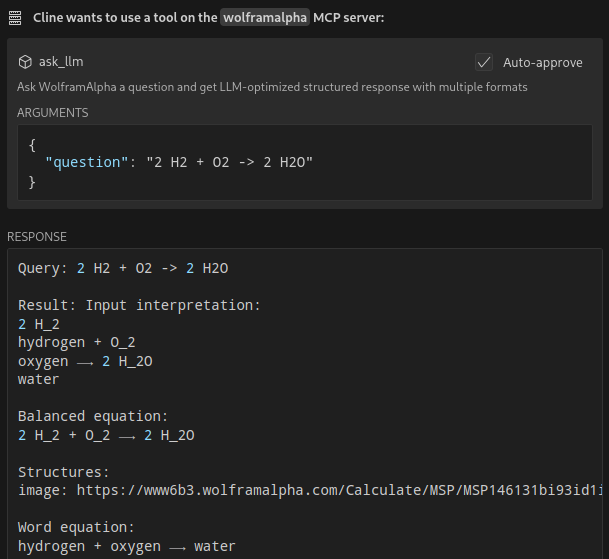

- Query WolframAlpha's LLM API with natural language questions

- Answer complicated mathematical questions

- Query facts about science, physics, history, geography, and more

- Get structured responses optimized for LLM consumption

- Support for simplified answers and detailed responses with sections

Available Tools

- ask_llm: Ask WolframAlpha a question and get a structured, LLM-friendly response

- get_simple_answer: Get a simplified answer

- validate_key: Validate the WolframAlpha API key
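To make the tool descriptions more concrete, here is a minimal sketch of the kind of HTTP request a tool such as ask_llm could send to the LLM API. The endpoint is the one shown in Wolfram's LLM API documentation; the helper names `buildLlmApiUrl` and `askWolfram` are hypothetical and are not taken from this server's source, which may structure the call differently.

```typescript
// Endpoint as shown in Wolfram's LLM API documentation.
const LLM_API_ENDPOINT = "https://www.wolframalpha.com/api/v1/llm-api";

// Hypothetical helper: builds the request URL for a natural-language query.
// URLSearchParams takes care of percent-encoding the query text.
function buildLlmApiUrl(query: string, appId: string): string {
  if (query.trim() === "") {
    throw new Error("query must not be empty");
  }
  const url = new URL(LLM_API_ENDPOINT);
  url.searchParams.set("input", query);
  url.searchParams.set("appid", appId);
  return url.toString();
}

// Hypothetical helper: sends the query and returns the plain-text response body.
// Assumes a runtime with a global fetch (for example Node.js 18 or later).
async function askWolfram(query: string, appId: string): Promise<string> {
  const response = await fetch(buildLlmApiUrl(query, appId));
  if (!response.ok) {
    throw new Error(`WolframAlpha request failed with HTTP ${response.status}`);
  }
  return response.text();
}
```

Keeping URL construction separate from the network call means the encoding logic can be checked without an API key, while `askWolfram` needs a valid key and network access.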

Installation

git clone https://github.com/Garoth/wolframalpha-llm-mcp.git

cd wolframalpha-llm-mcp

npm install

Configuration

- Get your WolframAlpha API key from developer.wolframalpha.com

- Add it to your Cline MCP settings file inside VSCode's settings (e.g. ~/.config/Code/User/globalStorage/saoudrizwan.claude-dev/settings/cline_mcp_settings.json):

{
  "mcpServers": {
    "wolframalpha": {
      "command": "node",
      "args": ["/path/to/wolframalpha-mcp-server/build/index.js"],
      "env": {
        "WOLFRAM_LLM_APP_ID": "your-api-key-here"
      },
      "disabled": false,
      "autoApprove": [
        "ask_llm",
        "get_simple_answer",
        "validate_key"
      ]
    }
  }
}
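The "env" block in the settings entry is passed to the server process as environment variables. As an illustration only, a server could check for the key at startup along these lines; `readAppId` is a hypothetical helper, and the actual server may validate the key differently (for example through the validate_key tool).

```typescript
// Hypothetical helper, not taken from the server's source.
// Takes the environment as a plain object so it can be exercised without a real process.
function readAppId(env: Record<string, string | undefined>): string {
  const appId = env["WOLFRAM_LLM_APP_ID"]?.trim();
  // Treat a missing value, an empty value, or the README placeholder as "not configured".
  if (!appId || appId === "your-api-key-here") {
    throw new Error(
      'WOLFRAM_LLM_APP_ID is not set; add it to the "env" block of the MCP settings entry'
    );
  }
  return appId;
}

// Typical use inside a Node.js entry point (not executed here):
//   const appId = readAppId(process.env);
```

Failing early with a message that names the variable makes a misconfigured settings entry easier to spot than an HTTP error on the first query.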

Development

Setting Up Tests

The tests use real API calls to ensure accurate responses. To run the tests:

- Copy the example environment file:

  cp .env.example .env

- Edit .env and add your WolframAlpha API key:

  WOLFRAM_LLM_APP_ID=your-api-key-here

  Note: The .env file is gitignored to prevent committing sensitive information.

- Run the tests:

  npm test

Building

npm run build

License

MIT
