ArchiveNET

A context insertion and search server for Claude Desktop and Cursor IDE, using configurable API endpoints.

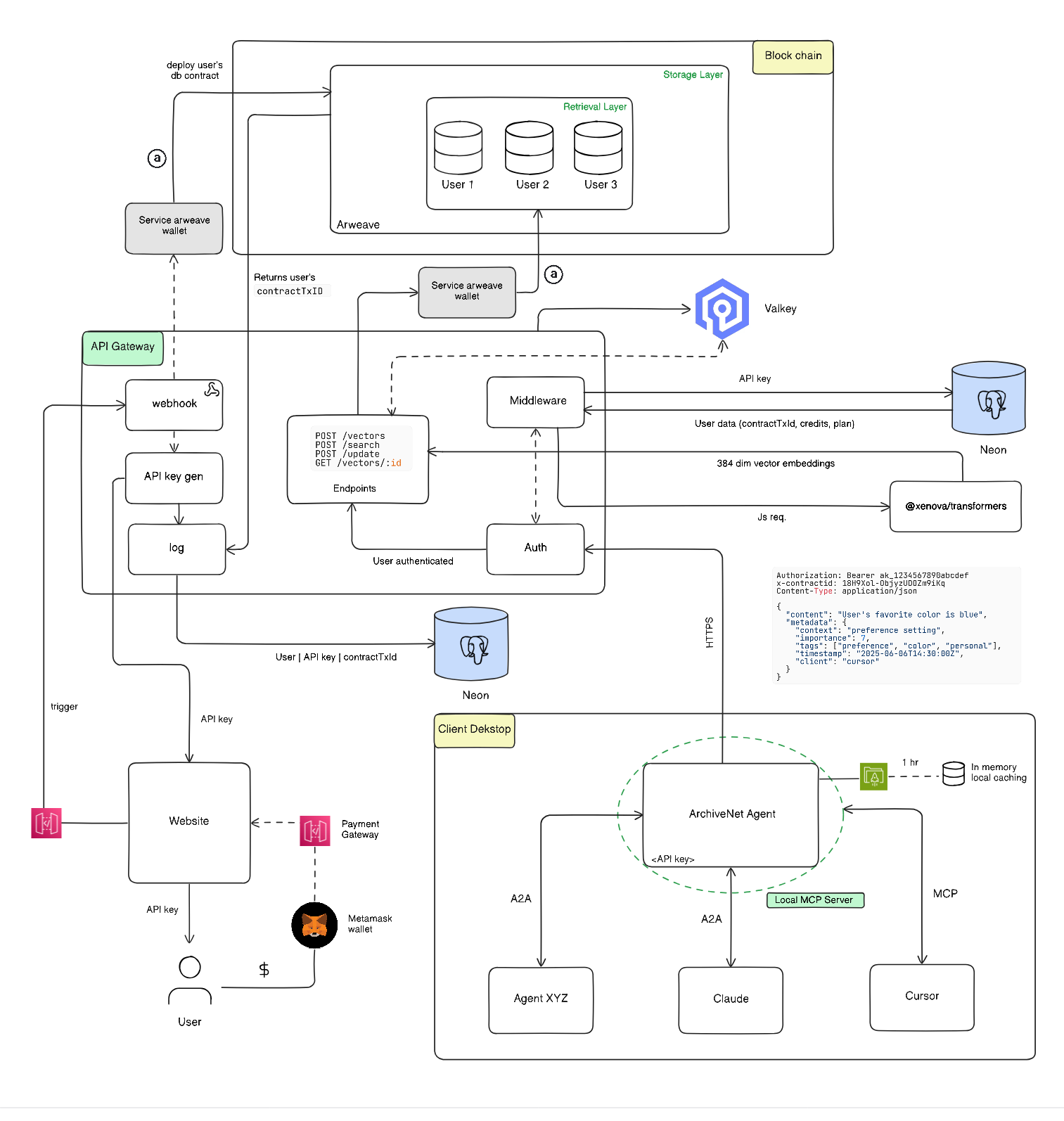

Where AI memories live forever - A decentralized semantic memory platform powered by blockchain and vector search

ArchiveNET is a decentralized memory management platform that combines AI embeddings with permanent blockchain storage. Built on Arweave, it provides semantic search through a vector database, so applications can store, search, and retrieve contextual information that persists permanently.

Key Features

- Semantic Memory: AI-powered contextual memory storage and retrieval

- Blockchain Persistence: Permanent storage on the Arweave blockchain

- Vector Search: State-of-the-art HNSW algorithm for similarity search

- High Performance: Approximately O(log N) search complexity, designed for millions of vectors

- Decentralized: No single point of failure or censorship

- Rich Metadata: Comprehensive metadata support for enhanced search

- Enterprise-Ready: Production-grade API with authentication and monitoring

Architecture

ArchiveNET is a comprehensive monorepo consisting of four main components:

Eizen - Vector Database Engine

A decentralized vector engine built on the Arweave blockchain, billed by the project as the first of its kind. It implements the Hierarchical Navigable Small World (HNSW) algorithm for approximate nearest neighbor search.

Key Features:

- HNSW algorithm with approximately O(log N) search complexity

- Blockchain-based persistence via HollowDB

- Protobuf encoding for efficient storage

- Database-agnostic interface

- Handles millions of high-dimensional vectors
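To give a feel for how HNSW-style search works, here is a simplified sketch of the greedy walk that HNSW repeats on each of its layers. It is illustrative only and is not Eizen's actual code: the `GraphNode` type, the `greedySearch` function, and the choice of cosine distance are all assumptions made for this example. A full HNSW index stacks several such graphs, with sparser upper layers used to find a good entry point for the denser layers below.

```typescript
// Simplified single-layer greedy search (hypothetical names, not Eizen's API).

type Vector = number[];

interface GraphNode {
  id: number;
  vector: Vector;
  neighbors: number[]; // ids of connected nodes
}

// Cosine distance: 0 for the same direction, up to 2 for opposite directions.
function cosineDistance(a: Vector, b: Vector): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return 1 - dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Start at entryId, repeatedly move to the neighbor closest to the query,
// and stop when no neighbor is closer than the current node.
function greedySearch(
  graph: Map<number, GraphNode>,
  entryId: number,
  query: Vector
): number {
  let currentId = entryId;
  let currentDist = cosineDistance(graph.get(currentId)!.vector, query);
  while (true) {
    let bestId = currentId;
    let bestDist = currentDist;
    for (const neighborId of graph.get(currentId)!.neighbors) {
      const d = cosineDistance(graph.get(neighborId)!.vector, query);
      if (d < bestDist) {
        bestId = neighborId;
        bestDist = d;
      }
    }
    if (bestId === currentId) {
      return currentId; // local minimum: no neighbor improves on this node
    }
    currentId = bestId;
    currentDist = bestDist;
  }
}

// Tiny example graph: three 2D vectors connected in a chain 0 - 1 - 2.
const exampleGraph = new Map<number, GraphNode>([
  [0, { id: 0, vector: [1, 0], neighbors: [1] }],
  [1, { id: 1, vector: [1, 1], neighbors: [0, 2] }],
  [2, { id: 2, vector: [0, 1], neighbors: [1] }],
]);

const nearestId = greedySearch(exampleGraph, 0, [0.1, 1]);
console.log(nearestId); // 2
```

Because each step only inspects the neighbors of one node, the walk touches a small part of the graph, which is where the sub-linear search cost comes from. The result is approximate: a greedy walk can stop at a local minimum, which is why real implementations keep a candidate list rather than a single node.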

API - Backend Service

A robust Express.js API service providing semantic memory management with AI-powered search capabilities.

Stack:

- Express.js with TypeScript

- Neon PostgreSQL with Drizzle ORM

- EizenDB for vector operations

- Redis for caching

- JWT authentication

- Comprehensive validation with Zod

Frontend - Web Interface

A modern Next.js application providing an intuitive interface for memory management and search operations.

Features:

- React-based UI with TypeScript

- Real-time search capabilities

- Memory visualization

- User dashboard

- Responsive design

Eva - MCP Agent

The central Model Context Protocol (MCP) server that orchestrates memory operations and provides intelligent context management.

Quick Start

Prerequisites

- Node.js 18+

- Docker & Docker Compose

- PostgreSQL database

- Redis server

- Arweave wallet

Installation

- Clone the repository:

git clone https://github.com/s9swata/archivenet.git
cd ArchiveNET

- Start with Docker Compose:

docker-compose up -d

- Manual setup (alternative):

# API Setup
cd API
npm install
npm run build
npx drizzle-kit push
npm run dev

# Frontend Setup
cd ../client
npm install
npm run dev

Configuration

Create .env files in the respective directories:

API/.env:

DATABASE_URL=your_postgres_url

REDIS_URL=redis://localhost:6379

JWT_SECRET=your_jwt_secret

ARWEAVE_WALLET_PATH=./data/wallet.json
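A missing variable is easier to diagnose at startup than at the first database or wallet call. The following is a minimal sketch of such a check, assuming the four variables above are all required. The `ApiConfig` type and `loadConfig` function are hypothetical names for this example and are not part of the ArchiveNET codebase.

```typescript
// Hypothetical startup check for the variables listed in API/.env.

interface ApiConfig {
  databaseUrl: string;
  redisUrl: string;
  jwtSecret: string;
  arweaveWalletPath: string;
}

const REQUIRED_KEYS = [
  "DATABASE_URL",
  "REDIS_URL",
  "JWT_SECRET",
  "ARWEAVE_WALLET_PATH",
] as const;

// Takes the environment as a plain object so it can be checked without
// touching the real process environment.
function loadConfig(env: Record<string, string | undefined>): ApiConfig {
  // Treat unset and empty-string values as missing.
  const missing = REQUIRED_KEYS.filter((key) => !env[key]);
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(", ")}`);
  }
  return {
    databaseUrl: env["DATABASE_URL"] as string,
    redisUrl: env["REDIS_URL"] as string,
    jwtSecret: env["JWT_SECRET"] as string,
    arweaveWalletPath: env["ARWEAVE_WALLET_PATH"] as string,
  };
}
```

In a Node.js service this would typically be called once as `loadConfig(process.env)` before the server starts listening.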

API Integration

// Store a memory
const response = await fetch("/api/memories", {
  method: "POST",
  headers: { "Content-Type": "application/json" },
  body: JSON.stringify({
    content: "Project discussion about AI integration",
    metadata: { project: "AI-Platform", priority: "high" },
  }),
});

// Search memories
const searchResults = await fetch("/api/memories/search", {
  method: "POST",
  headers: { "Content-Type": "application/json" },
  body: JSON.stringify({
    query: "AI project discussions",
    limit: 10,
  }),
});
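The two calls above share the same shape, so they can be wrapped in a small typed helper that also checks the HTTP status. This is a sketch under stated assumptions: the endpoint paths and body fields mirror the examples above, but every function and type name here is hypothetical, and the response shape is left as `unknown` because it is not documented in this README. Deployments with JWT authentication enabled will presumably also need an `Authorization` header, which is not shown.

```typescript
// Hypothetical typed wrapper around the two endpoints shown above.

interface StoreMemoryBody {
  content: string;
  metadata?: Record<string, string>;
}

interface SearchBody {
  query: string;
  limit?: number;
}

interface JsonRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

// Minimal subset of the fetch signature that this helper relies on.
type FetchLike = (
  url: string,
  init: JsonRequest["init"]
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

// Build a JSON POST request; trailing slashes on baseUrl are dropped so the
// joined URL never contains "//" before the path.
function buildJsonPost(baseUrl: string, path: string, payload: unknown): JsonRequest {
  return {
    url: `${baseUrl.replace(/\/+$/, "")}${path}`,
    init: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify(payload),
    },
  };
}

function storeMemoryRequest(baseUrl: string, body: StoreMemoryBody): JsonRequest {
  return buildJsonPost(baseUrl, "/api/memories", body);
}

function searchMemoriesRequest(baseUrl: string, body: SearchBody): JsonRequest {
  return buildJsonPost(baseUrl, "/api/memories/search", body);
}

// Send the request and fail loudly on non-2xx responses instead of
// silently returning an error payload.
async function postJson(fetchImpl: FetchLike, request: JsonRequest): Promise<unknown> {
  const response = await fetchImpl(request.url, request.init);
  if (!response.ok) {
    throw new Error(`Request to ${request.url} failed with status ${response.status}`);
  }
  return response.json();
}
```

In Node.js 18+ or a browser, the global `fetch` can be passed as `fetchImpl`, for example `postJson(fetch, searchMemoriesRequest(baseUrl, { query: "AI project discussions", limit: 10 }))`, where `baseUrl` is wherever the API is deployed. Taking `fetchImpl` as a parameter also makes the helper easy to exercise with a stub in tests.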

Contributing

- Fork the repository

- Create a feature branch (git checkout -b feature/amazing-feature)

- Commit your changes (git commit -m 'Add amazing feature')

- Push to the branch (git push origin feature/amazing-feature)

- Open a Pull Request

Documentation

- Developer Guide - Comprehensive API documentation

- Backend Integration - Backend implementation examples

- Eizen Engine - Vector database engine details

- HNSW Guide - Algorithm implementation details

License

This project is licensed under the MIT License - see the LICENSE file for details.

Made with ❤️ for the decentralized AI future
