Locust MCP Server

An MCP server for running Locust load tests. Configure test parameters like host, users, and spawn rate via environment variables.

🚀 ⚡️ locust-mcp-server

A Model Context Protocol (MCP) server implementation for running Locust load tests. This server enables seamless integration of Locust load testing capabilities with AI-powered development environments.

✨ Features

- Simple integration with the Model Context Protocol framework

- Support for headless and UI modes

- Configurable test parameters (users, spawn rate, runtime)

- Easy-to-use API for running Locust load tests

- Real-time test execution output

- HTTP/HTTPS protocol support out of the box

- Custom task scenarios support

🔧 Prerequisites

Before you begin, ensure you have the following installed:

- Python 3.13 or higher

- uv package manager (Installation guide)

📦 Installation

- Clone the repository:

git clone https://github.com/qainsights/locust-mcp-server.git

- Install the required dependencies:

uv pip install -r requirements.txt

- Set up environment variables (optional):

Create a .env file in the project root:

LOCUST_HOST=http://localhost:8089 # Default host for your tests

LOCUST_USERS=3 # Default number of users

LOCUST_SPAWN_RATE=1 # Default user spawn rate

LOCUST_RUN_TIME=10s # Default test duration
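As a rough illustration of how these variables could feed into a test run, the sketch below reads the four variables and falls back to the defaults listed above when one is missing. The names LocustDefaults and load_defaults are hypothetical and are not part of locust-mcp-server. The sketch also assumes the variables are already present in the process environment, for example because a tool such as python-dotenv loaded them from .env.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class LocustDefaults:
    """Hypothetical container for the four documented settings."""
    host: str
    users: int
    spawn_rate: int
    run_time: str


def load_defaults(env=None) -> LocustDefaults:
    """Read LOCUST_* variables, using the documented defaults when unset."""
    # Accept any mapping so the function can be exercised without touching os.environ.
    env = os.environ if env is None else env
    return LocustDefaults(
        host=env.get("LOCUST_HOST", "http://localhost:8089"),
        users=int(env.get("LOCUST_USERS", "3")),
        spawn_rate=int(env.get("LOCUST_SPAWN_RATE", "1")),
        run_time=env.get("LOCUST_RUN_TIME", "10s"),
    )


if __name__ == "__main__":
    print(load_defaults())
```

Passing a plain dictionary, such as load_defaults({"LOCUST_USERS": "25"}), overrides a single value while the other settings keep their defaults.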

🚀 Getting Started

- Create a Locust test script (e.g., hello.py):

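import time

from locust import HttpUser, task, between

class QuickstartUser(HttpUser):
    # Each simulated user waits 1 to 5 seconds between tasks.
    wait_time = between(1, 5)

    @task
    def hello_world(self):
        self.client.get("/hello")
        self.client.get("/world")

    # Weight 3: picked three times as often as hello_world.
    @task(3)
    def view_items(self):
        for item_id in range(10):
            # Group all item requests under a single "/item" stats entry.
            self.client.get(f"/item?id={item_id}", name="/item")
            time.sleep(1)

    def on_start(self):
        # Runs once per simulated user before any task is scheduled.
        self.client.post("/login", json={"username":"foo", "password":"bar"})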

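- Configure the MCP server in your MCP client (Claude Desktop, Cursor, Windsurf, and others) using the following configuration, replacing the two paths with the location of uv and of the cloned repository on your machine: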

{
  "mcpServers": {
    "locust": {
      "command": "/Users/naveenkumar/.local/bin/uv",
      "args": [
        "--directory",
        "/Users/naveenkumar/Gits/locust-mcp-server",
        "run",
        "locust_server.py"
      ]
    }
  }
}

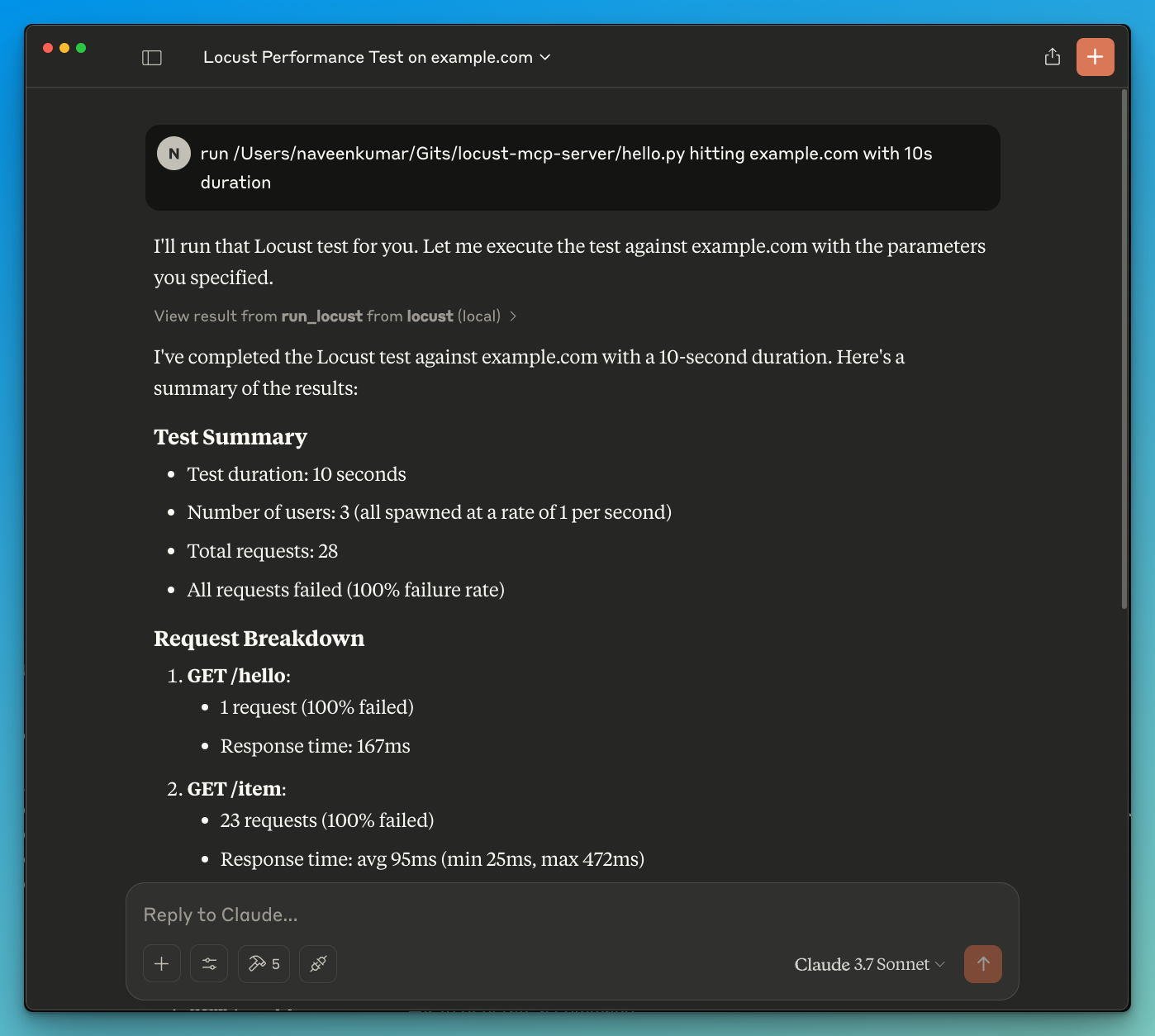

- Now ask the LLM to run the test, e.g., "run locust test for hello.py". The Locust MCP server will use the following tool to start the test:

run_locust: Run a test with configurable options for headless mode, host, runtime, users, and spawn rate
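The client configuration in the steps above contains absolute paths from the author's machine. The sketch below shows one possible way to generate an equivalent entry for your own setup. The function name build_mcp_config is hypothetical, and "~/locust-mcp-server" is only a placeholder for wherever you cloned the repository.

```python
import json
import shutil
from pathlib import Path


def build_mcp_config(repo_dir, uv_path=None) -> dict:
    """Return an mcpServers entry shaped like the example configuration."""
    # Look uv up on PATH when no explicit path is given; keep the bare
    # command name if it cannot be found.
    uv = uv_path or shutil.which("uv") or "uv"
    return {
        "mcpServers": {
            "locust": {
                "command": str(uv),
                "args": [
                    "--directory",
                    str(Path(repo_dir).expanduser()),
                    "run",
                    "locust_server.py",
                ],
            }
        }
    }


if __name__ == "__main__":
    # Placeholder location; adjust to your own clone of the repository.
    print(json.dumps(build_mcp_config("~/locust-mcp-server"), indent=2))
```

The printed JSON can then be pasted into the MCP client's configuration file.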

📝 API Reference

Run Locust Test

run_locust(
    test_file: str,
    headless: bool = True,
    host: str = "http://localhost:8089",
    runtime: str = "10s",
    users: int = 3,
    spawn_rate: int = 1
)

Parameters:

- test_file: Path to your Locust test script

- headless: Run in headless mode (True) or with UI (False)

- host: Target host to load test

- runtime: Test duration (e.g., "30s", "1m", "5m")

- users: Number of concurrent users to simulate

- spawn_rate: Rate at which users are spawned
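To make the parameters more concrete, here is a minimal sketch of how they might map onto the standard Locust command-line flags (-f, --host, --users, --spawn-rate, --headless, --run-time). This is an assumption for illustration, not the server's actual implementation, and build_locust_command is a hypothetical helper name.

```python
import shlex


def build_locust_command(
    test_file: str,
    headless: bool = True,
    host: str = "http://localhost:8089",
    runtime: str = "10s",
    users: int = 3,
    spawn_rate: int = 1,
) -> list[str]:
    """Translate run_locust-style arguments into a locust CLI argument list."""
    cmd = [
        "locust",
        "-f", test_file,
        "--host", host,
        "--users", str(users),
        "--spawn-rate", str(spawn_rate),
    ]
    if headless:
        # In this sketch the run time is only passed in headless mode;
        # with the web UI the test is started and stopped from the browser.
        cmd += ["--headless", "--run-time", runtime]
    return cmd


if __name__ == "__main__":
    # Print the command as a single shell-quoted string.
    print(shlex.join(build_locust_command("hello.py", users=10, runtime="30s")))
```

Returning a list rather than a single string keeps the arguments safe to pass to subprocess.run without shell quoting issues.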

✨ Use Cases

- LLM-powered results analysis

- Effective debugging with the help of an LLM

🤝 Contributing

Contributions are welcome! Please feel free to submit a Pull Request.

📄 License

This project is licensed under the MIT License - see the LICENSE file for details.
